Fellow science communicators, think you can explain everything that goes on in your field? If so, I have a challenge for you. Pick a day, and go through all the new papers on arXiv.org in a single area. For each one, try to give a general-audience explanation of what the paper is about. To make it easier, you can ignore cross-listed papers. If your field doesn’t use arXiv, consider whether you can do the challenge with another appropriate site.

I’ll start. I’m looking at papers in the “High Energy Physics – Theory” area, announced 6 Jan, 2022. I’ll warn you in advance that I haven’t read these papers, just their abstracts, so apologies if I get your paper wrong!

arXiv:2201.01303 : Holographic State Complexity from Group Cohomology

This paper says it is a contribution to a Proceedings. That means it is based on a talk given at a conference. In my field, a talk like this usually won’t be presenting new results, but instead summarizes results in a previous paper. So keep that in mind.

There is an idea in physics called holography, where two theories are secretly the same even though they describe the world with different numbers of dimensions. Usually this involves a gravitational theory in a “box”, and a theory without gravity that describes the sides of the box. The sides turn out to fully describe the inside of the box, much like a hologram looks 3D but can be printed on a flat sheet of paper. Using this idea, physicists have connected some properties of gravity to properties of the theory on the sides of the box. One of those properties is complexity: the complexity of the theory on the sides of the box says something about gravity inside the box, in particular about the size of wormholes. The trouble is, “complexity” is a bit subjective: it’s not clear how to define it well for this type of theory. In this paper, the author studies a theory with a precise mathematical definition, called a topological theory. This theory turns out to have mathematical properties that suggest a well-defined notion of complexity for it.

arXiv:2201.01393 : Nonrelativistic effective field theories with enhanced symmetries and soft behavior

We sometimes describe quantum field theory as quantum mechanics plus relativity. That’s not quite true though, because it is possible to define a quantum field theory that doesn’t obey special relativity, a non-relativistic theory. Physicists do this if they want to describe a system moving much slower than the speed of light: it gets used sometimes for nuclear physics, and sometimes for modeling colliding black holes.

In particle physics, a “soft” particle is one with almost no momentum. We can classify theories based on how they behave when a particle becomes more and more soft. In normal quantum field theories, special behavior when a particle becomes soft is often due to a symmetry of the theory, where the theory looks the same even if something changes. This paper shows that this is not true for non-relativistic theories: they need to satisfy more requirements than just symmetry to have special soft behavior. The authors “bootstrap” a few theories, using some general restrictions to find them without first knowing how they work (“pulling them up by their own bootstraps”), and show that the theories they find are in a certain sense unique, the only theories of that kind.

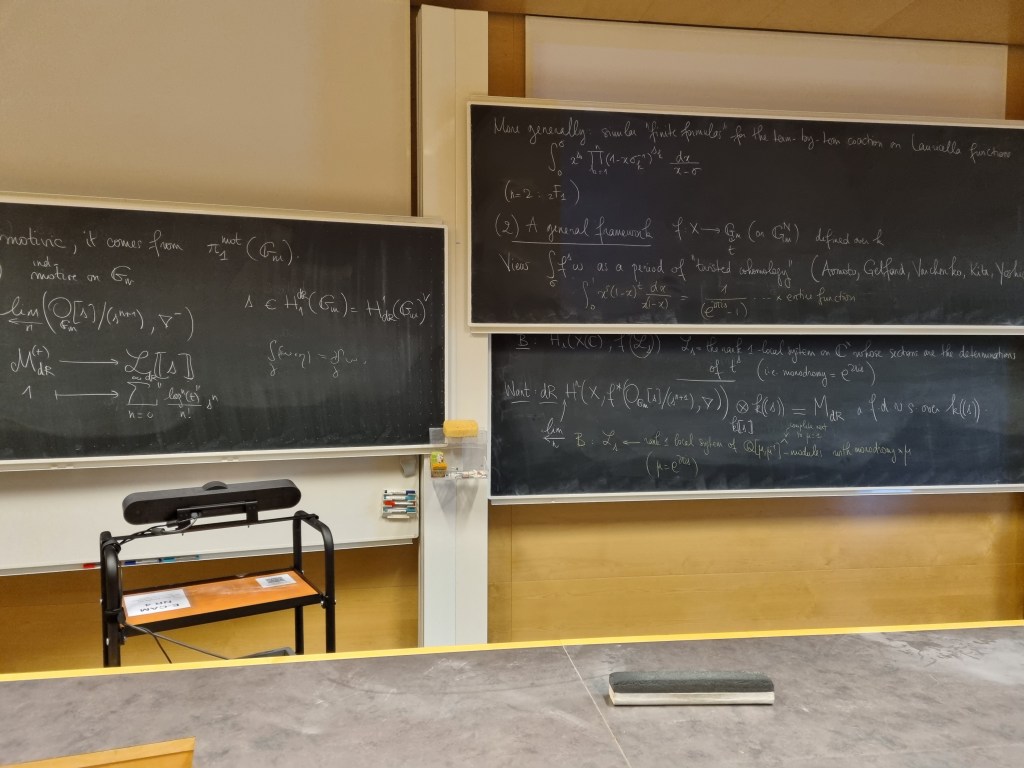

arXiv:2201.01552 : Transmutation operators and expansions for 1-loop Feynman integrands

In recent years, physicists in my sub-field have found new ways to calculate the probability that particles collide. One of these methods describes ordinary particles in a way resembling string theory, and it led to the discovery of a whole “web” of theories linked together by small modifications of the method. The method originally worked only for the simplest Feynman diagrams, the “tree” diagrams that correspond to classical physics, but it was later extended to the next-simplest diagrams, those with one “loop”, which start incorporating quantum effects.

This paper concerns a particular spinoff of this method, one that can find relationships between certain one-loop calculations in a particularly efficient way. It lets you express calculations of particle collisions in a variety of theories in terms of collisions in a very simple theory. Unlike the original method, it doesn’t rely on any particular picture of how these collisions work, either Feynman diagrams or strings.

arXiv:2201.01624 : Moduli and Hidden Matter in Heterotic M-Theory with an Anomalous U(1) Hidden Sector

In string theory (and its more sophisticated cousin M-theory), our four-dimensional world is described as a world with more dimensions, where the extra dimensions are twisted up so that they cannot be detected. The shape of the extra dimensions influences the kinds of particles we can observe in our world. That shape is described by variables called “moduli”. If those moduli are stable, then the properties of the particles we observe are fixed. If they are not stable, those properties can change. In general it is a challenge in string theory to stabilize these moduli and get a world like the one we observe.

This paper discusses shapes that give rise to a “hidden sector”, a set of particles that are disconnected from the particles we know so that they are hard to observe. Such particles are often proposed as a possible explanation for dark matter. This paper calculates, for a particular kind of shape, what the masses of different particles are, as well as how different kinds of particles can decay into each other. For example, a particle that causes inflation (the rapid, accelerating expansion of the early universe) can decay into effects on the moduli and dark matter. The paper also shows how some of the moduli are made stable in this picture.

arXiv:2201.01630 : Chaos in Celestial CFT

One variant of the holography idea I mentioned earlier is called “celestial” holography. In this picture, the sides of the box are an infinite distance away: a “celestial sphere” depicting the angles at which particles fly off after they collide, in the same way a star chart depicts the angles between stars. Recent work has shown that there is something like a sensible theory that describes physics on this celestial sphere, one that contains all the information about what happens inside.

This paper shows that the celestial theory has a property called quantum chaos. In physics, a theory is said to be chaotic if it depends very sensitively on its initial conditions, so that even a small change at the start will result in a large change later (the usual metaphor is a butterfly flapping its wings and causing a hurricane). This kind of behavior appears to be present in this theory.
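If you want to see this kind of sensitivity for yourself, here is a minimal sketch using the logistic map, a standard classical toy model of chaos. To be clear, this is my own illustration and has nothing to do with the paper's calculation: quantum chaos in a celestial theory is diagnosed quite differently. The function names, the starting value 0.2, and the nudge of 1e-10 are all arbitrary choices made for the example.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x) and return every value visited.

    r = 4.0 is the textbook fully chaotic setting of the logistic map.
    """
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def max_separation(x0, delta, steps):
    """Largest gap between two runs whose starting points differ by delta."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + delta, steps)
    return max(abs(p - q) for p, q in zip(a, b))


if __name__ == "__main__":
    # Two starting points that differ by one part in ten billion.
    for steps in (5, 20, 40, 80):
        print(steps, max_separation(0.2, 1e-10, steps))
```

Running it shows the gap between the two runs staying tiny for the first few steps and then growing until the runs have nothing to do with each other, which is the butterfly effect in miniature.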

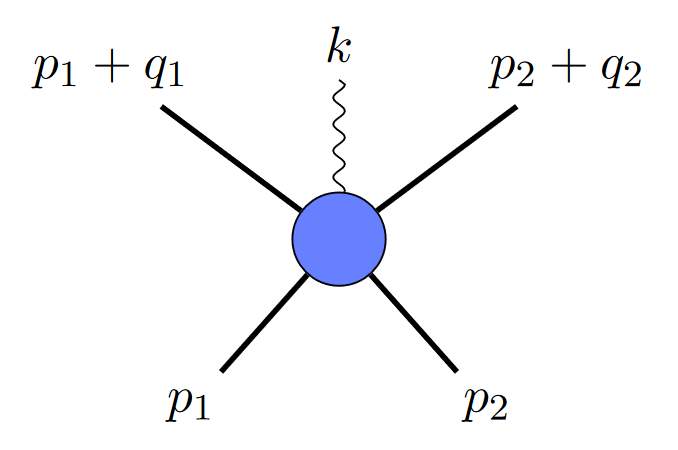

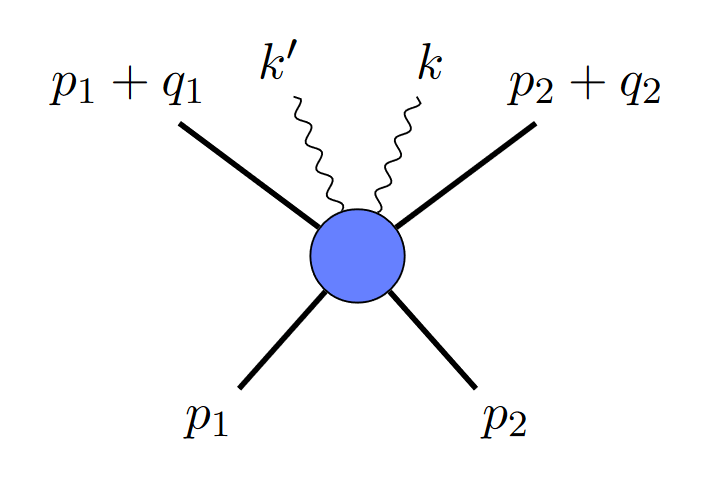

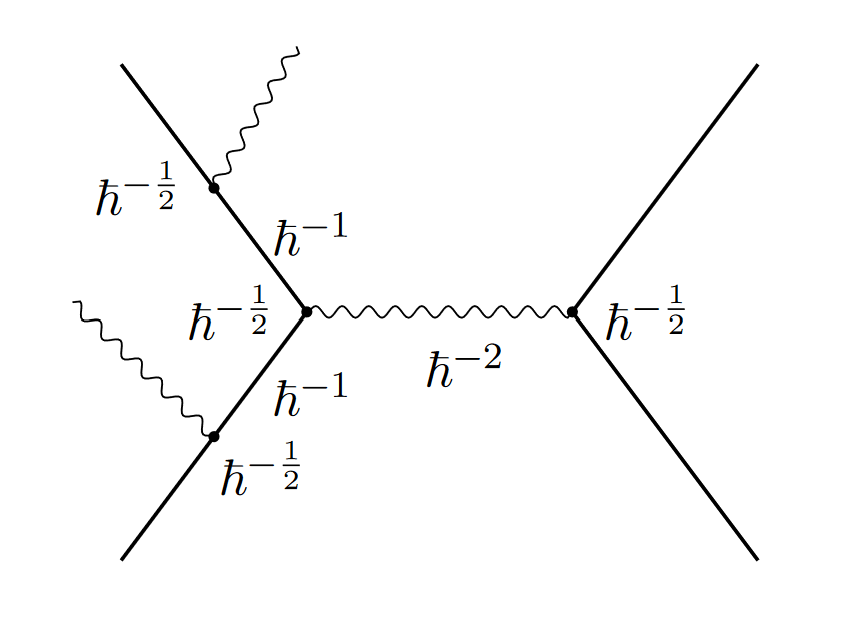

arXiv:2201.01657 : Calculations of Delbrück scattering to all orders in αZ

Delbrück scattering is an effect where the nuclei of heavy elements like lead can deflect high-energy photons, as a consequence of quantum field theory. This effect is apparently tricky to calculate, and previous calculations have involved approximations. This paper finds a way to calculate the effect without those approximations, which should let it match better with experiments.

(As an aside, I’m a little confused by the claim that they’re going to all orders in αZ when it looks like they just consider one-loop diagrams…but this is probably just my ignorance: this is a corner of the field quite distant from my own.)

arXiv:2201.01674 : On Unfolded Approach To Off-Shell Supersymmetric Models

Supersymmetry is a relationship between two types of particles: fermions, which typically make up matter, and bosons, which are usually associated with forces. In realistic theories this relationship is “broken” and the two types of particles have different properties, but theoretical physicists often study models where supersymmetry is “unbroken” and the two types of particles have the same mass and charge. This paper finds a new way of describing some theories of this kind that reorganizes them in an interesting way, using an “unfolded” approach in which aspects of the particles that would normally be combined are given their own separate variables.

(This is another one I don’t know much about: this is the first time I’ve heard of the unfolded approach.)

arXiv:2201.01679 : Geometric Flow of Bubbles

String theorists have conjectured that only some types of theories can be consistently combined with a full theory of quantum gravity, while others live in a “swampland” of non-viable theories. One set of conjectures characterizes this swampland in terms of “flows” in which theories with different geometry can flow into each other. The properties of these flows are supposed to be related to which theories are or are not in the swampland.

This paper writes down equations describing these flows, and applies them to some toy model “bubble” universes.

arXiv:2201.01697 : Graviton scattering amplitudes in first quantisation

This paper is a pedagogical one, introducing graduate students to a topic rather than presenting new research.

Usually in quantum field theory we do something called “second quantization”, thinking about the world not in terms of particles but in terms of fields that fill all of space and time. However, sometimes one can instead use “first quantization”, which is much more similar to ordinary quantum mechanics. There you think of a single particle traveling along a “world-line”, and calculate the probability it interacts with other particles in particular ways. This approach has recently been used to calculate interactions of gravitons, particles related to the gravitational field in the same way photons are related to the electromagnetic field. The approach has some advantages in terms of simplifying the results, which are described in this paper.