You’ve probably seen it somewhere on your Facebook feed, likely shared by a particularly wide-eyed friend: pi found hidden in the hydrogen atom!

From the headlines, this sounds like some sort of kabbalistic nonsense, like finding the golden ratio in random pictures.

Read the actual articles, and the story is a bit more reasonable. The last two I linked above seem to be decent takes on it; they’re just saddled with ridiculous headlines. As usual, I blame the editors. This time, they’ve obscured an interesting point about the link between physics and mathematics.

So what does “pi found hidden in the hydrogen atom” actually mean?

It doesn’t mean that there’s some deep importance to the number pi in nature, beyond its relevance in mathematics in general. The reason that pi is showing up here isn’t especially deep.

It isn’t trivial either, though. I’ve seen a few people whose first response to this article was “of course they found pi in the hydrogen atom, hydrogen atoms are spherical!” That’s not what’s going on here. The connection isn’t about the shape of the hydrogen atom; it’s about one particular technique for estimating its energy.

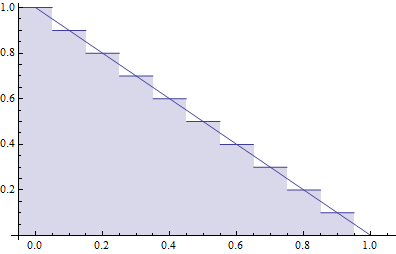

Carl Hagen is a physicist at the University of Rochester. While teaching a quantum mechanics class, he covered a well-known approximation technique called the variational principle and had his students apply it to the hydrogen atom. The nice thing about the hydrogen atom is that it’s one of the few atoms simple enough that its energy levels can be found exactly, so the approximation can be compared against the exact calculation.
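To give a flavor of what the variational principle looks like in practice, here is a minimal sketch in Python. It assumes the textbook exercise of guessing a Gaussian trial wavefunction, exp(-alpha r^2), for the hydrogen ground state in atomic units; that choice and the function names are mine for illustration, not necessarily what Hagen’s class did. For this guess the energy works out to E(alpha) = 3*alpha/2 - 2*sqrt(2*alpha/pi), and the best estimate is the minimum over alpha.

```python
import math

# Assumption: Gaussian trial wavefunction exp(-alpha * r**2) for the hydrogen
# ground state, in atomic units (energies in Hartree). Illustrative only.

EXACT_GROUND_STATE = -0.5  # exact hydrogen ground-state energy in Hartree


def trial_energy(alpha: float) -> float:
    """Energy expectation value for the Gaussian trial wavefunction."""
    kinetic = 1.5 * alpha
    potential = -2.0 * math.sqrt(2.0 * alpha / math.pi)
    return kinetic + potential


def minimize_energy(lo: float = 1e-3, hi: float = 5.0, steps: int = 200):
    """Ternary search for the alpha that minimizes trial_energy.

    This works because trial_energy has a single minimum on (0, infinity).
    """
    for _ in range(steps):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if trial_energy(m1) < trial_energy(m2):
            hi = m2
        else:
            lo = m1
    alpha = 0.5 * (lo + hi)
    return alpha, trial_energy(alpha)


if __name__ == "__main__":
    alpha, energy = minimize_energy()
    print("best alpha:", alpha)
    print("variational estimate:", energy)
    print("exact value:", EXACT_GROUND_STATE)
```

The minimum can also be found by hand: it sits at alpha = 8/(9 pi) and gives an energy of -4/(3 pi), about 15% above the exact -1/2. The estimate always lands above the true ground-state energy, which is what makes the method useful, and in this particular guess pi enters simply through the normalization of a Gaussian.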

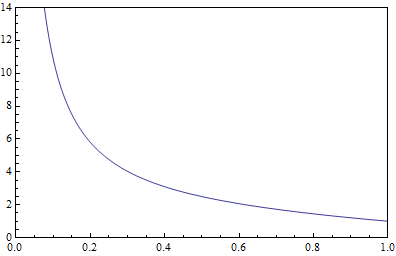

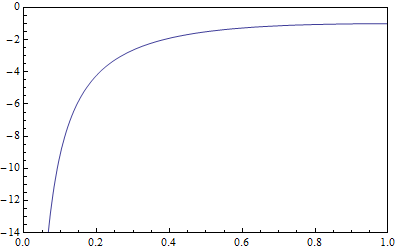

What Hagen noticed was that this approximation was surprisingly good, especially for high-energy states, where it wasn’t expected to be. In the end, working with Rochester math professor Tamar Friedmann, he figured out that the variational principle was making use of a particular identity involving a class of mathematical functions, called Gamma functions, that are quite common in physics. Using those Gamma functions, the two researchers were able to re-derive what turned out to be a 17th century formula for pi, giving rise to a much cleaner proof of that formula than had been known previously.
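The 17th century formula in question is, as far as I’m aware, the Wallis product, which writes pi/2 as an infinite product of simple fractions: (2/1)(2/3)(4/3)(4/5)(6/5)(6/7) and so on. If you want to watch it converge, here is a small Python sketch; the function name is just something I made up for illustration.

```python
import math


def wallis_partial(n_terms: int) -> float:
    """Partial Wallis product: product over k = 1..n_terms of 4k^2 / (4k^2 - 1).

    As n_terms grows, this approaches pi / 2 from below.
    """
    product = 1.0
    for k in range(1, n_terms + 1):
        four_k_sq = 4.0 * k * k
        product *= four_k_sq / (four_k_sq - 1.0)
    return product


if __name__ == "__main__":
    for n in (1, 10, 100, 1000, 100000):
        estimate = 2.0 * wallis_partial(n)
        print(n, estimate, math.pi - estimate)
```

The convergence is slow, with the error shrinking only in proportion to one over the number of terms, so nobody would use this to actually compute pi. The interest is in the proof, not the numerics.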

So pi isn’t appearing here because “the hydrogen atom is a sphere”. It’s appearing because pi appears all over the place in physics, and because in general, the same sorts of structures appear again and again in mathematics.

So pi’s appearance in the hydrogen atom is not, in itself, very special. What is a little bit special is that, using the hydrogen atom, these folks were able to find a cleaner proof of an old formula for pi, one that mathematicians hadn’t found before.

That, if anything, is the interesting part of this news story, but it’s also part of a broader trend, one in which physicists provide “physics proofs” for mathematical results. One of the more famous accomplishments of string theory is a class of “physics proofs” of this sort, using a principle called mirror symmetry.

The existence of “physics proofs” doesn’t mean that mathematics is secretly constrained by the physical world. Rather, they’re a result of the fact that physicists are interested in different aspects of mathematics, and in general are a bit more reckless in using approximations that haven’t been mathematically vetted. A physicist can sometimes prove something in just a few lines that mathematicians would take many pages to prove, but usually they do this by invoking a structure that would take much longer for a mathematician to define. As physicists, we’re standing on the shoulders of other physicists, using concepts that mathematicians usually don’t have much reason to bother with. That’s why it’s always interesting when we find something like the Amplituhedron, a clean mathematical concept hidden inside what would naively seem like a very messy construction. It’s also why “physics proofs” like this can happen: we’re dealing with things that mathematicians don’t naturally consider.

So please, ignore the pi-in-the-sky headlines. Some physicists found a trick, some mathematicians found it interesting, the hydrogen atom was (quite tangentially) involved…and no nonsense needs to be present.