My sub-field isn’t big on philosophical debates. We don’t tend to get hung up on how to measure an infinite universe, or argue about how to interpret quantum mechanics. Instead, we develop new calculation techniques, which tends to nicely sidestep all of that.

If there’s anything we do get philosophical about, though, any question with a little bit of ambiguity, it’s this: What counts as an analytic result?

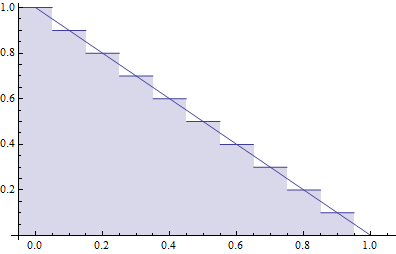

“Analytic” here is in contrast to “numerical”. If all we need is a number and we don’t care if it’s slightly off, we can use numerical methods. We have a computer use some estimation trick, repeating steps over and over again until we have approximately the right answer.

“Analytic”, then, refers to everything else. When you want an analytic result, you want something exact. Most of the time, you don’t just want a single number: you want a function, one that can give you numbers for whichever situation you’re interested in.

It might sound like there’s no ambiguity there. If it’s a function, with sines and cosines and the like, then it’s clearly analytic. If you can only get numbers out through some approximation, it’s numerical. But as the following example shows, things can get a bit more complicated.

Suppose you’re trying to calculate something, and you find the answer is some messy integral. Still, you’ve simplified the integral enough that you can do numerical integration and get some approximate numbers out. What’s more, you can express the integral as an infinite series, so that any finite number of terms will get close to the correct result. Maybe you even know a few special cases, situations where you plug specific numbers in and you do get an exact answer.
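To make that concrete, here is a minimal sketch of such a situation. The integral I picked is my own illustrative choice, not one the argument depends on: the integral of -ln(1-t)/t from 0 to x, which mathematicians call the dilogarithm. The function names `by_quadrature` and `by_series` are hypothetical, chosen just for this example. It shows the same quantity obtained by numerical integration, by a truncated series, and at one special point where an exact closed form is known.

```python
import math

def integrand(t):
    # -ln(1 - t) / t, which tends to 1 as t -> 0
    return -math.log1p(-t) / t if t != 0.0 else 1.0

def by_quadrature(x, n=2000):
    # Composite midpoint rule on [0, x]: approximate, improves as n grows
    h = x / n
    return h * sum(integrand((i + 0.5) * h) for i in range(n))

def by_series(x, terms=60):
    # Truncation of the series sum_{k>=1} x^k / k^2 (valid for |x| <= 1)
    return sum(x**k / k**2 for k in range(1, terms + 1))

x = 0.5
# Known special case: at x = 1/2 the answer is pi^2/12 - (ln 2)^2 / 2
special_case = math.pi**2 / 12 - math.log(2)**2 / 2

print(by_quadrature(x), by_series(x), special_case)
```

All three numbers agree to many digits, but only the last one comes from an exact formula, and only because x = 1/2 happens to be a special point.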

It might sound like you only know the answer numerically. As it turns out, though, this is roughly how your computer handles sines and cosines.

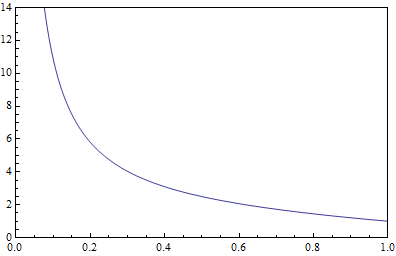

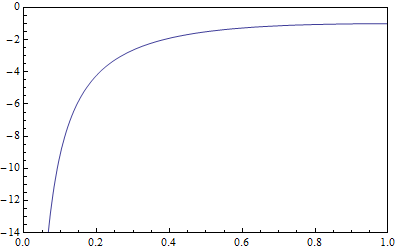

When your computer tries to calculate a sine or a cosine, it doesn’t have access to the exact solution all of the time. It does have some special cases, but the rest of the time it’s using an infinite series, or some other numerical trick. Type a random sine into your calculator and it will be just as approximate as if you did a numerical integration.
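As a rough illustration, here is a sketch of computing a sine from a truncated Taylor series. This is an assumption-laden toy, not how any particular calculator or math library actually does it: real implementations typically use more careful range reduction and tuned polynomial approximations. The name `sine_series` and the choice of 12 terms are mine.

```python
import math

def sine_series(x, terms=12):
    # Fold x into [-pi, pi] using the periodicity of sine
    x = math.remainder(x, 2 * math.pi)
    term = x    # first term of the series: x
    total = x
    for n in range(1, terms):
        # Each term is the previous one times -x^2 / ((2n)(2n+1))
        term *= -x * x / ((2 * n) * (2 * n + 1))
        total += term
    return total

# Compare against the library value for an arbitrary input
print(sine_series(1.0), math.sin(1.0))
```

The result is approximate in exactly the same sense as a numerical integration: adding more terms gets you closer, but you only ever have finitely many of them.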

So what’s the real difference?

Rather than how we get numbers out, think about what else we know. We know how to take derivatives of sines, and how to integrate them. We know how to take their limits and work out their series expansions. And we know their relations to other functions, including how to express them in terms of other things.
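For the sine, that knowledge can be written down in a few lines. These are standard textbook facts, listed here only to show what "knowing a function" looks like in practice:

$$
\begin{aligned}
\frac{d}{dx}\sin x &= \cos x, &
\int \sin x \, dx &= -\cos x + C, \\
\lim_{x \to 0} \frac{\sin x}{x} &= 1, &
\sin x &= \sum_{n=0}^{\infty} \frac{(-1)^n x^{2n+1}}{(2n+1)!}, \\
\sin x &= \frac{e^{ix} - e^{-ix}}{2i}.
\end{aligned}
$$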

If you can do that with your integral, then you’ve probably got an analytic result. If you can’t, then you don’t.

What if you meet only some of the requirements, but not the others? What if you can take derivatives, but don’t know all of the identities between your functions? What if you can do series expansions, but only in some limits? What if you can do all of the above, but can’t get numbers out without a supercomputer?

That’s where the ambiguity sets in.

In the end, whether or not we have the full analytic answer is a matter of degree. The closer we can get to functions that mathematicians have studied and understood, the better grasp we have of our answer and the more “analytic” it is. In practice, we end up with a very pragmatic approach to knowledge: whether we know the answer depends entirely on what we can do with it.