I am a high energy physicist who uses the high energy and low energy limits of a theory that, while valid up to high energies, is also a low-energy description of what at high energies ends up being string theory (string theorists, of course, being high energy physicists as well).

If all of that makes no sense to you, congratulations, you’ve stumbled upon one of the worst-kept secrets of theoretical physics: we really could use a thesaurus.

“High energy” means different things in different parts of physics. In general, “high” versus “low” energy classifies what sort of physics you look at. “High” energy physics corresponds to the very small, while “low” energies encompass larger structures. Many people explain this via quantum mechanics: the uncertainty principle says that the more certain you are of a particle’s position, the less certain you can be of how fast it is going, which would imply that a particle that is highly restricted in location might have very high energy. You can also understand it without quantum mechanics, though: if two things are held close together, it generally has to be by a powerful force, so the bond between them will contain more energy. Another perspective is in terms of light. Physicists will occasionally use “IR”, or infrared, to mean “low energy” and “UV”, or ultraviolet, to mean “high energy”. Infrared light has long wavelengths and low energy photons, while ultraviolet light has short wavelengths and high energy photons, so the analogy is apt. However, the analogy only goes so far, since “UV physics” is often at energies much greater than those of UV light (and the same sort of situation applies for IR).
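To put rough numbers on the light analogy, here is a minimal sketch that converts wavelength to photon energy using E = hc/λ. The band edges are conventional values I'm assuming for illustration (different references draw the lines slightly differently), and the function names are just hypothetical helpers.

```python
# hc expressed in eV·nm (rounded CODATA value), so E[eV] = HC_EV_NM / wavelength[nm]
HC_EV_NM = 1239.84193

def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy in electron-volts of a photon with the given wavelength in nanometres."""
    return HC_EV_NM / wavelength_nm

# Assumed band edges as (shortest, longest) wavelength in nm; conventions vary.
BANDS_NM = {
    "infrared": (700.0, 1.0e6),
    "ultraviolet": (10.0, 400.0),
}

def band_energies_ev(band: str) -> tuple[float, float]:
    """Return (lowest, highest) photon energy in eV for a named band."""
    short_nm, long_nm = BANDS_NM[band]
    # Longer wavelength means lower energy, so the order flips.
    return photon_energy_ev(long_nm), photon_energy_ev(short_nm)

if __name__ == "__main__":
    for name in BANDS_NM:
        low, high = band_energies_ev(name)
        print(f"{name}: {low:.3g} eV to {high:.3g} eV")
```

With these assumed edges, infrared photons come out at roughly a thousandth of an electron-volt up to a couple of electron-volts, and ultraviolet photons at a few electron-volts up to a bit over a hundred.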

So what does “low energy” or “high energy” mean? Well…

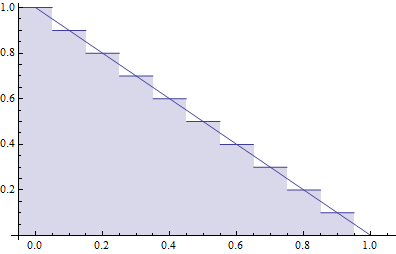

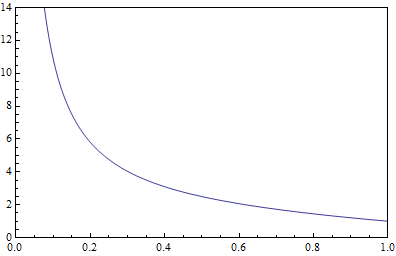

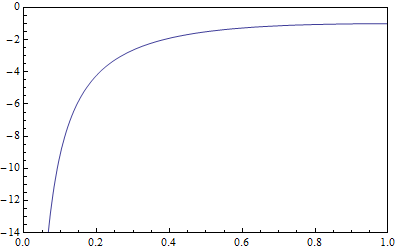

The IR limit: Lowest of the “low energy” points, this refers to the limit of infinitely low energy. While you might compare it to “absolute zero”, really it just refers to energy that’s so low that compared to the other energies you’re calculating with it might as well be zero. This is the “low energy limit” I mentioned in the opening sentence.

Low energy physics: Not “high energy physics”. Low energy physics covers everything from absolute zero up to atoms. Once you get up to high enough energy to break up the nucleus of an atom, you enter…

High energy physics: Also known as “particle physics”, high energy physics refers to the study of the subatomic realm, which also includes objects that aren’t technically particles, like strings and “branes”. If you exclude nuclear physics itself, high energy physics generally refers to energies of a mega-electron-volt and up. For comparison, the electrons in atoms are bound by energies of around an electron-volt, which is the characteristic energy of chemistry, so high energy physics is at least a million times more energetic. That said, high energy physicists are often interested in low energy consequences of their theories, including all the way down to the IR limit. Interestingly, by this point we’ve already passed both infrared light (from a thousandth of an electron-volt to a single electron-volt) and ultraviolet light (several electron-volts to a hundred or so). Compared to UV light, mega-electron-volt scale physics is quite high energy.

The TeV scale: If you’re operating a collider, though, mega-electron-volts (or MeV) are low-energy physics. Often, calculations for colliders will assume that the light quarks, whose masses are around the MeV scale, actually have no mass at all! Instead, high energy for particle colliders means giga (billion) or tera (trillion) electron-volt processes. The LHC, for example, operates at around 7 TeV now, with 14 TeV planned. This is the range of scales where many had hoped to see supersymmetry, but as time has gone on results have pushed speculation up to higher and higher energies. Of course, these are all still low energy from the perspective of…
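To see why treating MeV-scale quarks as massless is harmless at collider energies, here is a small sketch using the relativistic relation E² = p² + m² (in units where c = 1). The 2 MeV mass and 1 TeV momentum are illustrative values I'm assuming, not numbers from any particular calculation.

```python
import math

def energy_mev(momentum_mev: float, mass_mev: float) -> float:
    """Relativistic energy E = sqrt(p^2 + m^2), with everything in MeV and c = 1."""
    return math.sqrt(momentum_mev**2 + mass_mev**2)

def massless_error(momentum_mev: float, mass_mev: float) -> float:
    """Fractional error made by approximating E with p, i.e. by setting m = 0."""
    exact = energy_mev(momentum_mev, mass_mev)
    return (exact - momentum_mev) / exact

# Assumed illustrative inputs: a light quark of about 2 MeV carrying 1 TeV of momentum.
QUARK_MASS_MEV = 2.0
MOMENTUM_MEV = 1.0e6

if __name__ == "__main__":
    err = massless_error(MOMENTUM_MEV, QUARK_MASS_MEV)
    # Leading-order estimate of the same quantity: m^2 / (2 p^2).
    estimate = QUARK_MASS_MEV**2 / (2 * MOMENTUM_MEV**2)
    print(f"fractional error from dropping the mass: {err:.2e}")
    print(f"leading-order estimate m^2/(2p^2):       {estimate:.2e}")
```

Under these assumptions the error is a few parts in a trillion, far below anything a collider measurement would notice. Run the same function with momentum comparable to the mass and the error becomes tens of percent, which is the sense in which "low energy" depends on what you compare against.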

The string scale: Strings are flexible, but under enormous tension that keeps them very, very short. Typically, strings are supposed to be of length close to the Planck length, the characteristic length at which quantum effects become relevant for gravity. This enormously small length corresponds to the enormously large Planck energy, which is on the order of 10²⁸ electron-volts. That’s about ten to the sixteen times the energies of the particles at the LHC, or ten to the twenty-two times the MeV scale that I called “high energy” earlier. For comparison, there are about ten to the twenty-two molecules in a milliliter of water. When extra dimensions in string theory are curled up, they’re usually curled up at this scale. This means that from a string theory perspective, going to the TeV scale means ignoring the high energy physics and focusing on low energy consequences, which is why even the highest mass supersymmetric particles are thought of as low energy physics when approached from string theory.
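The short-length-means-high-energy correspondence and the ratios quoted above can be checked with a few lines of arithmetic. This is only a rough sketch: it uses E ≈ ħc/L as the conversion, rounded constants, and round numbers of 1 TeV and 1 MeV as stand-ins for "the LHC" and "the MeV scale", all of which are my assumptions for illustration.

```python
import math

# hbar * c in eV·m (rounded), so a length L in metres maps to an energy HBAR_C_EV_M / L.
HBAR_C_EV_M = 1.97327e-7
# Planck length in metres (rounded).
PLANCK_LENGTH_M = 1.616e-35

def energy_from_length_ev(length_m: float) -> float:
    """Characteristic energy in eV associated with a length scale, E ~ hbar*c / L."""
    return HBAR_C_EV_M / length_m

def orders_of_magnitude(high_ev: float, low_ev: float) -> float:
    """How many powers of ten separate two energies."""
    return math.log10(high_ev / low_ev)

# Assumed round-number reference scales in eV.
TEV_SCALE_EV = 1.0e12
MEV_SCALE_EV = 1.0e6

if __name__ == "__main__":
    planck_ev = energy_from_length_ev(PLANCK_LENGTH_M)
    print(f"Planck energy: about {planck_ev:.2e} eV")
    print(f"above the TeV scale by ~10^{orders_of_magnitude(planck_ev, TEV_SCALE_EV):.0f}")
    print(f"above the MeV scale by ~10^{orders_of_magnitude(planck_ev, MEV_SCALE_EV):.0f}")
```

The same conversion works in the other direction: plug in a longer length and the associated energy drops, which is the whole "high energy is small, low energy is large" story in one line.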

The UV limit: Much as the IR limit is that of infinitely low energy, the UV limit is the formal limit of infinitely high energy. Again, it’s not so much an actual destination, as a comparative point where the energy you’re considering is much higher than the energy of anything else in your calculation.

These are the definitions of “high energy” and “low energy”, “UV” and “IR” that one encounters most often in theoretical particle physics and string theory. Other parts of physics have their own idea of what constitutes high or low energy, and I encourage you to ask people who study those parts of physics if you’re curious.