It’s been a weird year.

It’s been a weird year for many reasons, of course. But it’s been a particularly weird year for this blog.

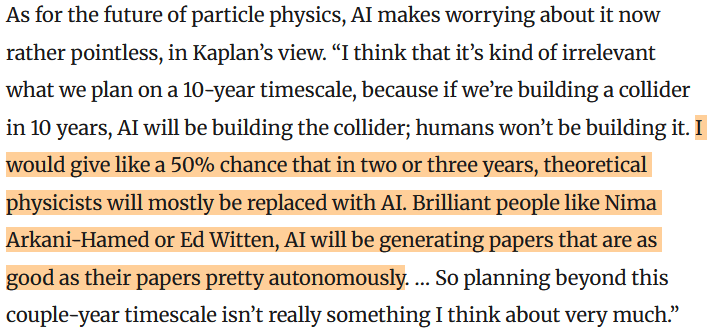

To start, let me show you a more normal year, 2024:

Aside from a small uptick in January due to a certain unexpected announcement, this was a pretty typical year. I got 70-80 thousand views from 30-40 thousand unique visitors, spread fairly evenly throughout the year.

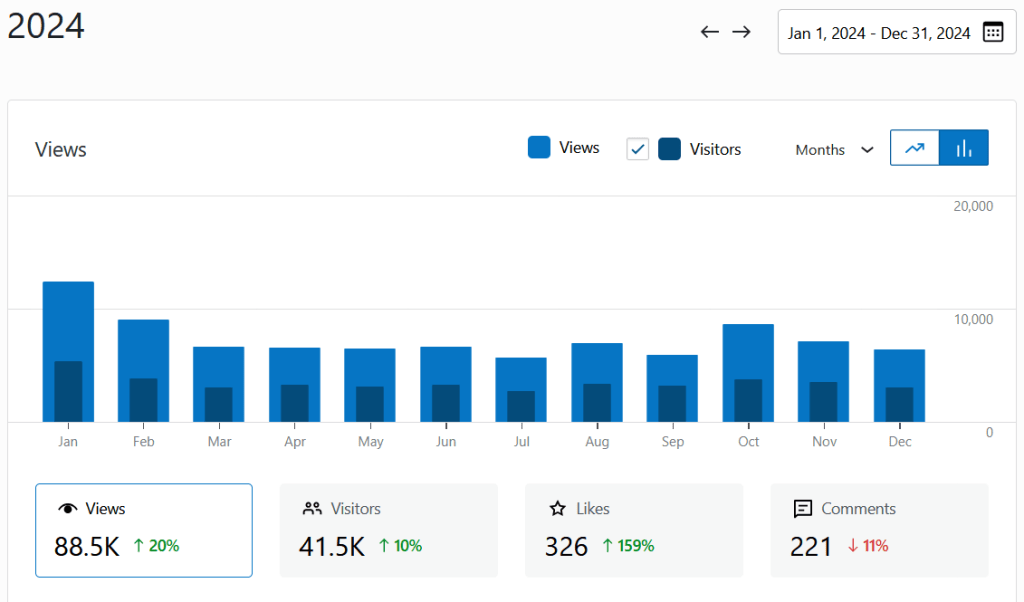

Now, take a look at 2025:

Something started happening this Fall. I went from getting about 6000 views and 3000 visitors in a typical month to roughly five times those numbers.

And for the life of me, I can’t figure out why.

WordPress, the site that hosts this blog, gives me tools to track where my viewers are coming from, and what they’re seeing.

It gives me a list of “referrers”, the other websites where people click on links to mine. Normally, this shows me where people are coming from: if I came up on a popular blog or Reddit post, and people are following a link here. This year, though, the list looks totally normal. No new site is referring these people to me. Either the site they’re coming from is hidden, or they’re typing in my blog’s address by hand.

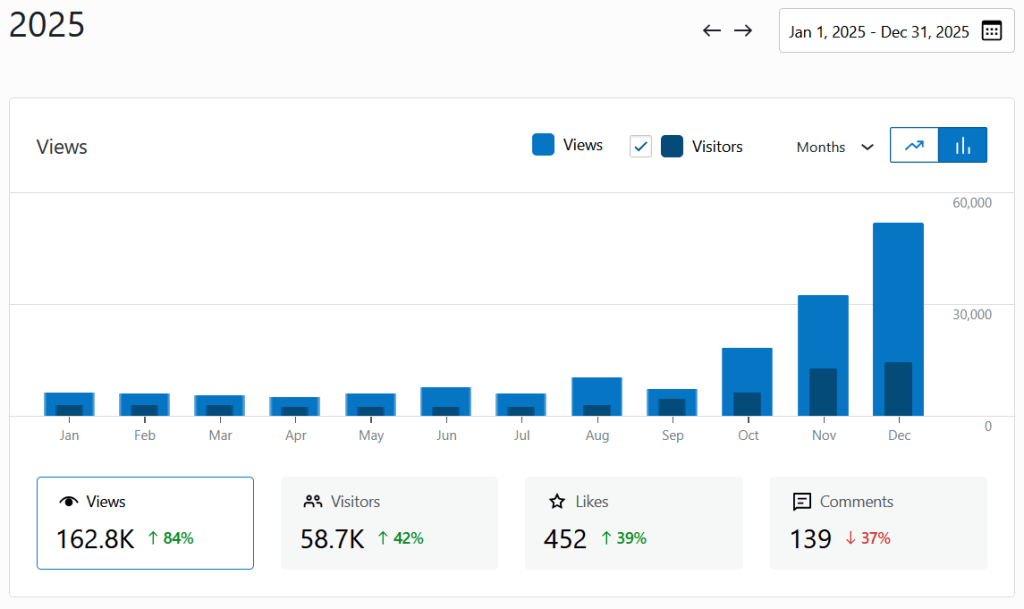

Looking at countries tells me a bit more. In a typical year, I get a bit under half of my views from the US, and the rest from a smattering of other English-speaking or European countries. This year, here’s what those stats look like:

So that tells me something. The new views appear to be coming from China. And what are these new viewers reading?

This year, my top post is a post from 2021, Reality as an Algebra of Observables. It wasn’t particularly popular when it came out, and while I liked the idea behind it, I don’t think I wrote it all that well. It’s not something that suddenly became relevant to the news, or to pop culture. It just suddenly started getting more and more and more views, this Fall:

In second place, a post about the 2022 Nobel Prize follows the same pattern. The pattern continues for a bit, but eventually the posts’ views get more uniform. My post France for Non-EU Spouses of EU Citizens, for example, has no weird pattern of increasing views: it’s just popular.

So far, this is weird. It gets weirder.

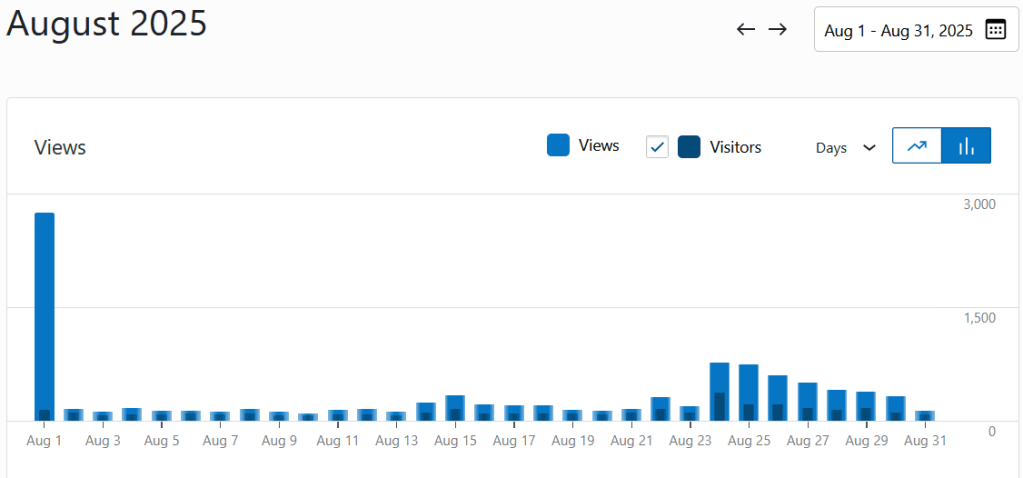

On a lark, I decided to look at the day-by-day statistics, rather than month-by-month. And before the growth really starts to show, I noticed something very strange.

In August, I had a huge number of views on August 1, a third of the month in one day. I had a new post out that day, but that post isn’t the one that gets the most views. Instead…it’s Reality as an Algebra of Observables.

That huge peak is a bit different from the later growth, though. It only shows in views, not in number of visitors. And it’s from the US, not China.

September, in comparison, looks normal. October looks like August, with a huge peak on October 3. This time, most of the views are still from the US, but a decent number are from China, and the visitors number is also higher.

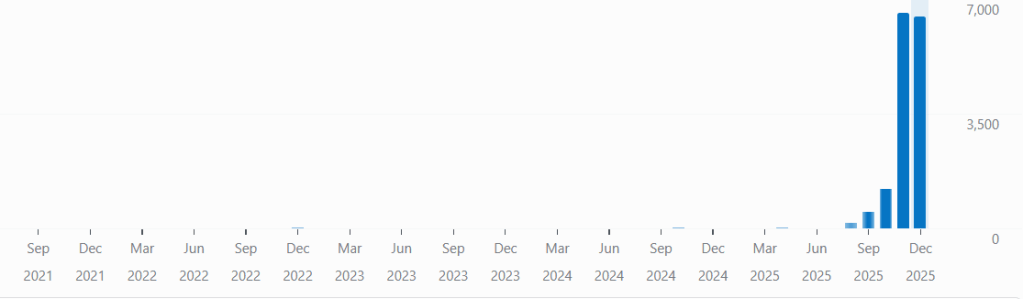

In November, a few days into the month, a new pattern kicks in:

Now, visitors and views are almost equal, as if each visitor shows up, looks at precisely one post, and leaves. The views are overwhelmingly from China, with 27 thousand out of 32 thousand views. And the most popular post, more popular even than my conveniently named 4gravitons.com homepage that usually tops the ratings…is Reality as an Algebra of Observables.

I don’t know what’s going on here, and I welcome speculation. Is this some extremely strange bot, accessing one unremarkable post of mine from a huge number of Chinese IP addresses? Or are there actual people reading this post? Was it shared on a Chinese social media app that WordPress can’t track? Maybe it’s part of a course?

For a while, I’d thought that if I somehow managed to get a lot more views, I could consider monetizing in some way, like opening a Patreon. History blogger Bret Devereaux gets around 140 thousand views on his top posts, and makes about three-quarters of his income from Patreon. If I could get a post a tenth as popular as his, maybe I could start making a little money from this blog?

The thing is, I can only do that if I have some idea of who’s viewing the blog, and what they want. And I don’t know why they want Reality as an Algebra of Observables.