I was recently reminded that Michio Kaku exists.

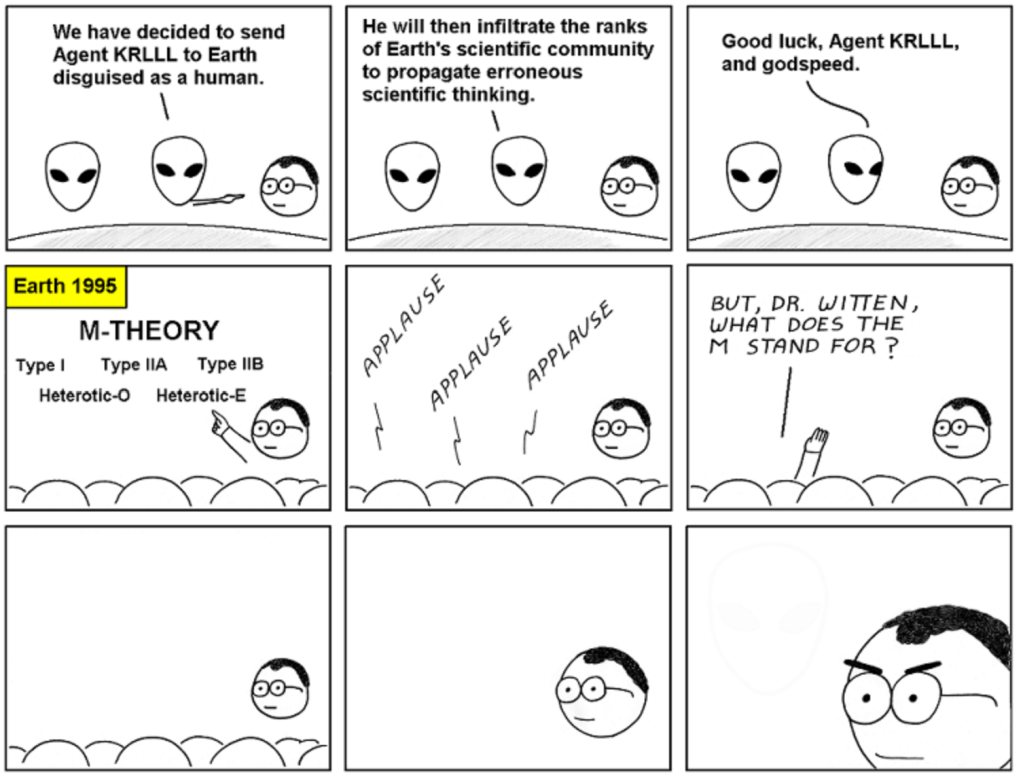

In the past, Michio Kaku made important contributions to string theory, but he’s best known for what could charitably be called science popularization. He’s an excited promoter of physics and technology, but that excitement often strays into inaccuracy. Pretty much every time I’ve heard him mentioned, it’s for some wildly overenthusiastic statement about physics that isn’t just simplified for a general audience but flat-out wrong, conflating a bunch of different developments in a way that makes zero actual sense.

Michio Kaku isn’t unique in this. There’s a whole industry of making nonsense statements about science, with overenthusiastic books and videos hinting at science fiction or mysticism. Deepak Chopra is a famous figure from further along this spectrum, known for peddling loosely quantum-flavored spirituality.

There was a time I was worried about this kind of thing. Super-popular misinformation is the bogeyman of the science popularizer, the worry that for every nice, careful explanation we give, someone else will give a hundred explanations that are way more exciting and total baloney. Somehow, though, I hear less and less from these people over time, and thus worry less and less about them.

Should I be worried more? I’m not sure.

Are these people less popular than they used to be? Is that why I’m hearing less about them? Possibly, but I’d guess not. Michio Kaku has eight hundred thousand Twitter followers. Deepak Chopra has three million. On the other hand, the usually-careful Brian Greene has a million followers, and Neil deGrasse Tyson, about whom the worst I’ve heard is that he can be superficial, has fourteen million.

(But then, in practice, I’m more likely to respond to content with even smaller audiences.)

If misinformation is this popular, shouldn’t I be doing more to combat it?

Popular misinformation is also going to be popular among critics. For every big-time nonsense merchant, there are dozens of people breaking down and debunking every false statement they say, every piece of hype they release. Often, these people will end up saying the same kinds of things over and over again.

If I can be useful, I don’t think it will be by saying the same thing over and over again. I come up with new metaphors, new descriptions, new explanations. I clarify things others haven’t clarified, I clear up misinformation others haven’t addressed. That feels more useful to me, especially in a world where others are already countering the big problems. I write, and writing lasts, and can be used again and again when needed. I don’t need to keep up with the Kakus and Chopras of the world to do that.

(Which doesn’t imply I’ll never address anything one of those people says…but if I do, it will be because I have something new to say back!)