I made a few small modifications to the blog settings this week. Comments now support Markdown, reply chains in the comments can go longer, and there are a few more sharing buttons on the posts. I’m gearing up for a more major revamp of the blog in July, when the name changes over from 4 gravitons and a grad student to just 4 gravitons.

io9 did an article recently on scientific ideas that scientists wish the public would stop misusing. They’ve got a lot of good ones (Proof, Quantum, Organic), but they somehow managed to miss one of the big ones: Energy. Matt Strassler has a nice, precise article on this particular misconception, but nonetheless I think it’s high time I wrote my own.

There’s a whole host of misconceptions regarding energy. Some of them are simple misuses of language, like zero-calorie energy drinks:

Energy can be measured in several different units. You can use Joules, or electron-Volts, or ergs…or calories. Calories are a measure of energy, so zero calories quite literally means zero energy.
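If you want to see that these really are all the same kind of quantity, here is a quick sketch that converts between a few energy units by going through Joules. The names `JOULES_PER_UNIT` and `convert_energy` are made up for this illustration, and the choice of units in the table is mine.

```python
# Conversion factors to joules. The table and function names are
# hypothetical, chosen only for this illustration.
JOULES_PER_UNIT = {
    "joule": 1.0,
    "electron_volt": 1.602176634e-19,  # exact by SI definition
    "erg": 1.0e-7,
    "calorie": 4.184,                  # thermochemical calorie
    "food_calorie": 4184.0,            # the "Calorie" on a nutrition label (1 kcal)
}


def convert_energy(value, from_unit, to_unit):
    """Convert an energy between two units by going through joules."""
    joules = value * JOULES_PER_UNIT[from_unit]
    return joules / JOULES_PER_UNIT[to_unit]


# Zero calories is zero energy, whatever unit you express it in.
zero_calorie_drink = convert_energy(0.0, "food_calorie", "joule")

# One food Calorie expressed in electron-Volts, just to show the scale.
one_food_calorie_in_ev = convert_energy(1.0, "food_calorie", "electron_volt")

print(zero_calorie_drink)
print(f"{one_food_calorie_in_ev:.3e} eV")
```

Zero in one unit is zero in all of them, which is the whole joke.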

Now, that’s not to say the makers of zero-calorie energy drinks are lying. They’re just using a different meaning of energy from the scientific one. Their drinks give you vim and vigor, the get-up-and-go required to make money playing computer games. For most of the public, that “get-up-and-go” is called energy, even if scientifically it’s not.

That’s not really a misconception, more of an amusing use of language. This next one, though, really makes my blood boil.

Raise your hand if you’ve seen a Sci-Fi movie or TV show where some creature is described as being made of “pure energy”. Whether they’re peaceful, ultra-advanced ascended beings, or genocidal maniacs from another dimension, the concept of creatures made of “pure energy” shows up again and again and again.

Even if you aren’t the type to take Sci-Fi technobabble seriously, you’ve probably heard that matter and antimatter annihilate to form energy, or that photons are made out of energy. These sound more reasonable, but they rest on the same fundamental misconception:

Nothing is “made out of energy”.

Rather,

Energy is a property that things have.

Energy isn’t a substance, it isn’t a fluid, it isn’t some kind of nebulous stuff you can make into an indestructible alien body. Things have energy, but nothing is energy.

What about light, then? And what happens when antimatter collides with matter?

Light, just like anything else, has energy. The difference between light and most other things is that light also does not have mass.

In everyday life, we like to think of mass as some sort of basic “stuff”. If things are “made out of mass” or “made out of matter”, and something like light doesn’t have mass, then it must be made out of some other “stuff”, right?

The thing is, mass isn’t really “stuff” any more than energy is. Just like energy, mass is a property that things have. In fact, as I’ve discussed before, mass is really just a type of energy. Specifically, mass is the energy something has when left alone and at rest. That’s the meaning of Einstein’s famous equation, E equals m c squared: it tells you how to take a known mass and calculate the rest energy that it implies.
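To make that concrete, here is a minimal sketch of the calculation: take a mass, multiply by the speed of light squared, and you get the rest energy. The function name `rest_energy_joules` and the one-gram example are my own choices for illustration.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second, exact by SI definition


def rest_energy_joules(mass_kg):
    """Rest energy E = m * c**2 for a mass given in kilograms."""
    return mass_kg * SPEED_OF_LIGHT ** 2


# Example: the rest energy implied by one gram of mass.
one_gram_energy = rest_energy_joules(0.001)
print(f"{one_gram_energy:.3e} J")
```

One gram works out to roughly 9 × 10^13 Joules. The equation doesn’t say the gram is “made of” that energy, only that having that mass means having that much energy at rest.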

In the case of light, all of its energy can be thought of in terms of its (light-speed) motion, so it has no mass. That might tempt you to think of it as being “made of energy”, but really, you and light are not so different.

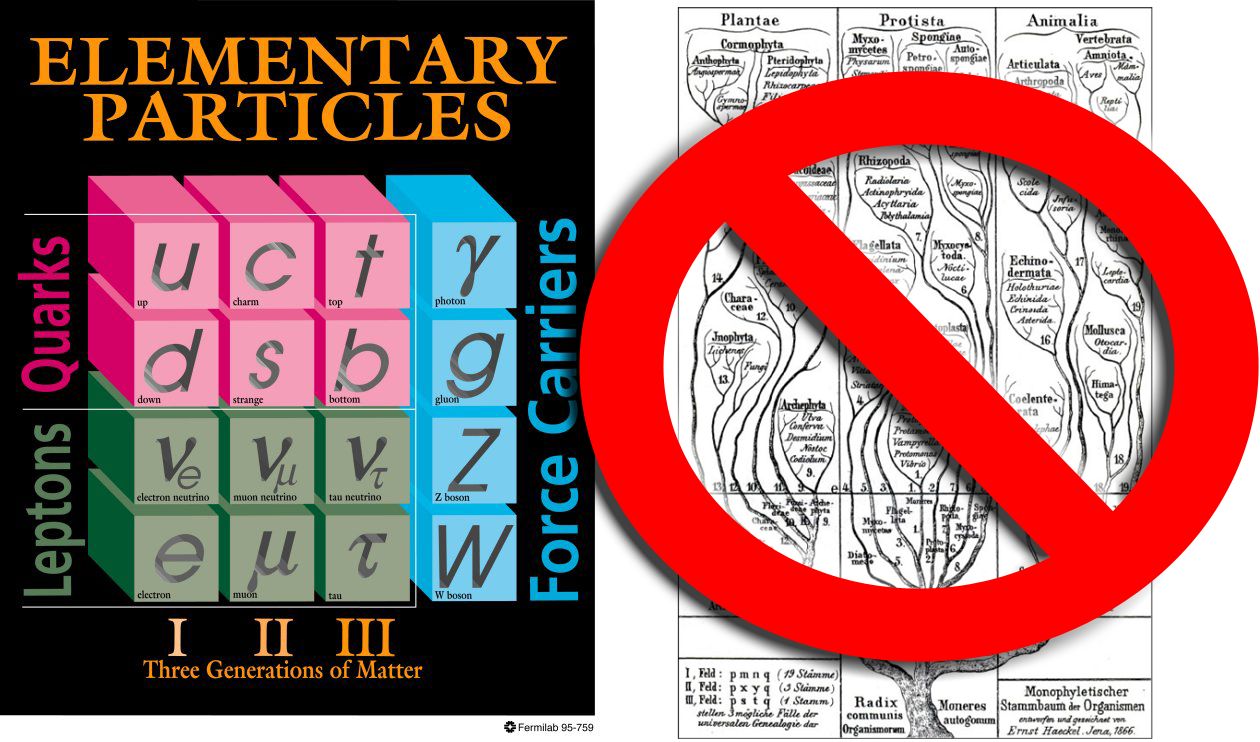

You are made of atoms, and atoms are made of protons, neutrons, and electrons. Let’s consider a proton. A proton’s mass, expressed in the esoteric units physicists favor, is 938 Mega-electron-Volts. That’s how much energy a proton has alone and at rest. A proton is made of three quarks, so you’d think that they would contribute most of its mass. In reality, though, the quarks in protons have masses of only a few Mega-electron-Volts. Most of a proton’s mass doesn’t come from the mass of the quarks.

Quarks interact with each other via the strong nuclear force, the strongest fundamental force in existence. That interaction has a lot of energy, and when viewed from a distance that energy contributes almost all of the proton’s mass. So if light is “made of energy”, so are you.
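Here is a rough back-of-the-envelope version of that claim. The quark masses below are approximate values that I’m assuming for illustration (quark masses depend on the conventions used to define them), and I’m taking the proton to be two up quarks and one down quark. Treat the output as an order-of-magnitude estimate, not a precise result.

```python
# All masses in Mega-electron-Volts (MeV).
PROTON_MASS_MEV = 938.27  # proton rest energy
UP_QUARK_MEV = 2.2        # approximate, assumed for illustration
DOWN_QUARK_MEV = 4.7      # approximate, assumed for illustration

# A proton contains two up quarks and one down quark.
quark_mass_total = 2 * UP_QUARK_MEV + DOWN_QUARK_MEV

# Fraction of the proton's mass accounted for by the quark masses,
# and the remainder, which comes from the energy of their interactions.
quark_fraction = quark_mass_total / PROTON_MASS_MEV
interaction_fraction = 1.0 - quark_fraction

print(f"quark masses: {quark_mass_total:.1f} MeV ({quark_fraction:.1%} of the proton)")
print(f"everything else: {interaction_fraction:.1%}")
```

With these numbers the quark masses add up to about one percent of the proton’s mass, and the rest is interaction energy.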

So why do people say that matter and antimatter annihilate to make energy?

A matter particle and its antimatter partner are opposite in a lot of ways. In particular, they have opposite charges: not just electric charge, but other types of charge too.

Charge must be conserved, so if a particle collides with its antiparticle the result has a total charge of zero, as the opposite charges of the two cancel each other out. Light has zero charge, so it’s one of the most common results of a matter-antimatter collision. When people say that matter and antimatter produce “pure energy”, they really just mean that they produce light.

So next time someone says something is “made of energy”, be wary. Chances are, they aren’t talking about something fully scientific.