Let me tell you a secret.

Scattering amplitudes in N=4 super Yang-Mills don’t actually make sense.

Scattering amplitudes calculate the probability that particles “scatter”: coming in from far away, interacting in some fashion, and producing new particles that travel far away in turn. N=4 super Yang-Mills is my favorite theory to work with: a highly symmetric version of the theory that describes the strong nuclear force. In particular, N=4 super Yang-Mills has conformal symmetry: if you re-scale everything larger or smaller, you should end up with the same predictions.

You might already see the contradiction here: scattering amplitudes talk about particles coming in from very far away…but due to conformal symmetry, “far away” doesn’t mean anything, since we can always re-scale it until it’s not far away anymore!

So when I say that I study scattering amplitudes in N=4 super Yang-Mills, am I lying?

Well…yes. But it’s a useful type of lie.

There’s a concept in science writing called “lies to children”, first popularized in a fantasy novel.

This one.

When you explain science to the public, it’s almost always impossible to explain everything accurately. So much background is needed to really understand most of modern science that conveying even a fraction of it would bore the average audience to tears. Instead, you need to simplify, to skip steps, and even (to be honest) to lie.

The important thing to realize here is that “lies to children” aren’t meant to mislead. Rather, they’re chosen in such a way that they give roughly the right impression, even as they leave important details out. When they told you in school that energy is always conserved, that was a lie: energy conservation is a consequence of symmetry in time, and when that symmetry is broken energy doesn’t have to be conserved. But “energy is conserved” is a useful enough rule to let you understand most of everyday life.

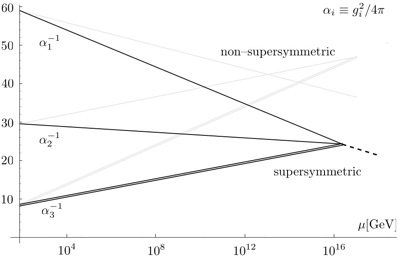

In this case, the “lie” that we’re calculating scattering amplitudes is fairly close to the truth. We’re using the same methods that people use to calculate scattering amplitudes in theories where they do make sense, like QCD. For a while, people thought these scattering amplitudes would have to be zero, since anything else “wouldn’t make sense”…but in practice, we found they were remarkably similar to scattering amplitudes in other theories. Now, we have more rigorous definitions for what we’re calculating that avoid this problem, involving objects called polygonal Wilson loops.

This illustrates another principle, one that hasn’t (yet) been popularized by a fantasy novel. I’d like to call it the “amplitudes are weird” principle. Time and again we amplitudes-folks will do a calculation that doesn’t really make sense, find unexpected structure, and go back to figure out what that structure actually means. It’s been one of the defining traits of the field, and we’ve got a pretty good track record with it.

A couple of weeks back, Lance Dixon gave an interview for the SLAC website, talking about his work on quantum gravity. This was immediately jumped on by Peter Woit and Lubos Motl as ammo for the long-simmering string wars. To one extent or another, both tried to read scientific arguments into the piece. This is in general a mistake: it is in the nature of a popularization piece to contain some volume of lies-to-children, and reading a piece aimed at a lower audience can be just as confusing as reading one aimed at a higher audience.

In the remainder of this post, I’ll try to explain what Lance was talking about in a slightly higher-level way. There will still be lies-to-children involved; this is a popularization blog, after all. But I should be able to clear up a few misunderstandings. Lubos probably still won’t agree with the resulting argument, but it isn’t the self-evidently wrong one he seems to think it is.

Lance Dixon has done a lot of work on quantum gravity. Those of you who’ve read my old posts might remember that quantum gravity is not so difficult in principle: general relativity naturally leads you to particles called gravitons, which can be treated just like other particles. The catch is that the theory that you get by doing this fails to be predictive: one reason why is that you get an infinite number of erroneous infinite results, which have to be papered over with an infinite number of arbitrary constants.

Working with these non-predictive theories, however, can still yield interesting results. In the article, Lance mentions the work of Bern, Carrasco, and Johansson. BCJ (as they are abbreviated) have found that calculating a gravity amplitude often just amounts to calculating a (much easier to find) Yang-Mills amplitude, and then squaring the right parts. This was originally found in the context of string theory by another three-letter group, Kawai, Lewellen, and Tye (or KLT). In string theory, it’s particularly easy to see how this works, as it’s a basic feature of how string theory represents gravity. However, the string theory relations don’t tell the whole story: in particular, they only show that this squaring procedure makes sense on a classical level. Once quantum corrections come in, there’s no known reason why this squaring trick should continue to work in non-string theories, and yet so far it has. It would be great if we had a good argument why this trick should continue to work, a proof based on string theory or otherwise: for one, it would allow us to be much more confident that our hard work trying to apply this trick will pay off! But at the moment, this falls solidly under the “amplitudes are weird” principle.

Using this trick, BCJ and collaborators (frequently including Lance Dixon) have been calculating amplitudes in N=8 supergravity, a highly symmetric version of those naive, non-predictive gravity theories. For this particular theory, the theory you “square” for the above trick is N=4 super Yang-Mills. N=4 super Yang-Mills is special for a number of reasons, but one is that the sorts of infinite results that lose you predictive power in most other quantum field theories never come up. Remarkably, the same appears to be true of N=8 supergravity. We’re still not sure: the relevant calculation is still a bit beyond what we’re capable of. But in example after example, N=8 supergravity seems to be behaving similarly to N=4 super Yang-Mills, and not like people would have predicted from its gravitational nature. Once again, amplitudes are weird, in a way that string theory helped us discover but by no means conclusively predicted.

If N=8 supergravity doesn’t lose predictive power in this way, does that mean it could describe our world?

In a word, no. I’m not claiming that, and Lance isn’t claiming that. N=8 supergravity simply doesn’t have the right sorts of freedom to give you something like the real world, no matter how you twist it. You need a broader toolset (string theory generally) to get something realistic. The reason why we’re interested in N=8 supergravity is not because it’s a candidate for a real-world theory of quantum gravity. Rather, it’s because it tells us something about where the sorts of dangerous infinities that appear in quantum gravity theories really come from.

That’s what’s going on in the more recent paper that Lance mentioned. There, they’re not working with a supersymmetric theory, but with the naive theory you’d get from just trying to do quantum gravity based on Einstein’s equations. What they found was that the infinity you get is in a certain sense arbitrary. You can’t get rid of it, but you can shift it around (infinity times some adjustable constant 😉 ) by changing the theory in ways that aren’t physically meaningful. What this suggests is that, in a sense that hadn’t been previously appreciated, the infinite results naive gravity theories give you are arbitrary.

The inevitable question, though, is why would anyone muck around with this sort of thing when they could just use string theory? String theory never has any of these extra infinities: that’s one of its most important selling points. If we already have a perfectly good theory of quantum gravity, why mess with wrong ones?

Here, Lance’s answer dips into lies-to-children territory. In particular, Lance brings up the landscape problem: the fact that there are 10^500 configurations of string theory that might loosely resemble our world, and no clear way to sift through them to make predictions about the one we actually live in.

This is a real problem, but I wouldn’t think of it as the primary motivation here. Rather, it gets at a story people have heard before while giving the feeling of a broader issue: that string theory feels excessive.


Think of string theory like an enormous piece of fabric, and quantum gravity like a dress. You can definitely wrap that fabric around, pin it in the right places, and get a dress. You can in fact get any number of dresses, elaborate trains and frilly togas and all sorts of things. You have to do something with the extra material, though, find some tricky but not impossible stitching that keeps it out of the way, and you have a fair number of choices of how to do this.

From this perspective, naive quantum gravity theories are things that don’t qualify as dresses at all, scarves and socks and so forth. You can try stretching them, but it’s going to be pretty obvious you’re not really wearing a dress.

What we amplitudes-folks are looking for is more like a pencil skirt. We’re trying to figure out the minimal theory that covers the divergences, the minimal dress that preserves modesty. It would be a dress that fits the form underneath it, so we need to understand that form: the infinities that quantum gravity “wants” to give rise to, and what it takes to cancel them out. A pencil skirt is still inconvenient: it’s hard to sit down in one, for example, a problem that can be solved by adding extra material that allows it to bend more. Similarly, fixing these infinities is unlikely to be the full story: there are things called non-perturbative effects that probably won’t be cured. But finding the minimal pencil skirt is still going to tell us something that just pinning a vast stretch of fabric wouldn’t.

This is where “amplitudes are weird” comes in in full force. We’ve observed, repeatedly, that amplitudes in gravity theories have unexpected properties, traits that still aren’t straightforwardly explicable from the perspective of string theory. In our line of work, that’s usually a sign that we’re on the right track. If you’re a fan of the amplituhedron, the project here is along very similar lines: both are taking the results of plodding, not especially deep loop-by-loop calculations, observing novel simplifications, and asking the inevitable question: what does this mean?

That far-term perspective, looking off into the distance at possible insights about space and time, isn’t my style. (It isn’t usually Lance’s either.) But for the times that you want to tell that kind of story…well, this isn’t that outlandish of a story to tell. And unless your primary concern is whether a piece gives succor to the Woits of the world, it shouldn’t be an objectionable one.