So suppose you want to argue that, contrary to appearances, the universe isn’t impossible, and you want to use anthropic reasoning to do it. Suppose further that you read my post last week, so you know what anthropic reasoning is. In case you haven’t, anthropic reasoning means recognizing that, while it may be unlikely that the location/planet/solar system/universe you’re in is a nice place for you to live, as long as there is at least one nice place to live you will almost certainly find yourself living there. Applying this to the universe as a whole requires there to be many additional universes, making up a multiverse, at least one of which is a nice place for human life.

Is there actually a multiverse, though? How would that even work?

One of the more plausible proposals for a multiverse is the concept of eternal inflation.

Eternal inflation is an idea with many variants (such as chaotic inflation), and rather than give the details of any particular variant, I want to describe the setup in the broadest strokes possible.

The first thing to be aware of is that the universe is expanding, and has been since the Big Bang. Counter-intuitively, this doesn’t mean that the universe was once small, and is now bigger: in all likelihood, the universe was always infinite in size. Instead, it means that things began packed in close together, and have since moved further apart. While various forces (gravity, electromagnetism) hold things together on short scales, the wide open spaces between galaxies are constantly widening, spreading out the map of the universe.
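The "spreading out the map" picture has a standard compact form. This is textbook cosmology, not anything specific to this post. Give each distant galaxy a fixed position $x$ on the map, and multiply it by a scale factor $a(t)$ that grows with time. The distance to that galaxy, and how fast that distance grows, are then

$$
d(t) = a(t)\,x,
\qquad
\dot d(t) = \dot a(t)\,x = \frac{\dot a(t)}{a(t)}\,d(t) \equiv H(t)\,d(t).
$$

So the farther away a galaxy is, the faster it recedes, in proportion to its distance (Hubble's law). Systems held together by gravity or electromagnetism, like galaxies and solar systems, don't grow along with $a(t)$, which is the short-scale exception mentioned above.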

You would expect this process to slow down over time. While it might have started with a burst of energy (the aforementioned Big Bang), as the universe gets more and more spread out it should be running out of steam. The thing is, it's not. The evidence (complicated enough that I'm not going to go into it now) shows that the universe actually sped up dramatically shortly after the Big Bang, and seems to be speeding up again now. The early, dramatic speed-up is what cosmologists call inflation. The present-day speed-up is usually attributed to dark energy.

So what could make the universe speed up? You might have heard of Einstein's cosmological constant, a constant added to Einstein's equations of general relativity. It was originally intended to make the universe stay in a steady state forever, but it can also be chosen so as to speed up the universe's expansion. While that works mathematically, it's not really an explanation, especially since the rate of speed-up seems to change with time, and a constant, by definition, doesn't.
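Here's the standard idealized case showing why a cosmological constant speeds expansion up. It is an assumption for illustration, not a model of our actual universe: a flat universe containing nothing but a cosmological constant $\Lambda$, in units where the speed of light is 1. The Friedmann equation for the expansion rate $H = \dot a / a$ then reduces to

$$
H^2 = \left(\frac{\dot a}{a}\right)^2 = \frac{\Lambda}{3}
\quad\Longrightarrow\quad
a(t) = a(0)\, e^{\sqrt{\Lambda/3}\, t},
\qquad
\ddot a = \frac{\Lambda}{3}\, a > 0.
$$

A positive $\Lambda$ gives exponential, accelerating growth. Because $\Lambda$ is fixed, though, so is $H$: this toy universe speeds up at the same relative rate forever, with no dramatic early phase followed by a gentler one.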

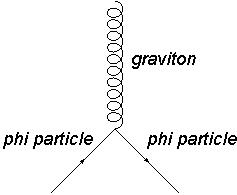

Enter scalar fields. A scalar field is what you get when you let what looks like a constant of nature vary as a quantum field. Scalar fields can vary over space, and they can change over time, making them ideal candidates for explaining inflation. And as a quantum field, the scalar field behind inflation (often called the inflaton) should randomly fluctuate, giving rise to the occasional particle just like the Higgs (another scalar field) does.

Well, not just like the Higgs. See, the Higgs controls mass, and if the mass of some particles increases a bit in a tiny area, it’s weird, but it’s not going to spread. On the other hand, if space in some place is inflating faster than space in another place…

Suppose you have two empty blocks in the middle of intergalactic space, each a cube one foot on each side, with one inflating faster than the other. Twice as fast, let's say, so that when one cube grows to two feet on a side, the other grows to four feet on a side. Then when the first cube is four feet on a side, the other will be sixteen. When the first has eight-foot sides, the other's will be sixty-four. And so forth. Even a small difference in expansion rates quickly leads to one region dominating the other. And if inflation stops slightly later in one region than in another, that can be a pretty dramatic difference too.
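The cube arithmetic can be checked with a small Python sketch. It assumes (a simplification, not something stated above) that each cube's side grows exponentially at a constant rate, side(t) = side0 · e^(rate · t), which is how a region with a fixed expansion rate behaves. The function names `side_length` and `side_ratio` are made up for illustration. With one rate double the other, the fast cube's side is always the square of the slow cube's side, which is where the 2 → 4, 4 → 16, 8 → 64 pattern comes from.

```python
import math


# Toy model (an assumption): a cube whose side grows exponentially
# at a constant rate, as a region with a fixed expansion rate would.
def side_length(side0: float, rate: float, t: float) -> float:
    """Side length at time t of a cube expanding at a constant exponential rate."""
    return side0 * math.exp(rate * t)


def side_ratio(rate_fast: float, rate_slow: float, t: float) -> float:
    """How many times wider the faster cube is than the slower one at time t."""
    return side_length(1.0, rate_fast, t) / side_length(1.0, rate_slow, t)


# The example from the text: both cubes start at 1 ft, one inflates twice as fast.
slow_rate, fast_rate = 1.0, 2.0
for target in (2, 4, 8):
    # Time at which the slow cube reaches `target` feet on a side.
    t = math.log(target) / slow_rate
    slow = side_length(1.0, slow_rate, t)
    fast = side_length(1.0, fast_rate, t)
    print(f"slow cube: {slow:.0f} ft, fast cube: {fast:.0f} ft")

# Rates only 10% apart: the ratio is e^(0.1 t), which grows without limit.
for t in (10, 50, 100):
    print(f"t={t}: faster cube is {side_ratio(1.1, 1.0, t):.1f}x wider")
```

Even with the small 10% difference, the side ratio keeps growing exponentially, and the volume ratio (the cube of the side ratio) grows faster still.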

The end result is that if inflation were driven by this sort of scalar field, the universe would just keep expanding forever, faster and faster. Only small pockets would slow down enough that anything could actually stick together. So while most of the universe would just tear itself apart forever, some of it, the parts that tear themselves apart slowly, could contain atoms and stars and, well, life. A universe like that is experiencing eternal inflation. It's eternal because inflation as a whole never stops. What looks to us like the Big Bang, the beginning of our universe, is really just the point at which our part of the universe started expanding slowly enough that anything we recognize as matter could exist.

There’s no reason for us to be the only bubble that slowed down, though, and that’s where the multiverse aspect comes in. In eternal inflation there are lots and lots of slow regions, each one like a mini-universe in its own right. What’s more, each region can have totally different constants of nature.

To understand how that works, remember that each region has a different rate of inflation, and thus a different value for the inflaton scalar field. It turns out that many types of scalar fields like to interact with each other. If you recall my post on scalar fields (already linked, not gonna link it again), you'll remember that for everything that looks like a constant of nature, chances are there's a scalar field that controls it. So different values of the inflaton mean different values for all of those scalar fields too, which means different physical constants. With so many (possibly infinitely many) regions with different physical constants, there's bound to be one where we could live.

Now, before you get excited here, there are a few caveats. Well, a lot of caveats.

First, it’s all well and good if the multiverse can produce life, but what if it produces dramatically different life? What sort of life is eternal inflation most likely to produce, and what are the chances it would look at all like us? For that matter, how do you figure out the chances of anything in an infinite, eternally expanding universe? This last is a very difficult problem, and work on it is ongoing.

Beyond that, we don’t even know enough about inflation to know whether eternal inflation would happen or not. We’ve got a pretty good idea that inflation involves scalar fields, but how many and in what combination? We don’t know yet, and the evidence is still coming in. We’re right on the cutting edge of things now, and until we know more it’s tough to say for certain whether any of this is viable. Still, it’s fun to think about.

Pi is particularly interesting in that you cannot write an algebra equation with whole-number coefficients whose solution is pi. While you can easily get fractions (for example, $2x = 1$ gives $x = \tfrac{1}{2}$) and even many irrational numbers (for example, $x^2 = 2$ gives $x = \sqrt{2}$), pi is one of a set of numbers that it is impossible to get this way. These special numbers transcend other numbers, in that you cannot use more everyday numbers to get to them, and as such mathematicians call them transcendental numbers.
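For reference, here is the standard definition behind this paragraph, where "an algebra equation made up of whole numbers" means a polynomial equation with integer coefficients:

$$
\alpha \text{ is algebraic} \iff a_n\alpha^n + a_{n-1}\alpha^{n-1} + \dots + a_1\alpha + a_0 = 0
\ \text{ for some } n \ge 1,\ a_0,\dots,a_n \in \mathbb{Z},\ a_n \neq 0.
$$

A number that is not algebraic is transcendental. Requiring $a_n \neq 0$ rules out the trivial equation $0 = 0$, which every number satisfies. Ferdinand von Lindemann proved that $\pi$ is transcendental in 1882.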

… to find … have transcendental weight three. And so on.

In some cases, it hasn't even been proven that the number in question is actually transcendental at all! However, physicists still use the concept of transcendental weight because it lets us classify and manipulate a common and useful set of functions. This is an example of the differences in methods and standards between physicists and mathematicians, even when they are working on similar things.