Why did physicists expect to see something new at the LHC, more than just the Higgs boson? Mostly, because of something called naturalness.

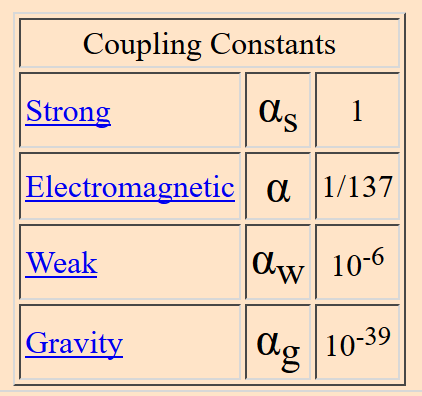

Naturalness, broadly speaking, is the idea that there shouldn’t be coincidences in physics. If two numbers that appear in your theory cancel out almost perfectly, there should be a reason that they cancel. Put another way, if your theory has a dimensionless constant in it, that constant should be close to one.

(To see why these two concepts are the same, think about a theory where two large numbers miraculously almost cancel, leaving just a small difference. Take the ratio of one of those large numbers to the difference, and you get a very large dimensionless number.)
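As a purely illustrative bit of arithmetic (the numbers $A$ and $B$ below are made up for the example, not taken from any physical theory), suppose the two large numbers differ by one part in a million:

$$
A = 1\,000\,001, \qquad B = 1\,000\,000, \qquad A - B = 1, \qquad \frac{A}{A - B} = 1\,000\,001 \approx 10^{6}.
$$

The near-perfect cancellation and the very large dimensionless ratio are the same fact stated two ways.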

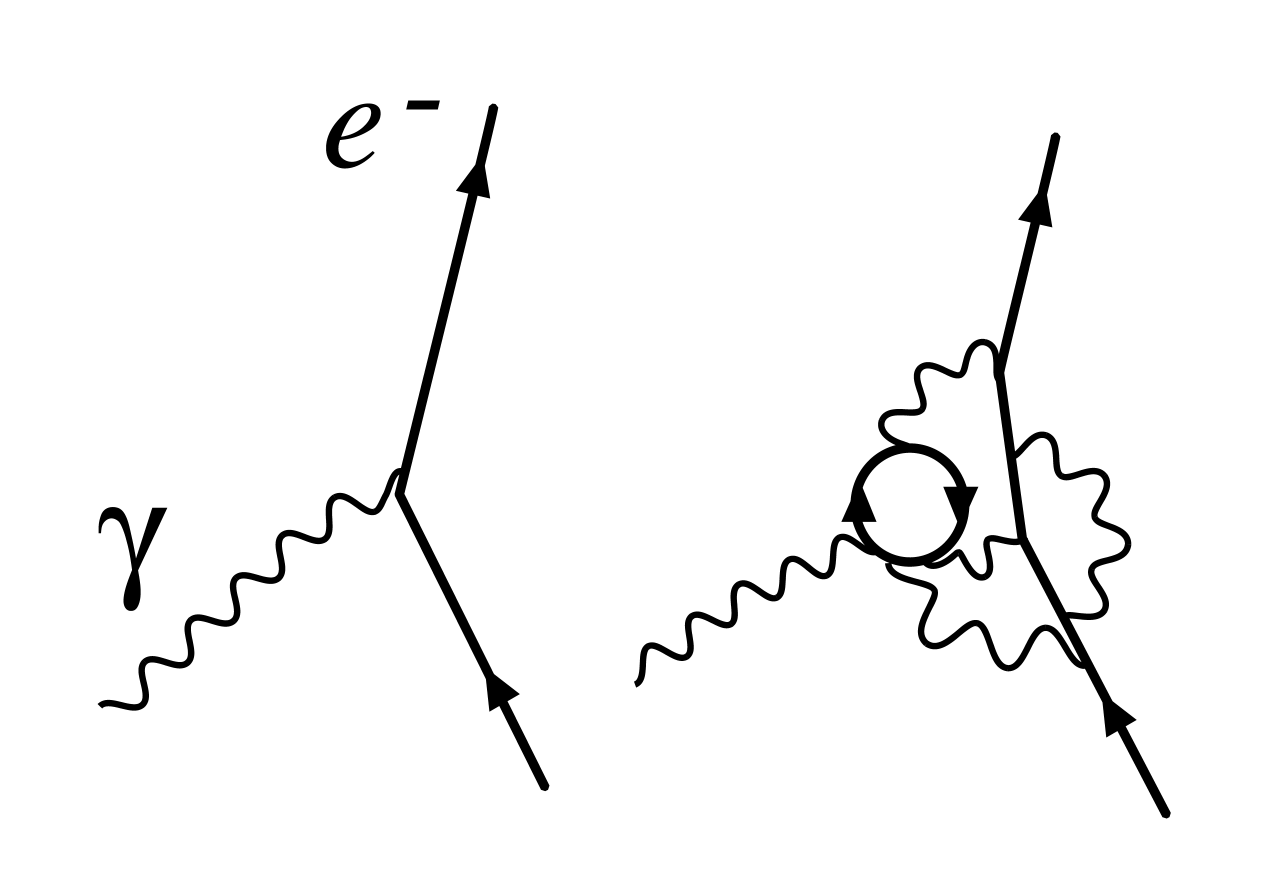

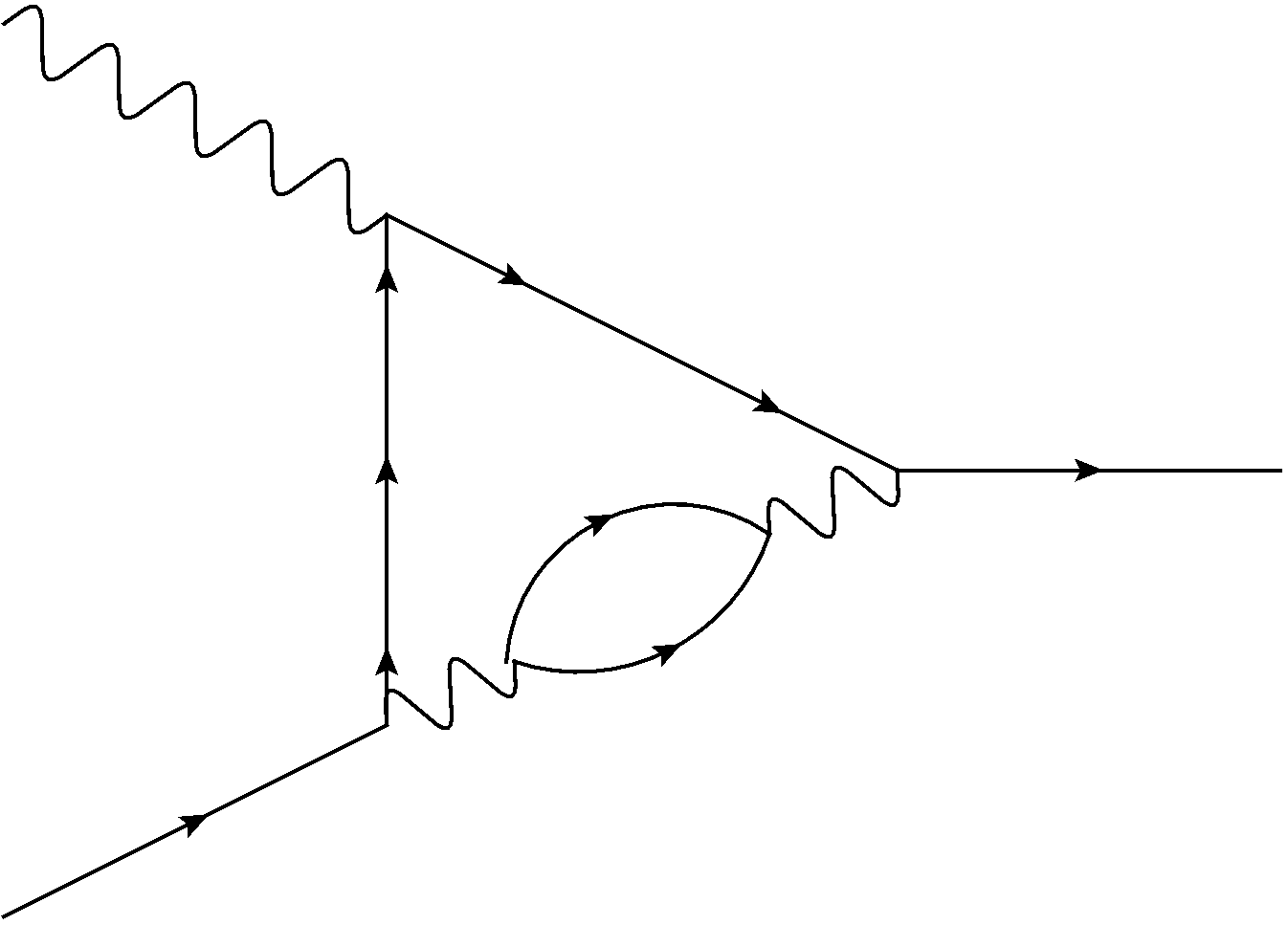

You might have heard it said that the mass of the Higgs boson is “unnatural”. There are many different physical processes that affect what we measure as the mass of the Higgs. We don’t know exactly how big these effects are, but we do know that they grow with the scale of “new physics” (aka the mass of any new particles we might discover), and that they have to cancel to give the Higgs mass we observe. If we don’t see any new particles, the Higgs mass starts looking more and more unnatural, driving some physicists to the idea of a “multiverse”.
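To get a rough feel for how this cancellation gets worse as the scale of new physics goes up, here is a minimal toy sketch. It assumes the observed mass squared is a "bare" term plus a correction that grows like the square of the new-physics scale. The coefficient `c`, the function names, and the quadratic form are all assumptions made for illustration; the real size and sign of the effect depend on which particles are involved.

```python
# Toy model (an assumption for illustration, not a real calculation):
#   m_obs^2 = bare_term + c * cutoff^2
# where `cutoff` stands in for the scale of new physics, in GeV.

def required_bare_term(m_obs_gev, cutoff_gev, c=0.01):
    """Return (bare_term, correction) needed to reproduce the observed mass."""
    correction = c * cutoff_gev ** 2
    bare_term = m_obs_gev ** 2 - correction
    return bare_term, correction

def tuning_ratio(m_obs_gev, cutoff_gev, c=0.01):
    """Size of the correction relative to the observed mass squared.

    Values far above one mean two much larger terms must nearly cancel.
    """
    return c * cutoff_gev ** 2 / m_obs_gev ** 2

if __name__ == "__main__":
    higgs_mass_gev = 125.0  # approximate observed Higgs mass
    for cutoff in (1e3, 1e4, 1e10, 1e16):
        ratio = tuning_ratio(higgs_mass_gev, cutoff)
        print(f"cutoff = {cutoff:.0e} GeV, tuning ratio = {ratio:.2e}")
```

In this toy, a scale near 1 TeV needs no special cancellation, while pushing the scale far higher means the bare term and the correction have to agree to many decimal places. That is the sense in which the mass "starts looking more and more unnatural".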

If you find parts of this argument hokey, you’re not alone. Critics of naturalness point out that we don’t really have a good reason to favor “numbers close to one”, nor do we have any way to quantify how “bad” a number far from one is (we don’t know the probability distribution, in other words). They critique theories that do preserve naturalness, like supersymmetry, for being increasingly complicated and unwieldy, violating Occam’s razor. And in some cases they act baffled by the assumption that there should be any “new physics” at all.

Some of these criticisms are reasonable, but some are distracting and off the mark. The problem is that the popular argument for naturalness leaves out some important assumptions. These assumptions are usually kept in mind by the people arguing for naturalness (at least the more careful people), but aren’t often made explicit. I’d like to state some of these assumptions. I’ll be framing the naturalness argument in a bit of an unusual (if not unprecedented) way. My goal is to show that some criticisms of naturalness don’t really work, while others still make sense.

I’d like to state the naturalness argument as follows:

1. The universe should be ultimately described by a theory with no free dimensionless parameters at all. (For the experts: the theory should also be UV-finite.)

2. We are reasonably familiar with theories of the sort described in 1., we know roughly what they can look like.

3. If we look at such a theory at low energies, it will appear to have dimensionless parameters again, based on the energy where we “cut off” our description. We understand this process well enough to know what kinds of values these parameters can take, starting from 2.

4. Point 3. can only be consistent with the observed mass of the Higgs if there is some “new physics” at around the scales the LHC can measure. That is, there is no known way to start with a theory like those of 2. and get the observed Higgs mass without new particles.

Point 1. is often not explicitly stated. It’s an assumption, one that sits in the back of a lot of physicists’ minds and guides their reasoning. I’m really not sure I can fully justify it; it just seems like it should be a consequence of what a final theory is.

(For the experts: you’re probably wondering why I’m insisting on a theory with no free parameters, when usually this argument just demands UV-finiteness. I demand this here because I think this is the core reason why we worry about coincidences: free parameters of any intermediate theory must eventually be explained in a theory where those parameters are fixed, and “unnatural” coincidences are those we don’t expect to be able to fix in this way.)

Point 2. may sound like a stretch, but it’s less of one than you might think. We do know of a number of theories that have few or no dimensionless parameters (and that are UV-finite); they just don’t describe the real world. Treating these theories as toy models, we can hopefully get some idea of how theories like this should look. We also have a candidate theory of this kind that could potentially describe the real world, M theory, but it’s not fleshed out enough to answer these kinds of questions definitively at this point. At best it’s another source of toy models.

Point 3. is where most of the technical arguments show up. If someone talking about naturalness starts talking about effective field theory and the renormalization group, they’re probably hashing out the details of point 3. Parts of this point are quite solid, but once again there are some assumptions that go into it, and I don’t think we can say that this point is entirely certain.

Once you’ve accepted the arguments behind points 1.-3., point 4. follows. The Higgs is unnatural, and you end up expecting new physics.

Framed in this way, arguments about the probability distribution of parameters are missing the point, as are arguments from Occam’s razor.

The point is not that the Standard Model has unlikely parameters, or that some in-between theory has unlikely parameters. The point is that there is no known way to start with the kind of theory that could be an ultimate description of the universe and end up with something like the observed Higgs and no detectable new physics. Such a theory isn’t merely unlikely: if you take this argument seriously, it’s impossible. If your theory gets around this argument, it can be as cumbersome and Occam’s razor-violating as it wants; it’s still a better shot than no possible theory at all.

In general, the smarter critics of naturalness are aware of this kind of argument, and don’t just talk probabilities. Instead, they reject some combination of point 2. and point 3.

This is more reasonable, because point 2. and point 3. are, on some level, arguments from ignorance. We don’t know of a theory with no dimensionless parameters that can give something like the Higgs with no detectable new physics, but maybe we’re just not trying hard enough. Given how murky our understanding of M theory is, maybe we just don’t know enough to make this kind of argument yet, and the whole thing is premature. This is where probability can sneak back in, not as some sort of probability distribution on the parameters of physics but just as an estimate of our own ability to come up with new theories. We have to guess what kinds of theories can make sense, and we may well just not know enough to make that guess.

One thing I’d like to know is how many critics of naturalness reject point 1. Because point 1. isn’t usually stated explicitly, it isn’t often responded to explicitly either. The way some critics of naturalness talk makes me suspect that they reject point 1., that they honestly believe that the final theory might simply have some unexplained dimensionless numbers in it that we can only fix through measurement. I’m curious whether they actually think this, or whether I’m misreading them.

There’s a general point to be made here about framing. Suppose that tomorrow someone figures out a way to start with a theory with no dimensionless parameters and plausibly end up with a theory that describes our world, matching all existing experiments. (People have certainly been trying.) Does this mean naturalness was never a problem after all? Or does that mean that this person solved the naturalness problem?

Those sound like very different statements, but it should be clear at this point that they’re not. In principle, nothing distinguishes them. In practice, people will probably frame the result one way or another based on how interesting the solution is.

If it turns out we were missing something obvious, or if we were extremely premature in our argument, then in some sense naturalness was never a real problem. But if we were missing something subtle, something deep that teaches us something important about the world, then it should be fair to describe it as a real solution to a real problem, to cite “solving naturalness” as one of the advantages of the new theory.

If you ask for my opinion? You probably shouldn’t, I’m quite far from an expert in this corner of physics, not being a phenomenologist. But if you insist on asking anyway, I suspect there probably is something wrong with the naturalness argument. That said, I expect that whatever we’re missing, it will be something subtle and interesting, that naturalness is a real problem that needs to really be solved.