No, they haven’t, and no, that’s not what they found, and no, that doesn’t make sense.

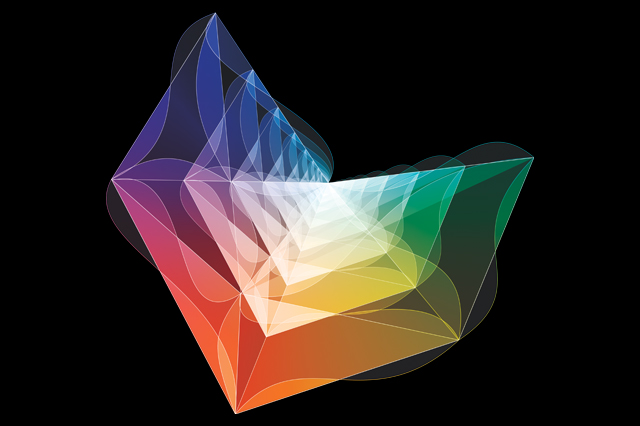

Quantum field theory is how we understand particle physics. Each fundamental particle comes from a quantum field, a law of nature in its own right, extending across space and time. That’s why it’s so momentous when we detect a fundamental particle like the Higgs for the first time, and why it’s not just like discovering a new species of plant.

That’s not the only thing quantum field theory is used for, though. Quantum field theory is also enormously important in condensed matter and solid state physics, the study of properties of materials.

When studying materials, you generally don’t want to start with fundamental particles. Instead, you usually want to think about overall properties: the ways the whole material can move and change. If you want to understand the quantum properties of these changes, you end up describing them the same way particle physicists describe fundamental fields: you use quantum field theory.

In particle physics, particles come from vibrations in fields. In condensed matter, your fields are general properties of the material, but they can also vibrate, and these vibrations give rise to quasiparticles.
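To make “vibrations give rise to quasiparticles” a bit more concrete, here’s a minimal toy sketch. It assumes the simplest possible “material”: a ring of identical masses joined by identical springs, not the crystal from the article. Each normal mode is a collective vibration of the whole chain with a definite wavevector and frequency, and quantizing those modes gives phonons, the textbook quasiparticle. The function names and parameters (`chain_frequencies`, `phonon_dispersion`, `k_spring`, `mass`, `spacing`) are my own illustrative choices, and the code only checks the classical mode frequencies against the standard formula `omega(q) = 2 sqrt(K/m) |sin(q a / 2)|`.

```python
import numpy as np

def chain_frequencies(n_masses: int, k_spring: float = 1.0, mass: float = 1.0) -> np.ndarray:
    """Normal-mode angular frequencies of a ring of identical masses joined by springs."""
    # Equation of motion with periodic boundaries:
    # m * x_i'' = -K * (2 x_i - x_{i-1} - x_{i+1})
    d = np.zeros((n_masses, n_masses))
    for i in range(n_masses):
        d[i, i] = 2.0
        d[i, (i - 1) % n_masses] -= 1.0
        d[i, (i + 1) % n_masses] -= 1.0
    # Eigenvalues of (K/m) * d are the squared frequencies omega^2.
    omega_squared = np.linalg.eigvalsh(d * k_spring / mass)
    # Clip tiny negative round-off before taking the square root.
    return np.sqrt(np.clip(omega_squared, 0.0, None))

def phonon_dispersion(n_masses: int, k_spring: float = 1.0, mass: float = 1.0,
                      spacing: float = 1.0) -> np.ndarray:
    """Analytic omega(q) = 2 sqrt(K/m) |sin(q a / 2)| at the wavevectors the ring allows."""
    q = 2 * np.pi * np.arange(n_masses) / (n_masses * spacing)
    return 2 * np.sqrt(k_spring / mass) * np.abs(np.sin(q * spacing / 2))

numeric = np.sort(chain_frequencies(8))
analytic = np.sort(phonon_dispersion(8))
print(np.allclose(numeric, analytic))
```

No individual mass travels anywhere, yet each mode has a wavevector and a frequency, which is exactly the kind of thing quantum field theory then treats as a particle.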

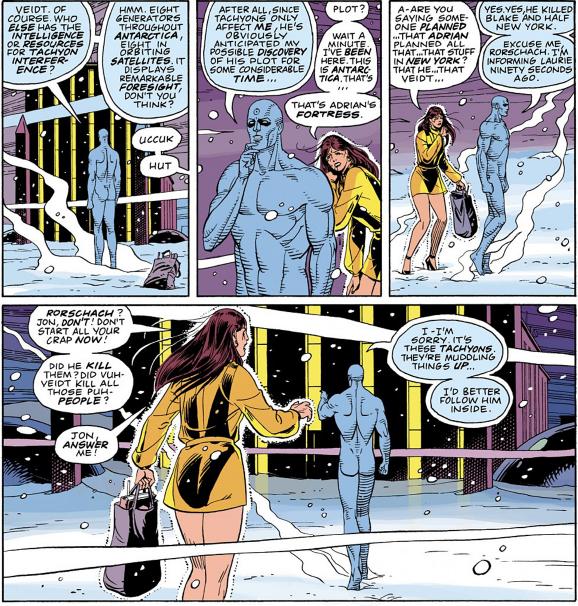

Probably the simplest examples of quasiparticles are the “holes” in semiconductors. Semiconductors are materials used to make transistors. They can be “doped” with extra slots for electrons. Electrons in the semiconductor will move around from slot to slot. When an electron moves, though, you can just as easily think of it as a “hole”, an empty slot, “moving” backwards. As it turns out, thinking about electrons and holes independently makes understanding semiconductors a lot easier, and the same applies to other types of quasiparticles in other materials.
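Here’s a toy sketch of that electron/hole bookkeeping. It assumes a one-dimensional row of slots with exactly one empty slot, where the electron just to the right of the gap hops one slot left into it. It ignores everything real about semiconductors (bands, electric fields, temperature); `hop_toward_hole` is a hypothetical helper just for illustration.

```python
def hop_toward_hole(slots: list[str]) -> list[str]:
    """Move the electron just right of the hole one slot to the left, into the hole.

    slots holds "e" for an occupied slot and "_" for the single empty slot (the hole).
    """
    hole = slots.index("_")
    if hole + 1 >= len(slots):
        return slots  # no electron to the right of the hole, so nothing can hop
    new = slots.copy()
    new[hole], new[hole + 1] = "e", "_"
    return new

row = ["e", "_", "e", "e", "e"]
for step in range(4):
    print("".join(row), "hole at", row.index("_"))
    row = hop_toward_hole(row)
```

At every step a *different* electron moves one slot to the left, but if you only track the empty slot you see a single object marching steadily to the right. That object is the hole.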

Unfortunately, the article I linked above is pretty impressively terrible, and communicates precisely none of that.

The problem starts in the headline:

> Scientists have finally discovered massless particles, and they could revolutionise electronics

Scientists have finally discovered massless particles, eh? So we haven’t seen any massless particles before? You can’t think of even one?

> After 85 years of searching, researchers have confirmed the existence of a massless particle called the Weyl fermion for the first time ever. With the unique ability to behave as both matter and anti-matter inside a crystal, this strange particle can create electrons that have no mass.

Ah, so it’s a massless fermion, I see. Well indeed, there are no known fundamental massless fermions, not since we discovered neutrinos have mass anyway. The statement that these things “create electrons” of any sort is utter nonsense, however, let alone that they create electrons that themselves have no mass.

> Electrons are the backbone of today’s electronics, and while they carry charge pretty well, they also have the tendency to bounce into each other and scatter, losing energy and producing heat. But back in 1929, a German physicist called Hermann Weyl theorised that a massless fermion must exist, that could carry charge far more efficiently than regular electrons.

Ok, no. Just no.

The problem here is that this particular journalist doesn’t understand the difference between pure theory and phenomenology. Weyl didn’t theorize that a massless fermion “must exist”, nor did he say anything about its ability to carry charge. Weyl described, mathematically, how a massless fermion could behave. Weyl fermions aren’t some proposed new fundamental particle, like the Higgs boson: they’re a general type of particle. For a while, people thought that neutrinos were Weyl fermions, before it was discovered that they had mass. What we’re seeing here isn’t some ultimate experimental vindication of Weyl; it’s just an old mathematical structure that’s been duplicated in a new material.
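For the curious, here’s roughly what “described, mathematically, how a massless fermion could behave” amounts to in its simplest form. A Weyl fermion’s Hamiltonian is, up to a sign set by its handedness, a velocity times the Pauli matrices dotted into the momentum: H = ±v σ·p. The sketch below assumes NumPy and a placeholder velocity `v` (the speed of light for a fundamental particle; in a crystal it would instead be a property of the material). It checks that the two energies are +v|p| and -v|p|: energy proportional to momentum, with no gap at zero momentum, which is what “massless” means here. The names `weyl_hamiltonian` and `weyl_energies` are my own.

```python
import numpy as np

# Pauli matrices sigma_x, sigma_y, sigma_z stacked along the first axis.
SIGMA = np.array([
    [[0, 1], [1, 0]],
    [[0, -1j], [1j, 0]],
    [[1, 0], [0, -1]],
], dtype=complex)

def weyl_hamiltonian(p, velocity: float = 1.0, chirality: int = +1) -> np.ndarray:
    """2x2 Weyl Hamiltonian H = chirality * velocity * (sigma . p)."""
    p = np.asarray(p, dtype=float)
    # Contract the momentum components with the three Pauli matrices.
    return chirality * velocity * np.einsum("i,ijk->jk", p, SIGMA)

def weyl_energies(p, velocity: float = 1.0, chirality: int = +1) -> np.ndarray:
    """The two energy levels (ascending) of the Weyl Hamiltonian at momentum p."""
    return np.linalg.eigvalsh(weyl_hamiltonian(p, velocity, chirality))

p = np.array([0.3, -0.4, 1.2])
print(weyl_energies(p))   # approximately -1.3 and +1.3
print(np.linalg.norm(p))  # |p| = 1.3
```

The `chirality` argument only swaps which band is which; both handednesses give the same pair of energies, ±v|p|.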

> What’s particularly cool about the discovery is that the researchers found the Weyl fermion in a synthetic crystal in the lab, unlike most other particle discoveries, such as the famous Higgs boson, which are only observed in the aftermath of particle collisions. This means that the research is easily reproducible, and scientists will be able to immediately begin figuring out how to use the Weyl fermion in electronics.

Arrgh!

Fundamental particles from particle physics, like the Higgs boson, and quasiparticles, like this particular Weyl fermion, are completely different things! Comparing them like this, as if this is some new efficient trick that could have been used to discover the Higgs, just needlessly confuses people.

> Weyl fermions are what’s known as quasiparticles, which means they can only exist in a solid such as a crystal, and not as standalone particles. But further research will help scientists work out just how useful they could be. “The physics of the Weyl fermion are so strange, there could be many things that arise from this particle that we’re just not capable of imagining now,” said Hasan.

In the very last paragraph, the author finally mentions quasiparticles. There’s no mention of the fact that they’re more like waves in the material than like fundamental particles, though. This description makes it sound like they’re just particles that happen to chill inside crystals, like they’re agoraphobic or something.

What the scientists involved here actually discovered is probably quite interesting. They’ve found a new sort of ripple in the material they studied. The ripple can carry charge, and because it can behave like a massless particle, it can carry charge much faster than electrons can. (To get a basic idea of how this works, think about waves in the ocean. You can have a wave that moves much faster than the ocean’s current. As the wave travels, no actual water molecules travel from one side to the other. Instead, it is the motion that travels, with the energy pushing the water up and down being passed along.)
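One way to make “behaves like a massless particle” concrete is the group velocity dE/dp, the speed at which a wave packet actually moves. The sketch below assumes NumPy, units where a characteristic velocity `V` is 1, and an arbitrary illustrative `MASS` of 0.5. It compares a massless dispersion E = V|p| with a massive one E = sqrt((Vp)^2 + (M V^2)^2). It’s a cartoon of the dispersion only, not a model of the material in the article or of how charge transport works in it; all names here are my own.

```python
import numpy as np

V = 1.0     # characteristic velocity (placeholder units)
MASS = 0.5  # illustrative mass for the comparison curve

def group_velocity(energy, p, dp: float = 1e-6):
    """Central-difference estimate of dE/dp, the speed at which a wave packet moves."""
    return (energy(p + dp) - energy(p - dp)) / (2 * dp)

def massless(p):
    """Linear dispersion: energy proportional to |momentum|."""
    return V * np.abs(p)

def massive(p):
    """Relativistic dispersion with a nonzero mass term."""
    return np.sqrt((V * p) ** 2 + (MASS * V**2) ** 2)

momenta = np.array([0.05, 0.2, 1.0, 5.0])
print(group_velocity(massless, momenta))  # V at every momentum
print(group_velocity(massive, momenta))   # always below V, slowest at small momentum
```

The massless ripple moves at V whatever its momentum, while the massive one crawls at low momentum and only approaches V at high momentum.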

There’s no reason to compare this to particle physics, to make it sound like another Higgs boson. This sort of thing dilutes the excitement of actual particle discoveries, perpetuating the misconception of particles as just more species to find and catalog. Furthermore, it’s just completely unnecessary: condensed matter is a very exciting field, one that the majority of physicists work on. It doesn’t need to ride on the coat-tails of particle physics rhetoric in order to capture people’s attention. I’ve seen journalists do this kind of thing before, comparing new quasiparticles and composite particles with fundamental particles like the Higgs, and every time I cringe. Don’t you have any respect for the subject you’re writing about?