I’ve talked before about supersymmetry. Supersymmetry relates particles with different spins, linking spin 1 force-carrying particles like photons and gluons to spin 1/2 particles similar to electrons, and spin 1/2 particles in turn to spin 0 “scalar” particles, the same general type as the Higgs. In that post, I emphasized that if two particles are related by supersymmetry, they will have some important traits in common: the same mass and the same interactions.

That’s true for the theories I like to work with. In particular, it’s true for N=4 super Yang-Mills. Adding supersymmetry allows us to tinker with neater, cleaner theories, gaining mastery over rice before we start experimenting with the more intricate “sushi” of theories of the real world.

However, it should be pretty clear that we don’t live in a world with this sort of supersymmetry. A quick look at the Standard Model indicates that no two known particles interact in precisely the same way. When people try to test supersymmetry in the real world, they’re not looking for this sort of thing. Rather, they’re looking for broken supersymmetry.

In the past, I’ve described broken supersymmetry as like a broken mirror: the two sides are no longer the same, but you can still predict one side’s behavior from the other. When supersymmetry is broken, related particles still have the same interactions. Now, though, they can have different masses.

The simplest version of supersymmetry, N=1, gives one partner to each particle. Since no two particles in the Standard Model can be partners of each other, if we have broken N=1 supersymmetry in the real world then we need a new particle for each existing one…and each one of those particles has a different, potentially unknown mass. And if that sounds rather complicated…

Baroque enough to make Rubens happy.

That, right there, is the Minimal Supersymmetric Standard Model, the simplest thing you can propose if you want a world with broken supersymmetry. If you look carefully, you’ll notice that it’s actually a bit more complicated than just one partner for each known particle: there are a few extra Higgs fields as well!
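For bookkeeping, here is a minimal Python sketch of the one-partner-per-particle pairing, using the conventional naming scheme: the spin 0 partners of matter fermions get an “s” prefix (squark, slepton), and the spin 1/2 partners of bosons get an “-ino” suffix (gluino, higgsino). It is a simplification under stated assumptions: fields are listed before electroweak symmetry breaking (so the W and B bosons appear instead of the photon and Z), generations and colors are ignored, and the MSSM’s extra Higgs fields are not tracked separately. The table `MSSM_PARTNERS` and the helper `spin_gap` are hypothetical names chosen for illustration.

```python
from fractions import Fraction

# (Standard Model field, its spin, conventional superpartner name, partner spin)
# Spins are in units of hbar. Simplified: no generations, colors, or second Higgs.
MSSM_PARTNERS = [
    ("quark",   Fraction(1, 2), "squark",   Fraction(0)),
    ("lepton",  Fraction(1, 2), "slepton",  Fraction(0)),
    ("gluon",   Fraction(1),    "gluino",   Fraction(1, 2)),
    ("W boson", Fraction(1),    "wino",     Fraction(1, 2)),
    ("B boson", Fraction(1),    "bino",     Fraction(1, 2)),
    ("Higgs",   Fraction(0),    "higgsino", Fraction(1, 2)),
]


def spin_gap(entry):
    """Return |spin(partner) - spin(SM field)|; N=1 supersymmetry shifts spin by 1/2."""
    _, spin, _, partner_spin = entry
    return abs(partner_spin - spin)


# Print each pairing, e.g. a spin 1/2 quark paired with a spin 0 squark.
for sm, spin, partner, pspin in MSSM_PARTNERS:
    print(f"{sm:8} (spin {spin}) <-> {partner:8} (spin {pspin})")
```

Every entry differs from its partner by exactly half a unit of spin, which is the sense in which each known particle needs its own new partner.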

If we’re hoping to explain anything in a simpler way, we seem to have royally screwed up. Luckily, though, the situation is not quite as ridiculous as it appears. Let’s go back to the mirror analogy.

If you look into a broken mirror, you can still have a pretty good idea of what you’ll see…but in order to do so, you have to know how the mirror is broken.

Similarly, supersymmetry can be broken in different ways, by different supersymmetry-breaking mechanisms.

The general idea is to start with a theory in which supersymmetry is precisely true, and all supersymmetric partners have the same mass. Then, consider some Higgs-like field. Like the Higgs, it can take some constant value throughout all of space, forming a background like the color of a piece of construction paper. While the rules that govern this field would respect supersymmetry, any specific value it takes wouldn’t. Instead, it would be biased: the spin 0, Higgs-like field could take on a constant value, but its spin 1/2 supersymmetric partner couldn’t. (If you want to know why, read my post on the Higgs linked above.)

Once that field takes on a specific value, supersymmetry is broken. That breaking then has to be communicated to the rest of the theory, via interactions between different particles. There are several different ways this can work: perhaps the interactions come from gravity, or are the same strength as gravity. Maybe instead they come from a new fundamental force, similar to the strong nuclear force but harder to discover. They could even come as byproducts of the breaking of other symmetries.

Each one of these options has different consequences, and leads to different predictions for the masses of undiscovered partner particles. They tend to have different numbers of extra parameters (for example, if gravity-based interactions are involved there are four new parameters, and an extra sign, that must be fixed). None of them needs an entire Standard Model’s worth of new parameters…but all of them have at least a few extra.
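Assuming the gravity-based case refers to the standard minimal supergravity setup (mSUGRA, also called the CMSSM), the “four new parameters and an extra sign” are a common scalar mass m0, a common gaugino mass m1/2, a common trilinear coupling A0, the ratio tan β of the two Higgs vacuum expectation values, and the sign of the Higgsino mass parameter μ. The Python sketch below just records one such parameter point; the class `MinimalSugraPoint` and the helper `parameter_count` are hypothetical names, and the example numbers are arbitrary, not a fitted benchmark.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class MinimalSugraPoint:
    """One parameter point of minimal supergravity (mSUGRA / CMSSM).

    Four continuous parameters plus one sign stand in for the much larger set
    of independent supersymmetry-breaking parameters of the general MSSM.
    """
    m0: float        # common scalar (squark, slepton, Higgs) mass at the high scale, GeV
    m_half: float    # common gaugino mass m_1/2 at the high scale, GeV
    a0: float        # common trilinear coupling A_0, GeV
    tan_beta: float  # ratio of the two Higgs vacuum expectation values
    sign_mu: int     # sign of the Higgsino mass parameter mu: +1 or -1

    def __post_init__(self):
        # Only validate; the frozen instance is never modified here.
        if self.sign_mu not in (1, -1):
            raise ValueError("sign_mu must be +1 or -1")
        if self.tan_beta <= 0:
            raise ValueError("tan_beta must be positive")


def parameter_count(cls=MinimalSugraPoint):
    """Return (number of continuous parameters, number of discrete signs)."""
    continuous = sum(1 for f in fields(cls) if f.type is float)
    signs = sum(1 for f in fields(cls) if f.type is int)
    return continuous, signs


# Arbitrary illustrative point, not a physical benchmark.
point = MinimalSugraPoint(m0=1000.0, m_half=800.0, a0=0.0, tan_beta=10.0, sign_mu=1)
print(point, parameter_count())
```

Fixing these five values is meant to determine the whole spectrum of partner masses, which is why a specific breaking mechanism is far more predictive than the general MSSM.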

(Brief aside: I’ve been talking about the Minimal Supersymmetric Standard Model, but these days people have largely given up on finding evidence for it, and are exploring even more complicated setups like the Next-to-Minimal Supersymmetric Standard Model.)

If we’re introducing extra parameters without explaining existing ones, what’s the point of supersymmetry?

Last week, I talked about the problem of fine-tuning. I explained that when physicists are worried about fine-tuning, what we’re really worried about is whether the sorts of “ultimate” theories we expect to hold (theories with only a few parameters) could give rise to the apparently fine-tuned world we live in. In that post, I was a little misleading about supersymmetry’s role in that problem.

The goal of introducing (broken) supersymmetry is to solve a particular set of fine-tuning problems, mostly one specific one involving the Higgs. This doesn’t mean that supersymmetry is the sort of “ultimate” theory we’re looking for; rather, supersymmetry is one of the few ways we know to bridge the gap between “ultimate” theories and a fine-tuned real world.

In the language of the last post, it’s hard to find one of these “ultimate” theories that gives rise to a fine-tuned world. What’s quite a bit easier, though, is finding one of these “ultimate” theories that gives rise to a supersymmetric world, which in turn gives rise to a fine-tuned real world.

In practice, these are the sorts of theories that get tested. Very rarely are people able to propose testable versions of the more “ultimate” theories. Instead, one generally finds intermediate theories, theories that can potentially come from “ultimate” theories, and builds general versions of those that can be tested.

These intermediate theories come in multiple levels. Some physicists look for the most general version, theories like the Minimal Supersymmetric Standard Model with a whole host of new parameters. Others look for more specific versions, choosing particular supersymmetry-breaking mechanisms. Still others try to go further up the chain, getting close to candidate “ultimate” theories like M theory (though in practice they generally make a few choices that put them somewhere in between).

The hope is that with a lot of people covering different angles, we’ll be able to make the best use of any new evidence that comes in. If “something” is out there, there are still a lot of choices for what that something could be, and it’s the job of physicists to try to understand whatever ends up being found.

Not bad for working in a broken world, huh?

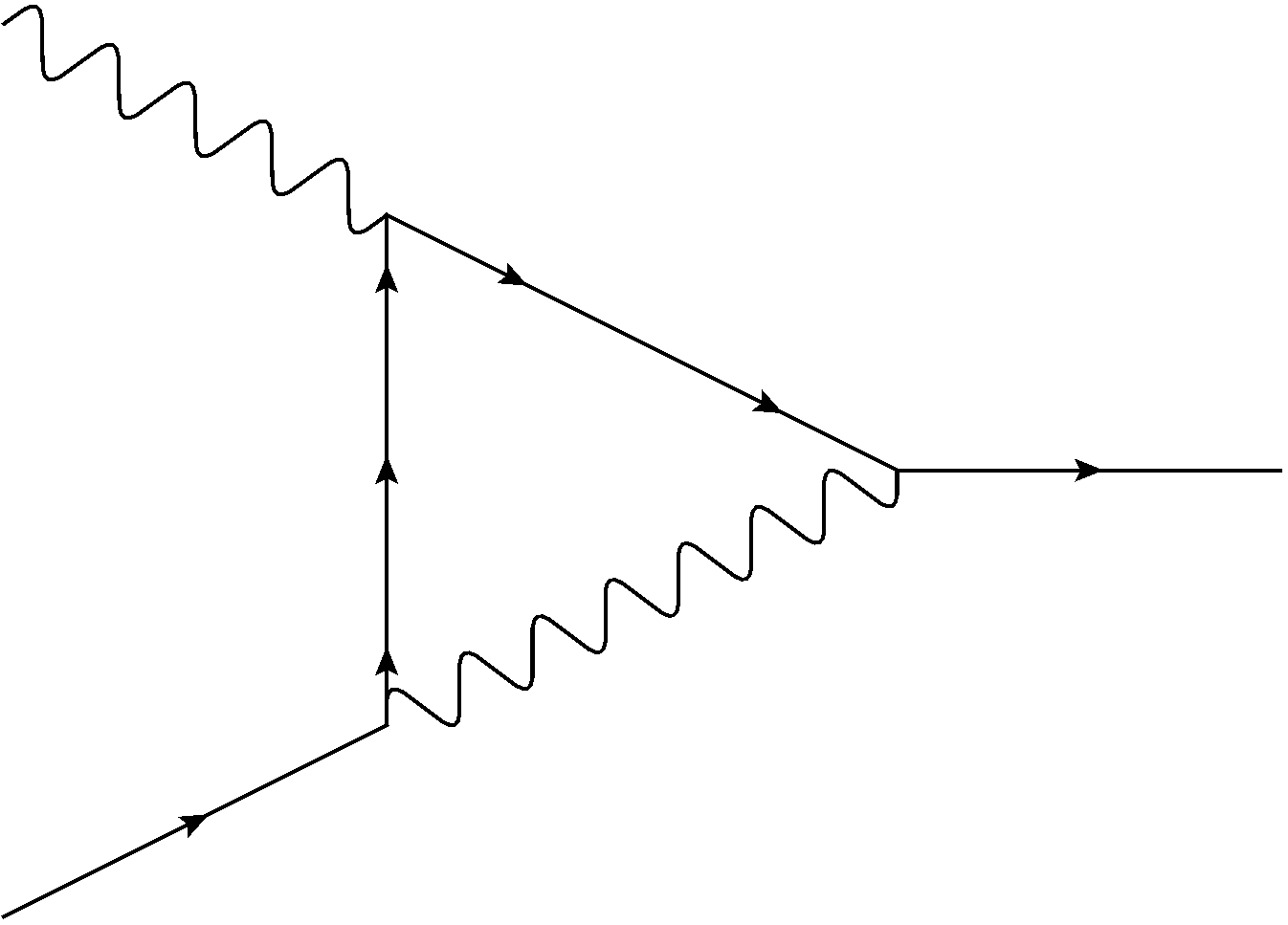

Einstein’s famous equation, E=mc², relates a particle’s mass to its “rest energy”, the energy it would have if it stopped moving around and sat still. Even when a particle seems to be sitting still from the outside, though, there’s still a lot going on. “Composite” particles like protons have powerful forces between their internal quarks, while particles like electrons interact with the Higgs field. These processes give the particle energy even when it’s not moving, so from our perspective on the outside they’re giving the particle mass.
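As a rough illustration of how much of a composite particle’s mass comes from internal energy rather than from its constituents, the Python sketch below applies E=mc² to the proton and compares its mass with the summed masses of its three valence quarks (up, up, down). The quark masses are approximate and depend on how they are defined, so only the order of magnitude matters: they account for roughly 1% of the proton’s mass, and the rest comes from the energy of the quarks and gluons interacting inside it. The constant and function names are hypothetical, chosen for illustration.

```python
# Approximate masses in MeV/c^2. Light-quark masses are scheme-dependent
# "current" masses, so treat them as rough order-of-magnitude values.
PROTON_MASS_MEV = 938.272
UP_QUARK_MASS_MEV = 2.16
DOWN_QUARK_MASS_MEV = 4.67

PROTON_MASS_KG = 1.67262192e-27   # proton mass in kilograms (CODATA, rounded)
C = 299_792_458.0                 # speed of light in m/s (exact by SI definition)
MEV_TO_JOULE = 1.602176634e-13    # 1 MeV in joules (exact, from the SI elementary charge)


def rest_energy_joules(mass_kg: float) -> float:
    """Rest energy E = m c^2 of a particle that is sitting still."""
    return mass_kg * C**2


def valence_quark_fraction() -> float:
    """Fraction of the proton's rest energy covered by its valence quarks' masses (uud)."""
    quark_sum_mev = 2 * UP_QUARK_MASS_MEV + DOWN_QUARK_MASS_MEV
    return quark_sum_mev / PROTON_MASS_MEV


proton_rest_mev = rest_energy_joules(PROTON_MASS_KG) / MEV_TO_JOULE
print(f"proton rest energy ~ {proton_rest_mev:.1f} MeV")
print(f"valence quark masses ~ {100 * valence_quark_fraction():.1f}% of that")
```

Converting the proton’s mass in kilograms through E=mc² recovers its mass in MeV, and the small quark fraction shows that nearly all of that rest energy is the “lot going on” inside the proton rather than the masses of the quarks themselves.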