Light always moves at the speed of light.

It’s not alone in this: anything that lacks mass moves at the speed of light. Gluons, if they weren’t constantly interacting with each other, would move at the speed of light. Neutrinos, back when we thought they were massless, were thought to move at the speed of light. Gravitational waves, and by extension gravitons, move at the speed of light.

This is, on the face of it, a weird thing to say. If I say a jet moves at the speed of sound, I don’t mean that it always moves at the speed of sound. Find it in its hangar and hopefully it won’t be moving at all.

And so, people occasionally ask me, why can’t we find light in its hangar? Why does light never stand still? What makes light move?

(For the record, you can make light “stand still” in a material, but that’s because the material is absorbing and reflecting it, so it’s not the “same” light traveling through. Compare the speed of a wave of hands in a stadium versus the speed you could run past the seats.)

This is surprisingly tricky to explain without math. Some people point out that if you want to see light at rest you need to speed up to catch it, but you can’t accelerate enough unless you too are massless. This probably sounds a bit circular. Some people talk about how, from light’s perspective, no time passes at all. This is true, but it seems to confuse more than it helps. Some people say that light is “made of energy”, but I don’t like that metaphor. Nothing is “made of energy”, nor is anything “made of mass” either. Mass and energy are properties things can have.

I do like game metaphors though. So, imagine that each particle (including photons, particles of light) is a character in an RPG.

You can think of energy as the particle’s “character points”. When the particle builds its character it gets a number of points determined by its energy. It can spend those points increasing its “stats”: mass and momentum, via the lesser-known big brother of $E = mc^2$,

$$E^2 = p^2c^2 + m^2c^4.$$

Maybe the particle chooses to play something heavy, like a Higgs boson. Then they spend a lot of points on mass, and don’t have as much to spend on momentum. If they picked something lighter, like an electron, they’d have more to spend, so they could go faster. And if they spent nothing at all on mass, like light does, they could use all of their energy “points” boosting their speed.

Now, it turns out that these “energy points” don’t boost speed one for one, which is why low-energy light isn’t any slower than high-energy light. Instead, speed is determined by the ratio between energy and momentum. When they’re proportional to each other, when $E = pc$, then a particle is moving at the speed of light.

(Why this is so is trickier to explain. You’ll have to trust me or Wikipedia that the math works out.)
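If you’d rather poke at the numbers than take it on trust, here is a rough sketch of the “character points” bookkeeping. It uses the standard relations $E^2 = p^2c^2 + m^2c^4$ and $v/c = pc/E$, in units where $c = 1$. The function name, the 200 GeV energy budget, and the rounded masses are my own illustrative choices, not anything official.

```python
import math


def speed_fraction(energy_gev: float, mass_gev: float) -> float:
    """Return v/c for a particle with total energy E and mass m (GeV, c = 1)."""
    if energy_gev <= 0 or energy_gev < mass_gev:
        raise ValueError("total energy must be positive and at least the mass")
    # Whatever energy is not "spent" on mass goes into momentum:
    # E^2 = p^2 + m^2  =>  p = sqrt(E^2 - m^2)
    momentum = math.sqrt(energy_gev**2 - mass_gev**2)
    # Speed is the ratio of momentum to energy: v/c = p/E
    return momentum / energy_gev


if __name__ == "__main__":
    budget = 200.0  # illustrative energy budget in GeV (an assumption)
    characters = {
        "Higgs boson (about 125 GeV)": 125.0,
        "electron (about 0.000511 GeV)": 0.000511,
        "photon (massless)": 0.0,
    }
    for name, mass in characters.items():
        print(f"{name}: v/c = {speed_fraction(budget, mass):.12f}")

    # A massless particle gets v/c = 1 no matter how small its budget is.
    print(speed_fraction(1e-9, 0.0))
```

The heavy character ends up noticeably slower than light, the electron lands a hair under it, and the massless one sits at exactly the speed of light whether its budget is large or tiny.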

Some of you may be happy with this explanation, but others will accuse me of passing the buck. Ok, a photon with any energy will move at the speed of light. But why do photons have any energy at all? And even if they must move at the speed of light, what determines which direction?

Here I think part of the problem is an old physics metaphor, probably dating back to Newton, of a pool table.

A pool table is a decent metaphor for classical physics. You have moving objects following predictable paths, colliding off each other and the walls of the table.

Where people go wrong is in projecting this metaphor back to the beginning of the game. At the beginning of a game of pool, the balls are at rest, racked in the center. Then one of them is hit with the pool cue, and they’re set into motion.

In physics, we don’t tend to have such neat and tidy starting conditions. In particular, things don’t have to start at rest before something whacks them into motion.

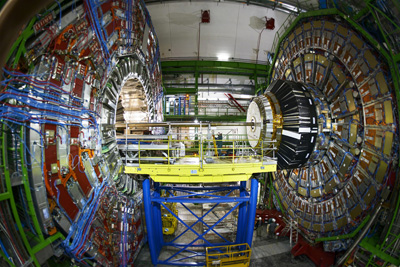

A photon’s “start” might come from an unstable Higgs boson produced by the LHC. The Higgs decays, and turns into two photons. Since energy and momentum are conserved, if the Higgs was at rest then these two each must have half of the energy of the original Higgs, including the energy that was “spent” on its mass, and they must fly off in opposite directions. This process is quantum mechanical, and with no preferred direction the photons will emerge in a random one.
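As a toy illustration of that decay, the sketch below picks a random direction and hands each photon half of the Higgs’s energy, working in the frame where the Higgs is at rest and in units where $c = 1$. The function name is hypothetical, the Higgs mass is rounded to roughly 125 GeV, and sampling a uniformly random direction is a simplifying assumption for a particle with no preferred axis. It is bookkeeping for conservation laws, not a simulation of the quantum process.

```python
import math
import random

HIGGS_MASS_GEV = 125.0  # approximate Higgs mass, rounded for illustration


def decay_to_two_photons(rng: random.Random):
    """Return two photon four-momenta (E, px, py, pz) in the Higgs rest frame."""
    energy = HIGGS_MASS_GEV / 2  # each photon carries half the energy
    # Sample a direction uniformly on the sphere.
    cos_theta = rng.uniform(-1.0, 1.0)
    sin_theta = math.sqrt(max(0.0, 1.0 - cos_theta**2))
    phi = rng.uniform(0.0, 2.0 * math.pi)
    direction = (sin_theta * math.cos(phi), sin_theta * math.sin(phi), cos_theta)
    # Massless particles have |p| = E, so momentum is energy times direction.
    first = (energy, *(energy * d for d in direction))
    # The second photon goes the opposite way so total momentum stays zero.
    second = (energy, *(-energy * d for d in direction))
    return first, second


if __name__ == "__main__":
    photon_a, photon_b = decay_to_two_photons(random.Random())
    print("photon A:", photon_a)
    print("photon B:", photon_b)
    print("total energy:", photon_a[0] + photon_b[0])
```

Neither photon is ever “at rest and then pushed”: each one is created already carrying energy and momentum, and conservation fixes everything except the shared random axis.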

Photons in the LHC may seem like an artificial example, but in general whenever light is produced it’s due to particles interacting, and conservation of energy and momentum will send the light off in one direction or another.

(For the experts, there is of course the possibility of very low energy soft photons, but that’s a story for another day.)

Not even the beginning of the universe resembles that racked set of billiard balls. The question of what “initial conditions” make sense for the whole universe is a tricky one, but there isn’t a way to set it up where you start with light at rest. It’s not just that it’s not the default option: it isn’t even an available option.

Light moves at the speed of light, no matter what. That isn’t because light started at rest, and something pushed it. It’s because light has energy, and a particle has to spend its “character points” on something.