This week I’ve been busy attending a workshop here at Perimeter on superstring perturbation theory.

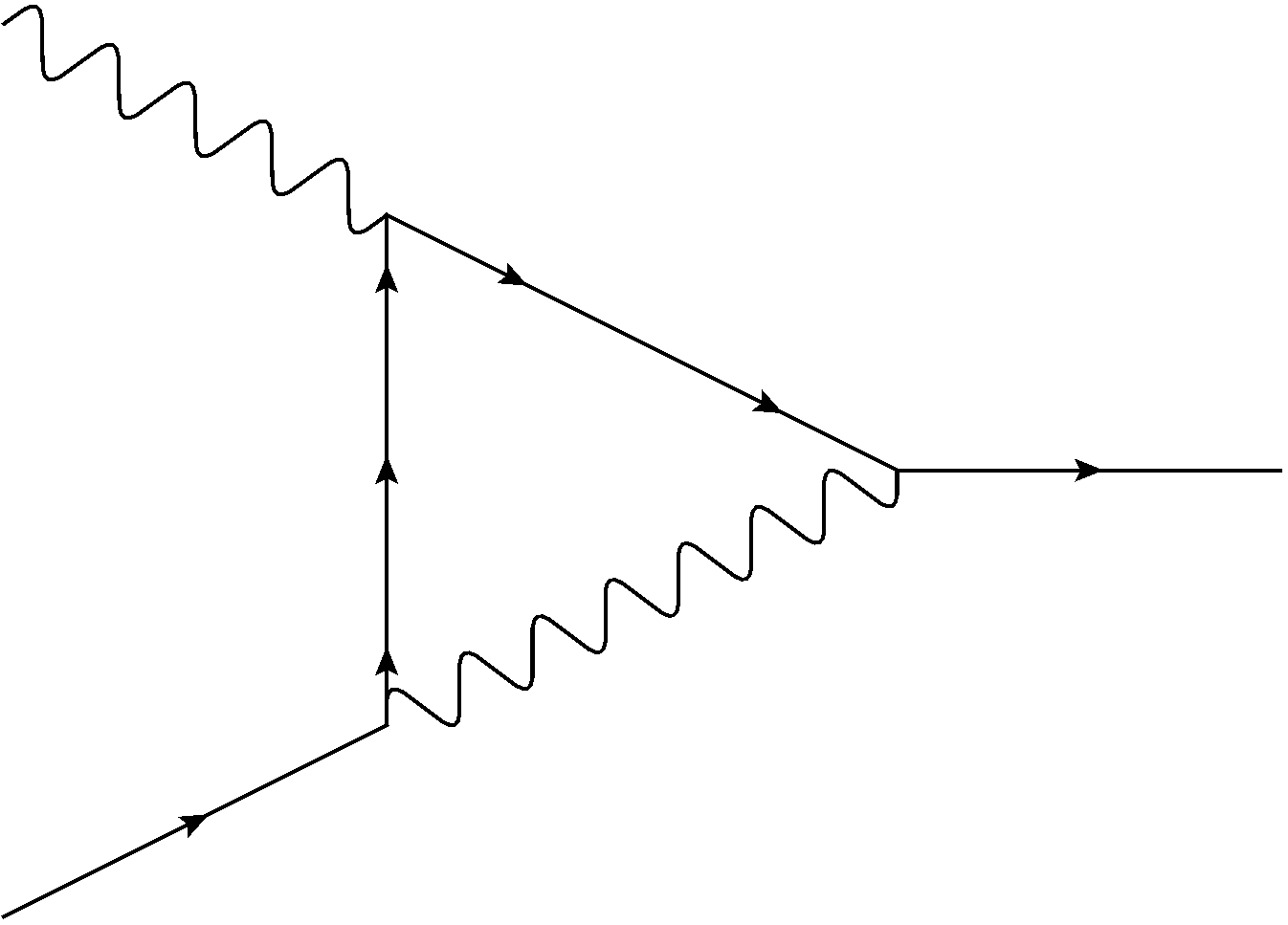

Superstrings are the supersymmetric strings that string theorists use to describe fundamental particles, while perturbation theory is the trick, common in almost every area of physics, of solving a problem by a series of increasingly precise approximations.

Based on that description, you’d think that superstring perturbation theory would be a central topic in string theory research. You wouldn’t expect it to be the sort of thing only a few people at the top of the field dabble in. You definitely wouldn’t expect one of the speakers at the workshop to mention that this might be the first conference on superstring perturbation theory he’s been to since the 1980s.

String perturbation theory is an important subject, but it’s not one many string theorists use. The reason is that, oddly enough, very few string theorists actually use strings.

Looking at arXiv as I’m writing this, I can see only one paper in the theoretical physics section that directly uses strings. Most of the papers use something else: either older concepts like black holes, quantum field theory, and supergravity, or newer ones like D-branes. If you talked to the people who wrote those papers, though, most of them would describe themselves as string theorists.

The reason for the disconnect is that string theory as a field is much more than just the study of strings. String theory is a ten-dimensional universe (or eleven with M-theory), where different ways of twisting up some of the dimensions result in different apparent physics in the remaining ones. It’s got strings, but also higher-dimensional membranes (and in the eleven dimensions of M-theory it only has membranes, not strings). It’s the recipe for a long list of exotic quantum field theories, and a list of possible relations between them. It’s a new way to look at geometry, to think about the intersection of the nature of space and the dynamics of what inhabits it.

If string theory were really just about strings, it likely wouldn’t have grown any bigger than its quantum gravity rivals, like Loop Quantum Gravity. String theory grew because it inspired research directions that went far afield, and far beyond its conceptual core.

That’s part of why most string theorists will be baffled if you insist that string theory needs proof, or that it’s not the right approach to quantum gravity. For most string theorists, it doesn’t matter whether we live in a stringy world, or whether gravity might eventually be described by another model. For most string theorists, string theory is a tool, one that opened up fields of inquiry that don’t have much to do with predicting the output of the LHC or describing the early universe. Or, in many cases, actually using strings.