Economists must find academics confusing.

When investors put money in a company, they have some control over what that company does. They vote to elect a board, and the board votes to hire a CEO. If the company isn’t doing what the investors want, the board can fire the CEO, or the investors can vote in a new board. Everybody is incentivized to do what the people who gave the money want to happen. And usually, those people want the company to increase its profits (since most of them are companies with their own investors).

Academics are paid by universities and research centers, funded in the aggregate by governments and student tuition and endowments from donors. But individually, they’re also often funded by grants.

What grant-givers want is more ambiguous. The money comes in big lumps from governments and private foundations, which generally want something vague like “scientific progress”. The actual decisions about who gets the money are made by committees of senior scientists. These people aren’t experts in every topic, so they have to extrapolate, much as investors have to guess whether a new company will be profitable based on past experience. At their best, they use their deep familiarity with scientific research to judge which projects are most likely to work, and which have the most interesting payoffs. At their weakest, though, they stick with ideas they’ve heard of, things they know work because they’ve seen them work before. That, in a nutshell, is why mainstream research prevails: not because the mainstream wants to suppress alternatives, but because sometimes the only way to guess if something will work is raw familiarity.

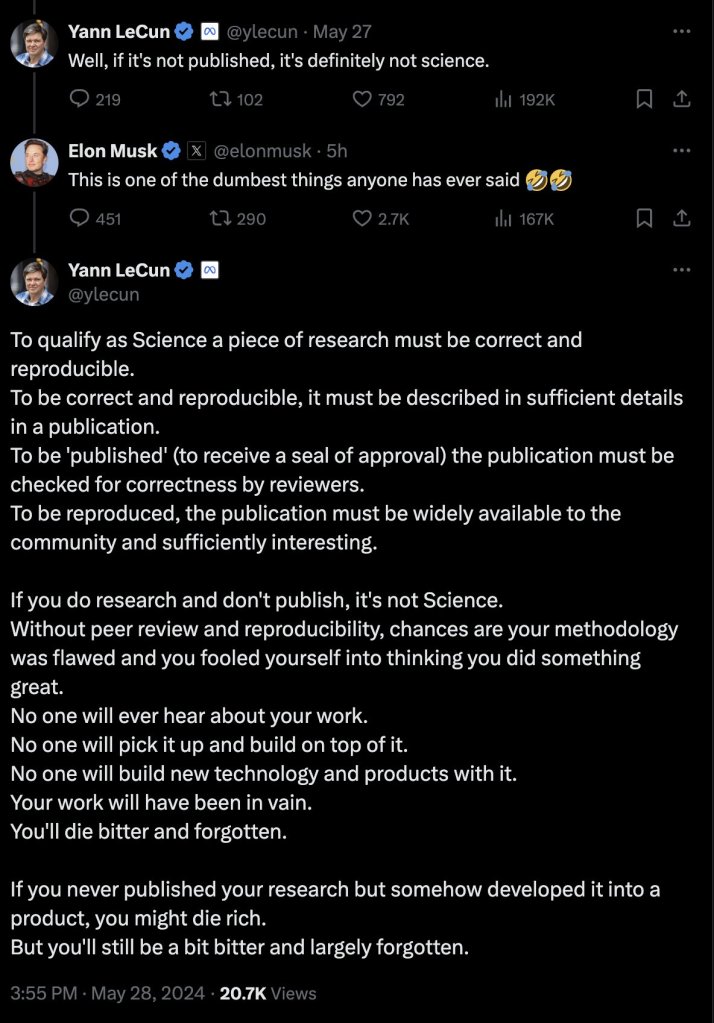

(What “works” means is another question. The cynical answers are “publishes papers” or “gets citations”, but that’s a bit unfair: in Europe and the US, most funders know that these numbers don’t tell the whole story. The trivial answer is “achieves what you said it would”, but that can’t be the whole story, because some goals are more pointless than others. You might want the answer to be “benefits humanity”, but that’s almost impossible to judge. So in the end the answer is “sounds like good science”, which is vulnerable to all the fads you can imagine…but is pretty much our only option, regardless.)

So are academics incentivized to do what the grant committees want? Sort of.

Science never goes according to plan. Grant committees are made up of scientists, so they know that. So while many grants have a review process afterwards to see whether you achieved what you planned, they aren’t all that picky about it. If you can tell a good story, you can explain why you moved away from your original proposal. You can say the original idea inspired a new direction, or that it became clear that a new approach was necessary. I’ve done this with an EU grant, and they were fine with it.

Looking at this, you might imagine that an academic who’s a half-capable storyteller could get away with anything they wanted. Propose a fashionable project, work on what you actually care about, and tell a good story afterwards to avoid getting in trouble. As long as you’re not literally embezzling the money (the guy who was paying himself rent out of his visitor funding, for instance), what could go wrong? You get the money without the incentives, you move the scientific world and nobody gets to move you.

It’s not quite that easy, though.

Sabine Hossenfelder told herself she could do something like this. She got grants for fashionable topics she thought were pointless, and told herself she’d spend time on the side on the things she felt were actually important. Eventually, she realized she wasn’t actually doing the important things: the faddish research ended up taking all her time. Unable to get grants for what she actually cared about, and stuck in one of those weird temporary European positions that only last until you run out of grants, she now has to make a living from her science popularization work.

I can’t speak for Hossenfelder, but I’ve also put some thought into how to choose what to research, about whether I could actually be an unmoved mover. A few things get in the way:

First, applying for grants doesn’t just take storytelling skills, it takes scientific knowledge. Grant committees aren’t experts in everything, but they usually send grants to be reviewed by much more appropriate experts. These experts will check if your grant makes sense. In order to make the grant make sense, you have to know enough about the faddish topic to propose something reasonable. You have to keep up with the fad. You have to spend time reading papers, and talking to people in the faddish subfield. This takes work, but also changes your motivation. If you spend time around people excited by an idea, you’ll either get excited too, or be too drained by the dissonance to get any work done.

Second, you can’t change things that much. You still need a plausible story as to how you got from where you are to where you are going.

Third, you need to be a plausible person to do the work. If the committee looks at your CV and sees that you’ve never actually worked on the faddish topic, they’re more likely to give a grant to someone who’s actually worked on it.

Fourth, you have to choose what to do when you hire people. If you never hire any postdocs or students working on the faddish topic, then it will be very obvious that you aren’t trying to research it. If you do hire them, then you’ll be surrounded by people who actually care about the fad, and want your help to understand how to work with it.

Ultimately, to avoid the grant committee’s incentives, you need a golden tongue and a heart of stone, and even then you’ll need to spend some time working on something you think is pointless.

Even if you don’t apply for grants, even if you have a real permanent position or even tenure, you still feel some of these pressures. You’re still surrounded by people who care about particular things, by students and postdocs who need grants and jobs and fellow professors who are confident the mainstream is the right path forward. It takes a lot of strength, and sometimes cruelty, to avoid bowing to that.

So despite the ambiguous rules and lack of oversight, academics still respond to incentives: they can’t just do whatever they feel like. They aren’t bound by shareholders, they aren’t expected to make a profit. But ultimately, the things that do constrain them, expertise and cognitive load, social pressure and compassion for those they mentor, those can be even stronger.

I suspect that those pressures dominate the private sector as well. My guess is that for all that companies think of themselves as trying to maximize profits, the all-too-human motivations we share are more powerful than any corporate governance structure or org chart. But I don’t know yet. Likely, I’ll find out soon.