When I moved back to Denmark, I mentioned that I was planning to do more science journalism work. The first fruit of that plan is up this week: I have a piece at Quanta Magazine about a perennially trendy topic in physics, the S-matrix.

It’s been great working with Quanta again. They’ve been thorough, attentive to the science, and patient with my still-uncertain life situation. I’m quite likely to have more pieces there in future, and I’ve got ideas cooking with other outlets as well, so stay tuned!

My piece with Quanta is relatively short, the kind of thing they used to label a “blog” rather than, say, a “feature”. Since the S-matrix is a pretty broad topic, there were a few things I couldn’t cover there, so I thought it would be nice to discuss them here. You can think of this as a kind of “bonus material” section for the piece. So before reading on, read my piece at Quanta first!

Welcome back!

At Quanta I wrote a kind of cartoon of the S-matrix, asking you to think about it as a matrix of probabilities, with rows for input particles and columns for output particles. There are a couple different simplifications I snuck in there, the pop physicist’s “lies to children”. One, I already flag in the piece: the entries aren’t really probabilities, they’re complex numbers, probability amplitudes.
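If you'd like to see the difference between a probability and a probability amplitude concretely, here is a minimal Python sketch. The amplitudes are made-up numbers for illustration, not values from any real process, and the names (`probability`, `amp_a`, `path_1`, and so on) are hypothetical. The one physical fact it relies on is that a probability is the squared magnitude of an amplitude, and that amplitudes for different ways of reaching the same outcome add before you square.

```python
import cmath

def probability(amplitude):
    """A probability is the squared magnitude of a complex amplitude."""
    return abs(amplitude) ** 2

# One row of a toy S-matrix: a single input state with two possible outputs.
# Magnitudes and phases are invented for illustration.
amp_a = cmath.rect(0.6, 0.0)            # magnitude 0.6, phase 0
amp_b = cmath.rect(0.8, cmath.pi / 2)   # magnitude 0.8, phase 90 degrees

probs = [probability(amp_a), probability(amp_b)]
print(probs)  # roughly 0.36 and 0.64, adding up to one

# Why the phase matters: if two routes lead to the same outcome,
# their amplitudes add first, and only then do you square.
path_1 = 0.5
path_2 = -0.5  # same magnitude, opposite phase
prob_interfering = probability(path_1 + path_2)          # amplitudes cancel
prob_naive = probability(path_1) + probability(path_2)   # adding probabilities instead
print(prob_interfering, prob_naive)
```

The last two numbers disagree, which is the whole point: a table of plain probabilities would miss the cancellation that the complex amplitudes capture.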

There’s another simplification that I didn’t have space to flag. The rows and columns aren’t just lists of particles, they’re lists of particles in particular states.

What do I mean by states? A state is a complete description of a particle. A particle’s state includes its energy and momentum, including the direction it’s traveling in. It includes its spin, and the direction of its spin: for example, clockwise or counterclockwise? It also includes any charges, from the familiar electric charge to the color of a quark.

This makes the matrix even bigger than you might have thought. I was already describing an infinite matrix, one where you can have as many columns and rows as you can imagine numbers of colliding particles. But the number of rows and columns isn’t just infinite, it’s uncountable: there are as many rows and columns as there are different numbers you can use for energy and momentum.

For some of you, an uncountably infinite matrix doesn’t sound much like a matrix. But for mathematicians familiar with vector spaces, this is totally reasonable. Even if your matrix is infinite, or even uncountably infinite, it can still be useful to think about it as a matrix.

Another subtlety, which I’m sure physicists will be howling at me about: the Higgs boson is not supposed to be in the S-matrix!

In the article, I alluded to the idea that the S-matrix lets you “hide” particles that only exist momentarily inside of a particle collision. The Higgs is precisely that sort of particle, an unstable particle. And normally, the S-matrix is supposed to only describe interactions between stable particles, particles that can survive all the way to infinity.

In my defense, if you want a nice table of probabilities to put in an article, you need an unstable particle: interactions between stable particles depend on their energy and momentum, sometimes in complicated ways, while a single unstable particle will decay into a reliable set of options.

More technically, there are also contexts in which it’s totally fine to think about an S-matrix between unstable particles, even if it’s not usually how we use the idea.

My piece also didn’t have a lot of room to discuss new developments. I thought at minimum I’d say a bit more about the work of the young people I mentioned. You can think of this as an appetizer: there are a lot of people working on different aspects of this subject these days.

Part of the initial inspiration for the piece was when an editor at Quanta noticed a recent paper by Christian Copetti, Lucía Cordova, and Shota Komatsu. The paper shows an interesting case, where one of the “logical” conditions imposed in the original S-matrix bootstrap doesn’t actually apply. It ended up being too technical for the Quanta piece, but I thought I could say a bit about it, and related questions, here.

Some of the conditions imposed by the original bootstrappers seem unavoidable. Quantum mechanics makes no sense if it doesn’t compute probabilities, and probabilities can’t be negative, or larger than one, so we’d better have an S-matrix that obeys those rules. Causality is another big one: we probably shouldn’t have an S-matrix that lets us send messages back in time and change the past.
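The probability rule can be made concrete with a toy example. Physicists usually phrase it as unitarity: the S-matrix, multiplied by its own conjugate transpose, should give the identity matrix. The sketch below assumes a made-up 2×2 matrix with a single mixing angle (`toy_s_matrix` and `theta` are hypothetical, chosen only because the result is easy to check by hand) and verifies that each row's probabilities then land between zero and one and add up to one.

```python
import math

def toy_s_matrix(theta):
    """A made-up 2x2 unitary matrix; theta is an illustrative mixing angle."""
    c, s = math.cos(theta), math.sin(theta)
    return [[complex(c, 0), complex(0, s)],
            [complex(0, s), complex(c, 0)]]

def dagger_times(S):
    """Compute (conjugate transpose of S) times S for a square nested-list matrix."""
    n = len(S)
    return [[sum(S[k][i].conjugate() * S[k][j] for k in range(n))
             for j in range(n)]
            for i in range(n)]

def is_unitary(S, tol=1e-12):
    """Check that dagger_times(S) equals the identity, up to rounding."""
    product = dagger_times(S)
    n = len(S)
    return all(abs(product[i][j] - (1 if i == j else 0)) < tol
               for i in range(n) for j in range(n))

S = toy_s_matrix(0.3)
# Each row: one input state, with a probability for each output state.
row_probs = [[abs(amp) ** 2 for amp in row] for row in S]

print(is_unitary(S))
for row in row_probs:
    print(row, sum(row))
```

A real S-matrix is vastly larger than this, but the condition it has to satisfy is the same one checked here.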

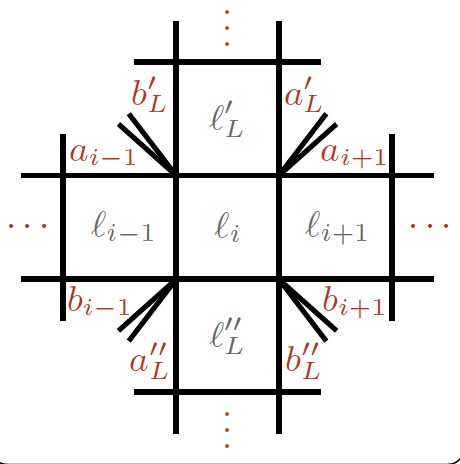

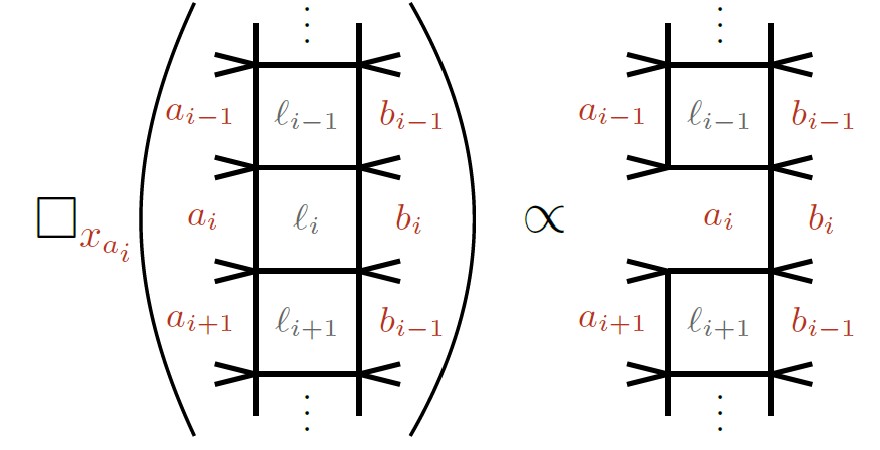

Other conditions came from a mixture of intuition and observation. Crossing is a big one here. Crossing tells you that you can take an S-matrix entry with in-coming particles, and relate it to a different S-matrix entry with out-going anti-particles, using techniques from the calculus of complex numbers.
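For the simplest case, two particles in and two particles out, the bookkeeping behind crossing can be sketched in a few lines. This is an illustration under stated assumptions, not the full story: it uses the standard kinematic variables usually called s, t, and u (the Mandelstam variables, which the Quanta piece doesn't introduce), equal masses, and made-up values for the mass, momentum, and angle. Moving a particle from the incoming side to the outgoing side flips the sign of its energy and momentum, and the sketch shows that this swaps s and t. The flipped momentum has negative energy, so it isn't a physical collision, and that is where the calculus of complex numbers comes in: the code only tracks the labels, not the continuation of the amplitude itself.

```python
import math

def minkowski_sq(p):
    """Squared length of a four-momentum (E, px, py, pz), signature (+, -, -, -)."""
    e, x, y, z = p
    return e * e - x * x - y * y - z * z

def add(p, q):
    return tuple(a + b for a, b in zip(p, q))

def neg(p):
    return tuple(-a for a in p)

def mandelstam(p1, p2, p3, p4):
    """s, t, u for incoming p1, p2 and outgoing p3, p4."""
    s = minkowski_sq(add(p1, p2))
    t = minkowski_sq(add(p1, neg(p3)))
    u = minkowski_sq(add(p1, neg(p4)))
    return s, t, u

# Illustrative numbers: equal-mass particles in the center-of-mass frame.
m, p, theta = 1.0, 2.0, 0.7
E = math.sqrt(m * m + p * p)
p1 = (E, 0.0, 0.0, p)
p2 = (E, 0.0, 0.0, -p)
p3 = (E, p * math.sin(theta), 0.0, p * math.cos(theta))
p4 = (E, -p * math.sin(theta), 0.0, -p * math.cos(theta))

s, t, u = mandelstam(p1, p2, p3, p4)

# Cross particles 2 and 3: the old outgoing particle 3 becomes incoming
# with flipped momentum, and the old incoming particle 2 becomes outgoing.
s_c, t_c, u_c = mandelstam(p1, neg(p3), neg(p2), p4)

print(s, t, u)
print(s_c, t_c, u_c)   # s and t trade places, u is unchanged
print(s + t + u)       # equals 4 * m**2 up to rounding
```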

Crossing may seem quite obscure, but after some experience with S-matrices it feels obvious and intuitive. That’s why for an expert, results like the paper by Copetti, Cordova, and Komatsu seem so surprising. What they found was that a particularly exotic type of symmetry, called a non-invertible symmetry, was incompatible with crossing symmetry. They could find consistent S-matrices for theories with these strange non-invertible symmetries, but only if they threw out one of the basic assumptions of the bootstrap.

This was weird, but upon reflection not too weird. In theories with non-invertible symmetries, the behaviors of different particles are correlated together. One can’t treat far away particles as separate, the way one usually does with the S-matrix. So trying to “cross” a particle from one side of a process to another changes more than it usually would, and you need a more sophisticated approach to keep track of it. When I talked to Cordova and Komatsu, they related this to another concept called soft theorems, aspects of which have been getting a lot of attention and funding of late.

In the meantime, others have been trying to figure out where the crossing rules come from in the first place.

There were attempts in the 1970s to understand crossing in terms of other fundamental principles. These efforts slowed in part because, as the original S-matrix bootstrap was overtaken by QCD, there was less motivation to do this type of work anymore. But the researchers also ran into a weird puzzle. When they tried to use the rules of crossing more broadly, only some of the things they found looked like S-matrices. Others looked like stranger, meaningless calculations.

A recent paper by Simon Caron-Huot, Mathieu Giroux, Holmfridur Hannesdottir, and Sebastian Mizera revisited these meaningless calculations, and showed that they aren’t so meaningless after all. In particular, some of them match well to the kinds of calculations people wanted to do to predict gravitational waves from colliding black holes.

Imagine a pair of black holes passing close to each other, then scattering away in different directions. Unlike particles in a collider, we have no hope of catching the black holes themselves. They’re big classical objects, and they will continue far away from us. We do catch gravitational waves, emitted from the interaction of the black holes.

This different setup turns out to give the problem a very different character. It ends up meaning that instead of the S-matrix, you want a subtly different mathematical object, one related to the original S-matrix by crossing relations. Using crossing, Caron-Huot, Giroux, Hannesdottir and Mizera found many different quantities one could observe in different situations, linked by the same rules that the original S-matrix bootstrappers used to relate S-matrix entries.

The work of these two groups is just some of the work done in the new S-matrix program, but it’s typical of where the focus is going. People are trying to understand the general rules found in the past. They want to know where they came from, and as a consequence, when they can go wrong. They have a lot to learn from the older papers, and a lot of new insights come from diligent reading. But they also have a lot of new insights to discover, based on the new tools and perspectives of the modern day. For the most part, they don’t expect to find a new unified theory of physics from bootstrapping alone. But by learning how S-matrices work in general, they expect to find valuable knowledge no matter how the future goes.