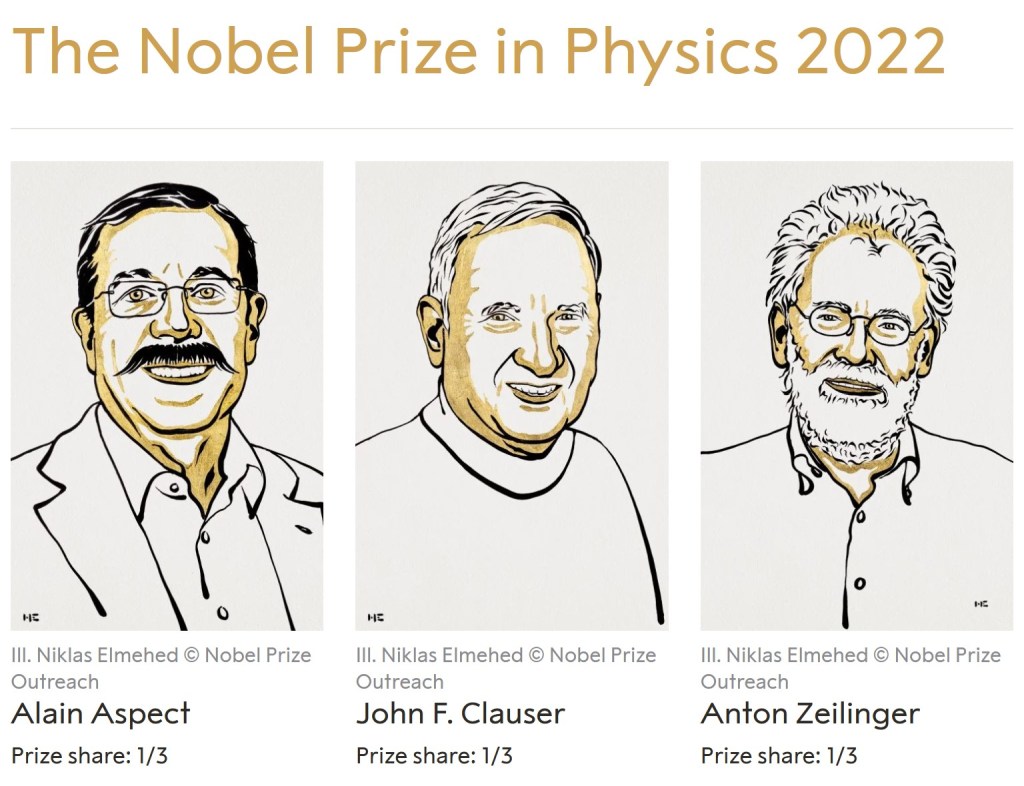

The 2022 Nobel Prize in Physics was announced this week, awarded to Alain Aspect, John F. Clauser, and Anton Zeilinger “for experiments with entangled photons, establishing the violation of Bell inequalities and pioneering quantum information science”.

I’ve complained in the past about the Nobel Prize being awarded to “baskets” of loosely related topics. This year, though, the three Nobelists have a clear link: they were pioneers in investigating and using quantum entanglement.

You can think of a quantum particle like a coin frozen in mid-air. Once measured, the coin falls, and you read it as heads or tails, but before then the coin is neither, with an equal chance of being one or the other. In this metaphor, quantum entanglement slices the coin in half. Slice a coin in half on a table, and its halves will either both show heads, or both tails. Slice our “frozen coin” in mid-air, and it keeps this property: the halves, both still “frozen”, can later be measured as both heads, or both tails. Even if you separate them, the outcomes never become independent: you will never find one half-coin landing on tails and the other on heads.

Einstein thought that this couldn’t be the whole story. He was bothered by the way that measuring a “frozen” coin seems to change its behavior faster than light, screwing up his theory of special relativity. Entanglement, with its ability to separate halves of a coin as far apart as you like, just made the problem worse. He thought that there must be a deeper theory, one with “hidden variables” that determined whether the halves would be heads or tails before they were separated.

In 1964, a theoretical physicist named J.S. Bell found that Einstein’s idea had testable consequences. He wrote down a set of statistical equations, called Bell inequalities, that have to hold if there are hidden variables of the type Einstein imagined, then showed that quantum mechanics could violate those inequalities.
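For readers who want to see the shape of the argument, here is a rough sketch in the conventional textbook notation. The symbols $\lambda$, $\rho$, $A$, $B$, and $E$ are assumptions of this sketch, not something defined elsewhere in this post. In a local hidden-variable theory, each measurement result depends only on the local detector setting and on the hidden variables $\lambda$ that the pair carries:

```latex
% Assumed notation: a, b, c are detector settings, \lambda the hidden variables,
% \rho(\lambda) their probability distribution, A and B the two measured outcomes.
\[
  E(a,b) = \int d\lambda \, \rho(\lambda)\, A(a,\lambda)\, B(b,\lambda),
  \qquad A, B \in \{+1, -1\}.
\]
% For perfectly anticorrelated pairs, A(a,\lambda) = -B(a,\lambda),
% and Bell's 1964 inequality follows:
\[
  \lvert E(a,b) - E(a,c) \rvert \le 1 + E(b,c).
\]
```

Quantum mechanics predicts $E(a,b) = -\cos\theta_{ab}$ for spin measurements on such a pair, where $\theta_{ab}$ is the angle between the two settings. With $a$, $b$, $c$ at 0°, 60°, and 120°, the left side is 1 and the right side is 1/2, so the inequality fails. Note that $\rho(\lambda)$ is not allowed to depend on the settings: that assumption is the one the trickier loopholes go after.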

Bell’s inequalities were just theory, though, until this year’s Nobelists arrived to test them. Clauser was first: in the 70’s, he proposed a variant of Bell’s inequalities, then tested them by measuring members of a pair of entangled photons in two different places. He found complete agreement with quantum mechanics.
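To make the comparison concrete, here is a minimal sketch of Clauser’s variant, usually called the CHSH inequality after Clauser, Horne, Shimony, and Holt. It is an illustration under stated assumptions, not a model of the actual experiment: the function names are made up for this example, the quantum correlation used is the textbook prediction for one particular entangled polarization state, and the angles are the standard choice that maximizes the violation.

```python
import itertools
import math

def quantum_correlation(alpha, beta):
    """Textbook QM prediction for polarization-entangled photons in the state
    (|HH> + |VV>)/sqrt(2), with polarizer angles alpha and beta in radians."""
    return math.cos(2 * (alpha - beta))

def chsh(correlation, a, a_alt, b, b_alt):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return (correlation(a, b) - correlation(a, b_alt)
            + correlation(a_alt, b) + correlation(a_alt, b_alt))

def max_local_chsh():
    """Largest |S| when every outcome (+1 or -1) is fixed in advance.

    Any local hidden-variable model is a probabilistic mixture of these
    16 deterministic assignments, and S is linear, so the same bound holds.
    """
    best = 0
    for A, A_alt, B, B_alt in itertools.product((-1, 1), repeat=4):
        s = A * B - A * B_alt + A_alt * B + A_alt * B_alt
        best = max(best, abs(s))
    return best

deg = math.pi / 180
s_quantum = chsh(quantum_correlation, 0 * deg, 45 * deg, 22.5 * deg, 67.5 * deg)
s_local = max_local_chsh()
print(s_local, s_quantum)
```

The hidden-variable bound comes out as 2, while the quantum value is close to 2.828, that is, 2√2. An experiment that measures S clearly above 2 is siding with quantum mechanics.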

Still, there was a loophole left for Einstein’s idea. If the settings on the two measurement devices could influence the pair of photons when they were first entangled, that would allow hidden variables to influence the outcome in a way that avoided Bell and Clauser’s calculations. It was Aspect, in the 80’s, who closed this loophole: by doing experiments fast enough to change the measurement settings after the photons were entangled, he could show that the settings could not possibly influence the forming of the entangled pair.

Aspect’s experiments, in many minds, were the end of the story. They were the ones emphasized in the textbooks when I studied quantum mechanics in school.

The remaining loopholes are trickier. Some hope for a way to correlate the behavior of particles and measurement devices that doesn’t run afoul of Aspect’s experiment. This idea, called superdeterminism, has recently had a few passionate advocates, but speaking personally I’m still confused as to how it’s supposed to work. Others want to jettison special relativity altogether. This would not only involve measurements influencing each other faster than light, but also would break a kind of symmetry present in the experiments, because it would declare one measurement or the other to have happened “first”, something special relativity forbids. The majority, uncomfortable with either approach, thinks that quantum mechanics is complete, with no deterministic theory that can replace it. They differ only on how to describe, or interpret, the theory, a debate more the domain of careful philosophy than of physics.

After all of these philosophical debates over the nature of reality, you may ask what quantum entanglement can do for you?

Suppose you want to make a computer out of quantum particles, one that uses the power of quantum mechanics to do things no ordinary computer can. A normal computer needs to copy data from place to place, from hard disk to RAM to your processor. Quantum particles, however, can’t be copied: a theorem says that you cannot make an identical, independent copy of a quantum particle. Moving quantum data then required a new method, pioneered by Anton Zeilinger in the late 90’s using quantum entanglement. The method destroys the original particle to make a new one elsewhere, which led to it being called quantum teleportation after the Star Trek devices that do the same with human beings. Quantum teleportation can’t move information faster than light (there’s a reason the inventor of Le Guin’s ansible despairs of the materialism of “Terran physics”), but it is still a crucial technology for quantum computers, one that will be more and more relevant as time goes on.
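If you’d like to see the bookkeeping, here is a minimal sketch of the standard textbook teleportation protocol for a single qubit, simulated classically. It is not a model of the photon experiments themselves, and the function name `teleport` and the dictionary representation of the state are choices made just for this illustration. Alice holds the unknown state plus one half of an entangled pair, Bob holds the other half.

```python
import math
import random

SQRT_HALF = 1 / math.sqrt(2)

def teleport(a, b, rng):
    """Teleport the qubit a|0> + b|1> from Alice to Bob.

    Returns the two classical bits Alice sends and Bob's final state [c0, c1].
    Assumes |a|^2 + |b|^2 = 1.
    """
    # Qubit 0 holds the unknown state; qubits 1 (Alice) and 2 (Bob) share the
    # entangled pair (|00> + |11>)/sqrt(2). Keys are (q0, q1, q2).
    state = {}
    for q0, amp in ((0, a), (1, b)):
        for q in (0, 1):
            state[(q0, q, q)] = amp * SQRT_HALF

    # Alice's joint measurement, step 1: CNOT with control q0 and target q1.
    state = {(q0, q1 ^ q0, q2): amp for (q0, q1, q2), amp in state.items()}

    # Step 2: Hadamard on q0.
    after_h = {}
    for (q0, q1, q2), amp in state.items():
        for r in (0, 1):
            sign = -1 if (q0 == 1 and r == 1) else 1
            key = (r, q1, q2)
            after_h[key] = after_h.get(key, 0) + sign * amp * SQRT_HALF

    # Step 3: measure q0 and q1. This destroys the original state.
    outcomes = [(m0, m1) for m0 in (0, 1) for m1 in (0, 1)]
    probs = [sum(abs(after_h.get((m0, m1, q2), 0)) ** 2 for q2 in (0, 1))
             for (m0, m1) in outcomes]
    m0, m1 = rng.choices(outcomes, weights=probs)[0]
    norm = math.sqrt(probs[outcomes.index((m0, m1))])
    bob = [after_h.get((m0, m1, q2), 0) / norm for q2 in (0, 1)]

    # Bob can only fix up his qubit once the two classical bits arrive.
    if m1:  # bit flip (X)
        bob = [bob[1], bob[0]]
    if m0:  # phase flip (Z)
        bob = [bob[0], -bob[1]]
    return (m0, m1), bob

bits, bob_state = teleport(0.6, 0.8j, random.Random(0))
print(bits, bob_state)
```

Whatever bits Alice happens to measure, Bob ends up with the amplitudes 0.6 and 0.8j, up to rounding. The corrections depend on two ordinary bits that have to travel from Alice to Bob by ordinary means, which is why nothing here moves information faster than light.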

The settings of detectors affecting entanglement of particles about to be detected sounds a bit like malicious deities sabotaging our experiments to me. Then again, Liu Cixin’s Three-body Problem had something similar so at least someone finds it plausible.

4 gravitons,

“Some hope for a way to correlate the behavior of particles and measurement devices that doesn’t run afoul of Aspect’s experiment. This idea, called superdeterminism, has recently had a few passionate advocates, but speaking personally I’m still confused as to how it’s supposed to work.”

Superdeterminism means that the hidden variable and the settings of the detectors are not independent parameters.

In Aspect’s experiment the hidden variables are the polarizations of the photons (EM waves) emitted by the source. The settings of the detectors are their spatial orientations, which, at the fundamental level, are nothing but the distribution/momenta of their internal particles (electrons and quarks).

The polarization of an EM wave is determined by the way the charge (electron) accelerates at the locus of the emission.

The acceleration of the electron is determined by the forces acting on it, the most important being the Lorentz force.

The Lorentz force is determined by the EM field configuration at that location, which, according to Maxwell’s equations, is determined by the global charge distribution/momenta.

So, according to classical theory of electromagnetism, the hidden variable and the global charge distribution/momenta are not independent variables. But the electrons and quarks inside the detectors are part of the global charge distribution/momenta, so we can conclude that superdeterminism is a direct, expected consequence of the classical theory of electromagnetism. No other assumption is necessary. There is nothing mysterious about this.

Let me now address two arguments against superdeterminism:

Superdeterminism implies some finely tuned conditions at the Big-Bang. Scott Aaronson is very fond of this claim.

As you can see in my above explanation, superdeterminism is a direct consequence of classical electromagnetism. As long as the Big-Bang does not lead to a violation of Maxwell’s equations, or Lorentz force equation, or Newton’s equations during a Bell test it is completely irrelevant. Any initial conditions are fine.

Superdeterminism means that we should reject the results of double-blind tests, so we need to reject the scientific method. Tim Maudlin and Mateus Araújo are proponents of this argument.

Superficially it looks like they are right. After all, doctors and patients and all the required devices are made of electrons and quarks, charged particles. Since those particles must obey the equations of classical electromagnetism, one should reject the independence assumption in this case as well. Except that we shouldn’t.

The particularity of a Bell test is that the measurement result, which is determined by the hidden variable, is sensitive to the field configuration at the locus of the emission. Since this field configuration is correlated with the global charge distribution, independence fails.

What is typically measured in a medical test is some statistical/macroscopic property, like the concentration of a chemical in the blood. This concentration does not change if the EM field configuration changes. What changes are the positions/momenta of those molecules, parameters that are irrelevant for the result of the test. So, in this case, the independence assumption is justified. There is no conflict between superdeterminism and the scientific method.

A couple things:

First, you in particular think of superdeterminism as working based on classical electromagnetism. To clarify for readers, this is not how the main physicists working on it today (the ones that I’m aware of are Gerard ‘t Hooft, Sabine Hossenfelder, and Tim Palmer) think about it, and it was mostly their setups where I am confused about how they are supposed to work.

Andrei, we’ve had a lot of discussions about your particular picture, but let me state something that I think I haven’t said before: detector settings are statistical/macroscopic properties. The same logic you apply to medical tests also applies to Bell tests, because of Newton’s third law.

4gravitons,

My take on superdeterminism (using classical EM) can be generalized for any theory that involves long-range interactions.

The particular form of the equations describing the theory is not important. What’s important is that:

Long-range interactions exist.

Those interactions are relevant for the parameter that is being measured.

’t Hooft’s model is a discrete field theory, so it involves such long-range interactions, and this is why his model is superdeterministic. I am less familiar with the Palmer-Hossenfelder model, but it still makes use of the fact that some states are impossible to achieve, so they could alter the so-called “classical prediction” where the inequality is not violated.

True, the detectors’ settings are macroscopic, but the hidden variable is not. You cannot rotate the detector and keep the same charge distribution/momenta inside the detectors, so a macroscopic rotation of the polarizer would require a corresponding change of the state of the source, so a change of the hidden variable.

JollyJoker,

“The settings of detectors affecting entanglement of particles about to be detected sounds a bit like malicious deities sabotaging our experiments to me.”

Please read my above post to 4 gravitons for a detailed description of how superdeterminism works.

Your mention of the 3-body problem is interesting. I didn’t read that book but one can also understand superdeterminism in this way.

Fundamentally, the source and detectors are just large aggregates of charged particles (electrons and quarks). All of them interact, regardless of the distance, since the EM interaction is infinite in range. That means that only those states that are solutions of the corresponding N-body problem (where N is the number of electrons and quarks involved) are possible. This means the independence assumption must be false. If you keep the field configuration at the source the same (so that the polarization, the hidden variable, remains the same) and change the charge distribution at the detector (by rotating it) you end up with a state that violates Maxwell’s equations, a state that is not a solution of the N-body problem. Such a state is impossible to achieve. Since Bell’s theorem does not make any effort to eliminate such impossible states from the statistics (by assuming independence), one can reject its validity when long range forces are present.

I’m quite late to this post, but anyway...

I’m afraid that superdeterminism is a non-starter! It’s not a respectable alternative to standard QM the way, e.g., pilot wave or physical collapse theories are. These have their own issues, but at least they’re well defined, and in some cases (GRW or Diósi/Penrose) they even make estimates of possible deviations from the standard theory.

Superdeterminism, on the other hand, works by rejecting statistical independence, one of the most basic assumptions of any kind of “experimental science”.

It works by imposing infinitely precise (fine-tuned) initial conditions. Note that anything less than that is not sufficient:

Even the slightest deviations will be enough to destroy any correlations very quickly (and proposals that require such extreme fine tuning are considered unphysical already in classical physics).

We’re talking about a very big, complicated, old, expanding universe that has past “particle horizons”, not a toy model with a few classical particles.

The existence of past horizons makes this basic premise of superdeterminism totally implausible, because as we’re going backwards, near the beginning of our universe, more and more “distant” events become causally isolated, so there is no “common cause” that could correlate them all!

There is also the “coincidence” that these correlations have to “mimic”, with unbelievable accuracy, the predictions of standard QM, because Bell’s inequalities are violated exactly as QM predicts! It could have been otherwise...

Good scientific proposals (like standard QM or GR), having only a few assumptions, give a vastly rich outcome: they’re prolific.

With superdeterminism it is the opposite: too many coincidences, zero explanatory power. “Who ordered”, by the way, these contrived initial conditions? Does Nature want to cheat us, somehow?!

By the way, if “you” are rejecting statistical independence (SI) or using “double criteria”, others can do the same with “your” proposals:

Whatever experiment you’ll think of, they’ll reject it with the same “logic” as in superdeterminism. No need for experiments anymore... as in pseudoscience!

One thing that confuses me about this topic is that Hossenfelder seems to make a big deal about only needing the particles to be correlated with the measurement apparatus at the time of measurement, which seems to be intended as a way around the initial conditions problem. (Arguing that you don’t need to have your decisions correlated with earlier states of the particles, for example.) This baffles me because I have no idea how you could get the former type of correlations without the latter, without destroying the very notion of causality they’re trying to preserve. But if they’ve got some way to do it, it’s a way around a few of the usual objections at least.

4gravitons (Matt)

Correct me if I’m misinterpreting your response, but exactly this kind of “local hidden variable” theory is rejected by the experiments that are testing Bell’s theorem! If we didn’t need any contrived initial conditions but only a locally deterministic theory, then things would have been very different anyway.

Superdeterminism has to do with the following explicit (or sometimes “hidden”) assumptions:

1) That the fundamental theory is, at least locally, strictly deterministic.

2) That spacetime is (semi-classically) globally hyperbolic, so we can specify our data on a (suitable) spacelike hypersurface.

3) That these initial conditions have to be:

3a) Fine tuned, with infinite precision (no perturbations are allowed).

3b) “Cooked” in such a way as to alter which experiments are done and which are not and/or how they’re performed (so they’re giving us the “wrong statistics”, let’s say, as if Nature, or whatever, is trying to cheat us...). There’s a handwavy element in all that: proponents of superdeterminism do not always clarify how that happens, and I suspect that there cannot be, anyway, a unique specific model for such a thing.

This is the infamous rejection of “statistical independence” (SI) that is supposedly related to the mysteriously cooked initial conditions. By the way, there is a kind of “double criteria” involved here, because SI is supposedly violated only for specific kinds of experiments that have to do with entanglement and not for others (those considered “macroscopic”). Of course one could point out that the devices and setups in all experiments are macroscopic and our theory is considered “fundamental”, so, in principle, if SI is rejected and reductionism holds, then no experiment could be trusted.

The next step for a superdeterminist is to reject reductionism and try to find a holistic theory that explains how SI holds for some experiments and not for others (I wish them good luck with that...).

3c) The final nail is that the Bell inequalities could have been violated in many different ways (or even randomly). But they are violated exactly as QM predicts...

Sorry for the lengthy response (and some repetitions in clarifying arguments).

I don’t get the impression that Hossenfelder’s specific “double criteria” involve pointing to some things as “macroscopic” and others not (unlike, say, Andrei’s comments here). But I agree that your point 3) is the crux of the problem. I think Hossenfelder would probably reject any notion of fine-tuning you’re using in 3a), since she rejects the notion that we can judge some initial conditions to be more fine-tuned than others (see her opinions on naturalness, but especially on the flatness and horizon problems in cosmology). But I don’t know how she evades 3b), and some of her phrasing makes it sound like she thinks she’s avoiding 2) instead.

Basically, what you say here is essentially my understanding of the issue as well. I’ve read some, but certainly not most, of her writing on this topic, and it makes it sound like she thinks she has some way around some of it, but I can’t tell how much that is self-deception (/actual deception) versus actually having an argument that gets around it.

Yeah, I’ve heard these arguments about fine tuning. But there is a big difference between the flatness problem or particle physics’ naturalness and an infinitely fine-tuned set of initial conditions:

It is the difference between something that seems improbable or implausible at first (although there is presumably something else that explains it) and something that is totally unphysical or even impossible.

The universe does not have to be exactly flat, the horizon problem can be solved by inflation, etc. (Of these “finite fine tuning” problems, the cosmological constant issue is perhaps the toughest.)

The other case is totally different:

From all the infinitely many conceivable sets of initial conditions that are otherwise allowed, we have to restrict our universe to a set of measure zero, and this has to be imposed by hand. And this has to give the same correlations in distant, causally separated regions of spacetime, as in a miracle (or a weird joke, as in a Philip K. Dick science fiction story).

A slight deviation from this will give wrong results in experiments. And I don’t know any “anthropic” argument that could ameliorate this...

I think she’d argue that any claim that you know the measure on the space of initial conditions is presumptuous, because initial conditions aren’t drawn from a probability distribution, they’re just a brute fact we have to infer from data.

But yeah, I think if you make that a fundamental tent-pole of your approach then you give up all hope of actually getting predictions that couldn’t already be made with normal QM, so to the extent that she’s claiming she might be able to do that presumably she has another answer as well. What it is, I have no idea.

Dimitris Papadimitriou

“Superdeterminism, on the other hand, works by rejecting statistical independence, one of the most basic assumptions of any kind of ‘experimental science’.”

Statistical independence (SI) is not a “basic assumption” in science. Different properties of 2 or more systems can be independent or not, depending on the kind of interactions that take place between them.

A pair of orbiting stars interact gravitationally, so their state must be a solution to the gravitational 2 body problem. Their velocities for example would not be independent, the velocity vectors have to be in the same plane. No scientist would assume that those velocities are independent parameters, yet, somehow, the scientific method survived.

We can generalize this situation to N bodies. The state of such a system has to be a solution to the corresponding N-body problem. So, again, the position and velocity of object N_i would not be independent of the positions/velocities of the other N-1 objects. If you want to change the velocity of N_i in some way, while keeping the positions/velocities of the other objects the same, you will end up with a state that is not a solution to the N-body problem, so it’s an impossible state.

SI is assumed in those situations where no interaction exists or the interaction is not relevant to what is being measured. I claim that in the case of Bell tests such a relevant interaction exists. It’s the electromagnetic interaction between the electrons and nuclei that make up the source/detectors. In this case SI is not true.

“It works by imposing infinitely precise (fine-tuned) initial conditions.”

This is false. The so-called “superdeterministic” correlations are a result of the interaction between the source and detectors, just like the correlations between a pair of orbiting stars are a result of their gravitational interaction. The initial conditions are irrelevant.

“Even the slightest deviations will be enough to destroy any correlations very quickly”

And this claim is based on … what?

“The existence of past horizons makes this basic premise of superdeterminism totally implausible, because as we’re going backwards, near the beginning of our universe, more and more ‘distant’ events become causally isolated, so there is no ‘common cause’ that could correlate them all!”

I’d like to see some evidence for the existence of those “causally isolated” events.

“There is also the ‘coincidence’ that these correlations have to ‘mimic’, with unbelievable accuracy, the predictions of standard QM”

The point of superdeterminism is that QM is a statistical approximation of the fundamental, superdeterministic theory. It’s like saying that the velocities of the molecules of a gas have to “mimic”, with unbelievable accuracy, the prediction of thermodynamics for the temperature of that gas.

“Good scientific proposals (like standard QM or GR), having only a few assumptions, give a vastly rich outcome: they’re prolific.”

The problem with standard QM is that it is either non-local or incomplete (EPR). If it’s non-local, it conflicts with relativity, a big deal. If it’s incomplete, there is a single way to complete it, that way being superdeterminism. And no, QFT is not local: try finding a local account of the measurement (wave collapse).

“With superdeterminism it is the opposite: too many coincidences, zero explanatory power.”

There is no reason to postulate any coincidence. SI can be shown to be false by simply looking at the physics involved.

“By the way, if ‘you’ are rejecting statistical independence (SI) or using ‘double criteria’, others can do the same with ‘your’ proposals:

Whatever experiment you’ll think of, they’ll reject it with the same ‘logic’ as in superdeterminism. No need for experiments anymore... as in pseudoscience!”

Not true. Superdeterminism does not deny SI in all situations. It only denies it for Bell tests (and some other “quantum” experiments).

All objects interact electromagnetically since they consist of charged particles (electrons and nuclei). This implies that the internal states of all objects are correlated (they need to satisfy the corresponding N-body problem, where N is the number of charges involved in the experiment). However, these correlations disappear at the macroscopic level for statistical reasons (the EM forces cancel out for large, neutral objects). So, one can assume SI in those situations, which include medical/biological tests, chemistry, mechanics, etc.

The hidden variable in a Bell test (the polarisation of the emitted EM waves/photons) is indicative of the exact state of the atom, so it has to be correlated with the state of all other charges, including those in the detectors.

Andrei

I don’t know where to start or where to end:

The main point of these experimental tests of Bell’s inequalities was to rule out any such loophole for good. After 2015 the last loophole is closed.

Local hidden variable theories are exactly the kind of theories that have been falsified. Superdeterminism is the desperate and implausible attempt to keep such theories “alive”, somehow.

Among the very few proponents of superdeterminism, General Relativists or Cosmologists are nowhere to be found. This is at odds with what you seemingly believe, but is exactly what is to be expected:

Every introductory textbook about General Relativity has chapters on Cosmology. There you can find all the basic Cosmological models, their conformal diagrams and detailed information about Cosmological horizons.

Look especially for the definition of past “particle horizons”. Then check the relevant conformal diagrams that show clearly the causal structure.

You’ll see yourself how distant events become causally isolated near the bottom line (that represents the big bang).

(Amusingly, there is a way to circumvent this ‘past horizon’ problem, somehow, by invoking... inflation!

But unfortunately there is no convincing anthropic argument that’s related to Bell’s theorem, and besides that, inflation already presupposes QM and GR, so in that case your alternative theory cannot be considered fundamental.)

So, if you want to avoid inconsistencies you need to adjust, with infinite precision, your data, on any arbitrary Cauchy hypersurface in such a way that after billions of years, experiments that are performed here on Earth will violate Bell’s inequalities as standard QM predicts (see my previous comments, I won’t repeat myself again).

There is an old joke about a wife who comes home without warning and goes to the bedroom, where she finds her husband with another gal.

“No, wait honey, that’s not what you’re thinking!” says he. (“Don’t trust your eyes, just believe what you want to believe, or even better, what I want you to believe!”)

That’s exactly what superdeterminism or other “conspiracy theories” are like.

I’m sorry, but I can’t take them seriously.

Andrei

4gravitons

To be fair, my above criticisms are for the common “definition”/understanding of superdeterminism as a kind of “conspiracy theory” (determinism plus fine-tuned initial conditions).

The main hype started several years ago with the campaign against “free will”.

That was a typical “category mistake” (like arguing that our thoughts or a musical composition, e.g., “do not exist”) that was later gradually abandoned and replaced by the more “scientific sounding” rejection of statistical independence.

More recently there is another shift that has to do with the need for fine tuning. Perhaps a change of label is needed, if this change of goalposts really corresponds to a fundamentally different kind of proposal.

But I’m not sure that this is the case and I don’t see how these new models are supposed to evade Bell and the experiments:

As I stated before, deterministic local hidden variable theories are already excluded. Detector devices can be spacelike separated and any influence from the “source of the entangled pairs” or the local hidden variables cannot evade the theorem. There is also the influence from the past light cones of each lab/detector that can randomise them easily (without imposing some extremely fine-tuned initial conditions), so I don’t see any real difference. We don’t live in a toy model...

In standard QM, the usual mild non-separability (that does not violate relativistic locality/causality, by the way, contrary to the claims) is enough.

On the other hand, how could we ever find positive evidence for a “superdeterminist model” without a violation of no-signaling?

Dimitris Papadimitriou,

I’ve already replied, but the comment got lost. So let me answer it again.

“my above criticisms are for the common ‘definition’/understanding of superdeterminism as a kind of ‘conspiracy theory’ (determinism plus fine-tuned initial conditions).”

The problem is that this never was the definition of superdeterminism. According to Bell, superdeterminism is the denial of the statistical independence assumption, meaning that the settings of the detectors and the hidden variables are not independent parameters. Now, there are more ways to achieve that, and introducing conspiracies could be such a way, but it’s not the only one.

“As I stated before, deterministic local hidden variable theories are already excluded.”

Again, superdeterminism is a particular type of a deterministic theory, so, deterministic theories are not all excluded. In fact, any deterministic theory containing long-range interactions (electromagnetism, gravity) is superdeterministic.

“Detector devices can be spacelike separated and any influence from the ‘source of the entangled pairs’ or the local hidden variables cannot evade the theorem.”

This is the wrong way to look at the situation. If you look at this experiment at the fundamental level, it’s just 3 large groups of charged particles (source + detectors) interacting as they should, electromagnetically. Since every particle interacts with every other particle, regardless of the distance, the whole system must be in a state that is a solution to the corresponding N-body problem, where N is the number of particles involved (10^30 or so). If you want to keep the hidden variable the same, you need to keep the state of the source the same. Let’s say you do that but now change the state of the detector so that it corresponds to its new orientation. Do you think that this new state would also be a solution to the N-body problem? I think not. That means that the hidden variable and the detector settings are not independent variables. You change one, you also need to change the other so that the new state is a valid one.

“In standard QM, the usual mild non-separability (that does not violate relativistic locality/causality, by the way, contrary to the claims) is enough.”

I disagree. Consider the EPR-Bohm setup (with spins) and detectors fixed on Z. You measure A and get spin-UP. QM tells you that the state of B is now spin-DOWN. Introduce the locality condition: the A measurement does not change the state of B. So, it logically follows that the state of B before the A measurement had to be spin-DOWN as well (otherwise it changed). So, locality + QM implies that the measured spin of B was predetermined; it was spin-DOWN since emission. But this is different from the QM description of the pre-measurement state, which is a superposition of UP/DOWN with 0.5 probability each. So, either you “improve” QM by adding the pre-measurement state (hidden variables) or accept non-locality.

Let’s now see how “mild” this non-locality is. Sure, you cannot send a message this way, since the results are beyond your control. However, you introduce an asymmetry. You need to define a “first” measurement, say it’s A which might be random, and take B as instantly determined by A. The problem is that relativity cannot order space-like events like that and you would have observers contradicting each other. To solve this you need an absolute frame of reference, which means a rejection of relativity. This is what Bohmians are trying to do.

“On the other hand, how could we ever find positive evidence for a ‘superdeterminist model’ without a violation of no-signaling?”

I think the best way is to propose a superdeterministic model that gives QM in its limit. That evidence should be enough.

Dimitris Papadimitriou,

“Among the very few proponents of superdeterminism, General Relativists or Cosmologists are nowhere to be found.”

This is false; ’t Hooft is an example of such a physicist.

“You’ll see yourself how distant events become causally isolated near the bottom line ( that represents the big bang).”

We do not have a theory of the Big-bang, so we do not know the state right after the Big-bang. I see no reason to assume that this state did not obey Maxwell’s equations. If it did, we know that this state will lead to a present state that also obeys those equations, so there never were “causally isolated” regions. This is consistent with our observations that the universe is smoother than it should be, and we don’t need to invent new fields (the inflaton).

“So, if you want to avoid inconsistencies you need to adjust, with infinite precision, your data, on any arbitrary Cauchy hypersurface in such a way that after billions of years, experiments that are performed here on Earth will violate Bell’s inequalities as standard QM predicts”

No adjustment is necessary. If you start with a proper state (a state that satisfies Maxwell’s and Einstein’s equations) you are guaranteed to end up now with a proper state as well. The moment you impose the independence assumption, you get a state that does not satisfy the relevant equations, so it’s unphysical.

By rejecting SI, superdeterminism restores consistency and gives you back locality as a bonus. What’s not to like?

Andrei

As briefly as possible:

– Gerard ’t Hooft has worked in many areas, but he’s famous as a Nobel Prize-winning particle physicist.

– General Relativity is certainly not a” superdeterminist theory”.

– There aren’t actually any superdeterminist theories. Only several , mostly contrived, models.

-The existence of Past particle horizons in cosmological models ( that are relevant to our universe) is , mainly, a consequence of the expansion and the finite light speed. No controversy here..

– Talking about mild non-locality I was referring to standard QM, not pilot wave theories.

– Quantum measurements have also mild ( in QM) non-local characteristics.

– That’s not the case in alternative theories ( like hidden variables) where serious conflict with Relativity occurs.

– Even in Many Worlds ( which is wavefunction realist ) there is an issue with the ” splitting” of the semi-classical worlds ( is it local or global? Both options seem problematic).

– Except perhaps for simplistic toy models, I very much doubt that any version of a deterministic local hidden variable model will reproduce the same results as QM in an old, huge ( perhaps spatially infinite) universe, without precise fine tuning or extreme non-locality, that violates Relativity ( so no motivation for it against QM, anyway).

Dimitris Papadimitriou,

“The existence of Past particle horizons in cosmological models ( that are relevant to our universe) is , mainly, a consequence of the expansion and the finite light speed. No controversy here..”

OK, I agree that there are regions which cannot exchange signals because of the universal expansion. What I do not agree with is that those regions have independent/uncorrelated states. The evolution of the universe is described by a deterministic theory (GR). If you let this theory evolve the initial state (at the Big-bang), it has to lead to a state that, obviously, satisfies its equations.

The only way to end up with uncorrelated regions is to introduce a genuinely random process after the regions became disconnected. But, of course, I have no reason to accept that any such random events exist. In the absence of such an event, the disconnected regions would keep preserving the correlations that existed since the Big bang.

“Talking about mild non-locality I was referring to standard QM, not pilot wave theories.”

So did I.

“Quantum measurements have also mild ( in QM) non-local characteristics.”

And I have provided an argument why this non-locality is not “mild at all”. Please read again my argument based on the EPR experiment. The only way to keep QM consistent with relativity is to add hidden variables. There is no other way.

“Even in Many Worlds ( which is wavefunction realist ) there is an issue with the ” splitting” of the semi-classical worlds ( is it local or global? Both options seem problematic).”

My issue with MWI is that it seems impossible to provide a justification for the Born rule, so I see no reason to take it seriously.

The issues with determinism in GR are very interesting and subtle. The situation is not so simple as it seems:

The theory is considered locally deterministic but it is not necessarily globally so.

Spacetime solutions that are called ” globally hyperbolic” have the property that we can define our data on spacelike Cauchy hypersurfaces ( slices that have some properties, e.g. they don’t intersect singularities), but , otherwise, they can be chosen rather arbitrarily ( there’s no need for such slice to be ” close to the big bang ” in Cosmology, for example).

GR solutions are not generically restricted to specific initial conditions (*), so GR is not “superdeterminist”, contrary to misconceptions you might have heard.

For example, you can have a simple solution (that describes approximately on a large scale a universe) that is compatible “on average” with a large variety of initial conditions that describe the details on a “local” level. If you perturb a set of initial conditions you end up with another that may be very different on small or intermediate scales, but it looks the same on large ones.

We can have also “non globally hyperbolic” spacetimes that do not admit such “nice slices” everywhere we like ( they have Cauchy horizons that are associated with e.g. timelike (naked) singularities or closed timelike curves etc).

Such solutions are not globally deterministic!

(If we enter semi-classical gravity, spacetimes with evaporating black holes are non globally hyperbolic [as in our real universe! ] and that’s related with the infamous black hole information problem).

So, you see, things are not so easy as they seem at first for determinism in GR. There is still ongoing research about these topics, including rotating black holes, cosmic censorship and all that wonderful stuff.

(*) There are “exotic” situations , like CTCs, or various instabilities, where we can have restricted fine tuned boundary conditions:

Imagine something big and complicated ( like a living organism or a digital camera) on a strictly closed timelike loop.

This loop cannot change! It happens only once for other “external to the loop” observers. There is no memory of a “previous” circle, although proper time always goes forward. That’s at odds with thermodynamics.

Even slight perturbations are enough to disturb or break the loop and so , alter dramatically the causal structure.

Andrei

In previous comments I said clearly that quantum mechanical “mild non locality” does not violate relativity. It doesn’t allow for superluminal signaling.

That’s a very common misconception that’s all over the place, so I don’t “blame” you for that.

In pilot wave theories it’s not that “mild” ( that’s clearly stated in the 4gravitons blog post already). These theories need preferred reference frames.

I strongly suspect also that the ” new, improved” version of superdeterminism ( that supposedly does not need fine tuned boundary conditions) requires the same, so non locality (of the superluminal communication kind) enters again the party and cancels the initial motivation about locality..but anyway..

Andrei

Sorry for coming back, but I just spent some time looking more carefully at the 2021 Donadi/Hossenfelder paper (2010.01327v5 – there’s a link in the blog post).

Look at chapter 3 (“on future input dependence”):

There are signals that transmit information not only from the “source” to the two detector devices but also backwards ! ( from the detectors to the source).

Although the model is supposed to be locally deterministic, this spacetime is “non globally hyperbolic”, exactly what I suspected!

Although it is not explicitly stated, this back and forth exchange of signals between the detectors and the source can only happen if there are closed timelike curves!

So, again, it needs precisely fine tuned boundary conditions..( look my previous relevant comments).

One thing that almost everyone interested in QM foundations agrees on is that whatever interpretation or alternative we choose, there is always a cost. We can’t have our cake and eat it too.

Dimitris Papadimitriou,

„In QM, the “collapse of the wavefunction” is not an objective physical process that happens everywhere instantaneously.”

OK.

„In EPR experiments, there is no absolute temporal order between measurements that are causally separated.”

OK, this means that you accept P2 as true, right?

„The collapse from a measurement in QM is only “relational”, it is “observer dependent” ( observer means anything that acts as a measuring device with any kind of record). This (along with the stochastic element of QM ) is essential for making QM compatible with Special Relativity.”

I don’t follow you here. In my argument we can assume that at each lab we have a computer programmed to perform the measurement at a specified time. Some time later you look at the experimental records (containing the result and measurement time) and notice that the measurements were spacelike and the results are perfectly correlated. This is what needs to be explained.

As far as I can tell there is nothing “observer dependent” about those records and, sure, any observer may check the results by repeating the measurements and he is guaranteed to get the same result.

So, please help me here. My argument only depends on P1 (QM tells you that the state of B is now spin-DOWN) – the perfect correlations all observers can check by looking at the experimental records, and P2 (the A measurement does not change the state of B) – which is the locality condition you seem to agree with. How exactly is this talk about observer dependence relevant for this argument?

„if you insist on globally hyperbolic spacetimes, you need infinitely fine tuned initial conditions.”

What do you mean by finely tuned here? Do you have a theory for the big-bang which makes globally hyperbolic spacetimes unlikely or what?

You can also take this as a test for superdeterminism. If you can prove that we don’t live in a globally hyperbolic spacetime you falsify superdeterminism. The question is, can you?

Dimitris Papadimitriou,

About the GR – determinism issue.

I understand that there are some circumstances where GR could be non-deterministic (naked singularities, closed timelike curves, etc.). My question is whether there is any evidence that such circumstances actually occur in the visible universe.

“you can have a simple solution (that describes approximately on a large scale a universe) that is compatible “on average” with a large variety of initial conditions that describe the details on a “local” level. If you perturb a set of initial conditions you end up with another that may be very different on small or intermediate scales, but it looks the same on large ones.”

I do not see how this conflicts with determinism/superdeterminism. The positions/momenta of electrons in a chair can be very different while the chair looks macroscopically the same. So what? The electrons could still be described by a deterministic theory so that their positions/momenta in the present are uniquely determined by some past state.

About non-locality in standard QM.

Let’s discuss my argument in more detail. The argument is:

“Consider the EPR-Bohm setup (with spins) and detectors fixed on Z. You measure A and get spin-UP. QM tells you that the state of B is now spin-DOWN. Introduce the locality condition – the A measurement does not change the state of B. So, it logically follows that the state of B before the A measurement had to be spin-DOWN as well (otherwise it changed). So, locality +QM implies that the measured spin of B was predetermined, it was spin-DOWN since emission.”

Tell me if you do agree or not that the state of B changes after the A measurement, and if so, would you think that such a change is compatible with SR?

“I strongly suspect also that the ” new, improved” version of superdeterminism ( that supposedly does not need fine tuned boundary conditions) requires the same, so non locality (of the superluminal communication kind) enters again the party and cancels the initial motivation about locality..but anyway..”

My preferred version of superdeterminism is the theory of stochastic electrodynamics. The theory is not advertised as superdeterministic but it is. A recent review is here:

Stochastic Electrodynamics: The Closest Classical Approximation to Quantum Theory

Atoms 7, 29-39 (2019)

https://arxiv.org/abs/1903.00996

The theory is in fact Maxwell’s theory but it takes into account the EM fields generated by all particles in the universe and possible primordial fields. This field is what is measured as Casimir force.

This theory is perfectly local so I disagree that superdeterminism necessarily requires any type of non-locality.

About Hossenfelder’s model.

I don’t know the paper, I’ll try to look at it and come back.

Andrei

The “no-signaling theorem” forbids superluminal communication in standard QM (check it up). In an EPR like experiment, Alice can manipulate her device (e.g. adjust the angle) but she has no way to predict the result of each individual measurement outcome , so she can’t encode a message to Bob using quantum mechanical entanglement. It’s not merely a practical, “engineering” problem!
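
The no-signaling property can be checked numerically for the simplest case. The sketch below assumes the standard singlet-state formula for spin-1/2 pairs, P(a, b | α, β) = (1 − a·b·cos(α − β))/4 with outcomes a, b = ±1; the function names and the sample angles are made up for illustration. It sums over Alice’s outcomes and shows that Bob’s statistics do not depend on Alice’s angle:

```python
import math

def joint_probability(a, b, alpha, beta):
    """Singlet-state probability of outcomes a, b (each +1 or -1)
    for analyzer angles alpha (Alice) and beta (Bob), in radians."""
    return (1 - a * b * math.cos(alpha - beta)) / 4

def bob_marginal(b, alpha, beta):
    """Probability that Bob sees outcome b, summed over Alice's outcomes."""
    return sum(joint_probability(a, b, alpha, beta) for a in (+1, -1))

beta = 0.3  # Bob's angle, held fixed (arbitrary illustrative value)
for alpha in (0.0, math.pi / 4, math.pi / 2, 2.0):
    # Alice changes her setting; Bob's marginal should not move.
    print(f"alpha = {alpha:.3f}: P(Bob = +1) = {bob_marginal(+1, alpha, beta):.3f}")
```

The dependence on α only shows up in the joint probabilities, which is why the correlations become visible only once the two records are compared.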

The intrinsic randomness of QM measurements ( the probabilistic aspect of QM, that you don’t like) ensures that relativistic Causality is respected.

The correlations that QM predicts are becoming apparent when we compare the experimental results after the experiment is done, in the future. The statistics confirm the predictions of the theory all the time..

Some people don’t like this stochastic aspect and want to insert hidden variables, like in pilot wave theories. These kinds of alternatives do indeed have problems with relativistic locality; QM does not, so don’t believe the hype!

This notion of locality is, perhaps, an approximation in quantum gravity and people speculate that it will be replaced by something else in a deeper theory.

But that’s a different story, that has nothing to do with the usual misconceptions!

General Relativity (and classical physics) generically respect some notion of determinism, but they are not “superdeterminist”. Classical theories admit various initial conditions, they’re not restricted to only some specific sets ( of measure zero)! That’s a huge difference!

In the superdeterminist paper that I mentioned, a preferred reference frame is assumed ( just like in Bohmian mechanics..), besides the other issues that have to do with transmission of physical signals from the measurements ( detection devices) back to the past ( to the prepared state that is the “source”).

So, again it seems that CTCs are all over the place in such models and that implies also Cauchy horizons with instability issues, violation of energy conditions and , once again.. precise fine tuning!

Dimitris Papadimitriou,

„The “no-signaling theorem” forbids superluminal communication in standard QM (check it up). In an EPR like experiment, Alice can manipulate her device (e.g. adjust the angle) but she has no way to predict the result of each individual measurement outcome , so she can’t encode a message to Bob using quantum mechanical entanglement. It’s not merely a practical, “engineering” problem!”

Yes, I agree with all this but the impossibility of superluminal communication is not the same thing as the impossibility of superluminal causation, which is what you need for consistency with SR.

As you say, the reason why A and B cannot communicate (which means sending a meaningful message) is because the measurement outcomes are unpredictable. Let’s say that in an EPR experiment A gets 001010111. If spin 1 particles are used, B is guaranteed to also get 001010111. The question is now, how exactly did B get the same result? You have two logical options:

1) The spins were predetermined from the time of emission (hidden variables/superdeterminism).

2) A „created” the string „001010111” by a genuinely random process, as Copenhagen says, and this string is instantly sent to B.

As far as I can tell you choose 2, but notice that A cannot control the string. So A cannot engage in a meaningful communication with B in this way. My question is „who cares?”.

What SR requires is that if the A and B measurements are spacelike separated, A cannot cause B directly and B cannot cause A. But your option 2 contradicts this. A DID cause B. A superluminal signal has been sent from A to B. The excuse that the signal is not of any practical use is irrelevant. It’s a red herring devised to hide the failure of non-determinism.

„The intrinsic randomness of QM measurements ( the probabilistic aspect of QM, that you don’t like) ensures that relativistic Causality is respected.”

On the contrary, relativistic causality is not respected. In the above example a spacelike event (A measurement) caused the specific outcome, „001010111” at B.

„The correlations that QM predicts are becoming apparent when we compare the experimental results after the experiment is done, in the future.”

Indeed, but by logical reasoning we can conclude that, unless hidden variables are introduced, those correlations point to a superluminal signal being sent.

„Some people don’t like this stochastic aspect and want to insert hidden variables…”

It’s not about what one likes or dislikes. The stochastic aspect, when applied to the EPR setup necessarily leads to a conflict with SR. Given the huge amount of evidence we have for SR and the lack of conclusive evidence for any genuinely stochastic process, it is perfectly reasonable to reject the indeterministic interpretations.

„General Relativity (and classical physics) generically respect some notion of determinism, but they are not “superdeterminist”.”

If we stick to the minimalist requirement for superdeterminism, that the state of the detectors and the state of the source (which determines the hidden variables) are not independent, we need to conclude that GR is indeed superdeterministic. One cannot change the mass distribution of the detector (by rotating it) without a suitable adjustment of the gravitational field at the source. Imposing independence leads to a failure to satisfy the theories’ equations.

„Classical theories admit various initial conditions, they’re not restricted to only some specific sets ( of measure zero)! That’s a huge difference!”

I have no idea why you insist that superdeterminism has anything to do with the initial conditions. The failure of the independence assumption is associated with the constraints we need to impose for the global system (source+detectors), that the equations of the theory are satisfied. This is true for any initial state. In electromagnetism, the requirement that the global system satisfies Maxwell’s equations is the reason for the failure of independence. Likewise, in GR we have the requirement that Einstein’s equations are satisfied globally. This is also true for any initial state.

„In the superdeterminist paper that I mentioned, a preferred reference frame is assumed ( just like in Bohmian mechanics..), besides the other issues that have to do with transmission of physical signals from the measurements ( detection devices) back to the past ( to the prepared state that is the “source”).”

To be honest, I’m not impressed by that paper and I am not prepared to defend it. On page 8 they say:

„An often-raised question is how the hidden variables at P already “know” the future detector settings at D1 and D2. As for any scientific theory, we make assumptions to explain data. The assumptions of a theory are never explained within the theory itself (if they were, we wouldn’t need them). Their scientific justification is that they allow one to make correct predictions. The question how the hidden variables “know” something about the detector setting makes equally little sense as asking in quantum mechanics how one particle in a singlet state “knows” what happens to the other particle. In both cases that’s just how the theory works.”

This is certainly frustrating. A model should explain, at least qualitatively what’s going on. So, here is my take on how P „knows” about D1 and D2.

Before the emission the source „knows” the state of the detectors in the past from the electric and magnetic fields associated with the electrons and nuclei in the detectors at the location of the source. The fields are given by the equations here (21.1):

https://www.feynmanlectures.caltech.edu/II_21.html

These fields will determine how the electron accelerates by Lorentz force law, so they will determine the hidden variable (polarisation of the emitted waves). In other words, the hidden variable is correlated with the past states of the detectors. But the future states of the detectors are also correlated with their past states, since the theory is deterministic. So, in the end, the hidden variable gets correlated with the future detector states. You can see that I didn’t mention anything about any special initial state. It does not matter. There is also no non-locality involved.

Andrei

Many of your arguments are based on fundamental misconceptions ( that I have already addressed):

– In an EPR experiment, if Alice and Bob are “spacelike separated”, there’s no way, in principle, to specify which measurement comes first.

In other words, it’s a meaningless statement to say that it was Alice’s measurement (e.g.) that firstly “caused” the collapse (or anything you like..) of the wavefunction, or determined the outcome of the other experiment!

Part of the subtlety is that such a temporal order between A and B requires an absolute reference frame and that’s not compatible with Relativity!

-The meaning of causality in (both Special and General) Relativity is, briefly, the following:

Only between events A and B that are causally (i.e. timelike or lightlike ) connected we can have a definite temporal order. Only in such cases we can have transmission of signals ( Causal influence ) between A and B and Only In one direction: if A is in the past light cone of B then the emission is from A to B, not the opposite.

Roughly speaking, in Standard QM , entangled particles in an EPR experiment are described by the same wavefunction, so they are not ” independent things” with pre-defined properties, before measurements. This is the famous ‘non separability’, the “holistic” aspect of QM.

-There is indeed something subtle, even mysterious, here. There is No causal influence, in the Relativistic sense, between A&B:

The impossibility of superluminal communication is fundamental in the standard theory, it is Not a practical problem, as I already clearly said before..

– But this non separability implies this peculiar kind of non locality ( the correlations that are predicted by QM and confirmed by experiments ) that cannot be explained classically.

– We have to be careful, though: “QM non locality” is Not the same thing as “violation of Relativistic locality/ Causality”. The latter ( if it was realised) would have way more dramatic consequences for physics (and our world ).

Note:

Many people are confused about topics that have to do with QM (especially entanglement) and Special/General Relativity (mostly about black holes, but not only).

The origin of this confusion has to do, in part, with low-quality science popularization: articles and videos that have serious problems with misconceptions of even basic textbook physics (sometimes), or with oversimplifications, hype, clickbait and the like.

There are exceptions , but they’re rare..

Unfortunately, there is no sign that the situation will be improved any time soon.

Dimitris Papadimitriou,

„Many of your arguments are based on fundamental misconceptions ( that I have already addressed)”

I would very much like you to provide a quote from what I said, followed by your rebuttal, since I don’t think you addressed any of my arguments.

„In an EPR experiment, if Alice and Bob are “spacelike separated”, there’s no way, in principle, to specify which measurement comes first.”

Exactly, this was my point all along. Relativity does not allow those measurements to be causally connected.

I’ll post again my main argument:

Consider the EPR-Bohm setup (with spins) and detectors fixed on Z. You measure A and get spin-UP.

We have:

P1: QM tells you that the state of B is now spin-DOWN.

Introduce the locality condition:

P2: the A measurement does not change the state of B.

So, it logically follows that:

C: the state of B before the A measurement had to be spin-DOWN as well (otherwise it changed).

So, you have 2 choices here:

Choice 1: reject P2, so that A measurement changes the state of B, or

Choice 2: accept P2, so the conclusion follows, the state of B before the A measurement had to be spin-DOWN.

You cannot have a third choice, because of logic (law of excluded middle).

Now you can see you have a problem. You reject both choices. You reject choice 1:

„There is No causal influence, in the Relativistic sense, between A&B”

You also reject choice 2:

„entangled particles in an EPR experiment are described by the same wavefunction, so they are not ” independent things” with pre-defined properties, before measurements.”

So, your position has been falsified. There is simply no logically coherent position compatible with all your claims, and words like „non separability”, „holistic”, „subtle”, „mysterious”, „peculiar”, „QM non locality” cannot change that.

„…correlations that are predicted by QM and confirmed by experiments that cannot be explained classically.”

I think I’ve provided you with a classical explanation of those correlations in my previous post, based on the EM fields associated with the detectors. You did not address that.

In QM, the “collapse of the wavefunction” is not an objective physical process that happens everywhere instantaneously. In EPR experiments, there is no absolute temporal order between measurements that are causally separated.

From Alice’s perspective, the collapse “happens” when her device gives a definite outcome. But from Bob’s perspective, it is his measurement that does the update.

Of course one can imagine a reference frame in which A & B events are simultaneous, for example, but there are other frames ( for example labs that are moving with constant velocity in a direction from A to B or the reverse) where a measurement event happens first. If special relativity holds, all these reference frames are equally valid.
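
The frame dependence of the time order can be checked with the Lorentz transformation, Δt′ = γ(Δt − vΔx/c²). A minimal sketch; the event coordinates and the function name are made up for illustration, with A at the origin and B measured 1 ns later and 1 km away in the lab frame:

```python
import math

C = 299_792_458.0  # speed of light in m/s

def time_gap_in_moving_frame(dt, dx, v):
    """Return t_B - t_A as seen from a frame moving with velocity v along x.

    dt = t_B - t_A and dx = x_B - x_A are the lab-frame separations.
    """
    gamma = 1.0 / math.sqrt(1.0 - (v / C) ** 2)
    return gamma * (dt - v * dx / C**2)

# Hypothetical numbers: B is measured 1 ns after A, 1 km away.
dt, dx = 1e-9, 1000.0
assert abs(dx) > C * abs(dt)  # the two events are spacelike separated

for v in (-0.5 * C, 0.0, 0.5 * C):
    gap = time_gap_in_moving_frame(dt, dx, v)
    order = "A first" if gap > 0 else "B first"
    print(f"v = {v / C:+.1f} c: t_B - t_A = {gap:+.3e} s ({order})")
```

For a frame moving from A towards B at half the speed of light the sign of the gap flips, so B comes first there, while the lab frame and the oppositely moving frame see A first.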

The so called “spooky action ” has nothing to do with causal influence from any of the measurement events to the other..

The collapse from a measurement in QM is only “relational”, it is “observer dependent” ( observer means anything that acts as a measuring device with any kind of record). This (along with the stochastic element of QM ) is essential for making QM compatible with Special Relativity.

QM was originally formulated in a Newtonian background, so people could pretend that there is a global notion of simultaneity and that the “collapse of the wavefunction” is instantaneous.

But it can be reconciled with, at least, special relativity in a Minkowskian background: both the existence and the tremendous success of QFTs are convincing evidence for that.

There are other approaches or alternatives ( like pilot wave theories) that do have problems with Lorentz invariance.

Having said all that, QM has indeed strange aspects, with these correlations that ignore temporal order or spatial distance and the measurement problem.

But it is compatible with relativistic locality/ causality, that’s exactly what I’m saying in all my comments.

Perhaps there is a deeper explanation that has to do with the fundamental nature of spacetime (or something else; this is a speculative area), but I don’t know if there is one, or what it is.

Andrei

I haven’t made any further comments about the various superdeterminist proposals, because I think it’s clear what the (insurmountable) problems with all of them are:

The statistical dependence ( in other words: the cooking of the experimental results in such a way that they reproduce something that looks exactly like QM/ entanglement, but it is “really” not; it’s only “effective”) requires one of the following:

1) Either you need violation of the causal structure (transmission of signals to the past light cones ) with all the consequences.. ( no compatibility with either Special or General Relativity).

2) or you need CTCs that have to be all over the place, with the associated unstable Cauchy horizons, the violation of energy conditions , precisely fine tuned boundary conditions, non globally hyperbolic spacetimes, so loss of predictability in the strong sense..

3) or, if you insist on globally hyperbolic spacetimes, you need infinitely fine tuned initial conditions.

I have seen several weak arguments about that issue, that all miss the point :

-Unlike what happens with classical chaos, where small perturbations can lead to entirely different classical histories, due to the sensitive dependence on the initial conditions, (but all these possible histories are obeying the same “physics”), in the case of superdeterminism, random perturbations will, generically, destroy or alter exactly these correlations that are necessary for the model to mimic the QM!

So, a perturbed superdeterminist model , generically, will not have the same “effective” physics ( it will not mimic entanglement etc. anymore!) so it is only a pointless exercise..

Dimitris Papadimitriou,

By mistake I’ve posted my answer below your post from October 22.

Andrei

Draw a horizontal line that represents a section of a Cauchy hypersurface ( where we specify our data).

Above this line ( that can be arbitrarily chosen ) draw the three past light cones ( that intersect this hypersurface) from A (Alice), B ( Bob) and P ( the source), with A&B spacelike separated as usual.

You’ll easily see that A&B past light cones only partially overlap and the past light cone from P “sees” only a portion of the hypersurface ( and the other two light cones).

It cannot be done otherwise! In a realistic cosmological model there are always spacetime regions (which are outside the past light cone of P), that can influence causally A&B.

This is very similar to what happens in real experiments, like that from Zeilinger and his team (2017) when they adjusted the A&B devices using the frequency from random photons from distant stars.

In principle, we can have causal influences ( which are outside the causal past of the source P) that go back as far in the past as we want.

That’s a basic reason for the failure of local hidden variable theories:

Without precise fine tuning of the data on the entire (perhaps spatially infinite) hypersurface, we can have only these correlations that are allowed by the causal structure.

But experiments show stronger correlations than that! And these experiments confirm the predictions of standard QM!
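
The gap between the two kinds of correlations can be made concrete with the CHSH combination S = E(a, b) − E(a, b′) + E(a′, b) + E(a′, b′), which satisfies |S| ≤ 2 for local models with setting-independent hidden variables. The sketch below compares a toy local model with the singlet-state prediction E(a, b) = −cos(a − b). The toy model (a uniformly distributed hidden angle, sign-valued outcomes) is an assumption chosen for illustration, not a model proposed by anyone in this thread, and it builds in exactly the statistical independence that superdeterminism rejects:

```python
import math

def sign(x):
    return 1.0 if x >= 0 else -1.0

def lhv_correlation(a, b, n=100_000):
    """E(a, b) for a toy local model: the hidden variable is an angle,
    uniformly distributed and independent of the settings a and b."""
    total = 0.0
    for k in range(n):
        lam = 2 * math.pi * (k + 0.5) / n      # evenly spaced hidden angles
        outcome_a = sign(math.cos(a - lam))    # depends only on a and lambda
        outcome_b = -sign(math.cos(b - lam))   # depends only on b and lambda
        total += outcome_a * outcome_b
    return total / n

def qm_correlation(a, b):
    """Singlet-state prediction of standard QM."""
    return -math.cos(a - b)

def chsh(E, a, a2, b, b2):
    """CHSH combination for a correlation function E."""
    return E(a, b) - E(a, b2) + E(a2, b) + E(a2, b2)

# Settings a, a', b, b' that maximize the quantum value
settings = (0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
s_lhv = chsh(lhv_correlation, *settings)
s_qm = chsh(qm_correlation, *settings)
print(f"|S| toy local model: {abs(s_lhv):.3f}")
print(f"|S| quantum singlet: {abs(s_qm):.3f}")
```

The toy model sits at the bound of 2, while the quantum value is 2√2, roughly 2.83.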

So, whatever you choose ( a globally hyperbolic spacetime or not) you need a miracle for superdeterminism. You need cooked up initial conditions ( with infinite precision) that have to mimic a much simpler theory in all possible situations in vast spatial and temporal distances in a complicated universe..

Saying that this is implausible is a huge understatement.

Perhaps a more accurate phrase is that it’s “next to impossible”.

The relational aspect of the measurement process in QM has been known since the last century. That does not mean, though, that we have to abandon any notion of objectivity, as many believe.

Yes, it’s meaningless to say that Alice’s (or Bob’s) measurement “collapsed first the wavefunction”, but when, later, they compare their records they’ll agree about the correlations, this is objective, there’s no ambiguity.

Other distant far away observers don’t know anything definite yet, though; there’s still a superposition according to them. So, “objectivity” is something that is built up gradually and is restricted by the light cone structure (in the framework of special relativity). It’s not the naive block universe “all at once” objectivity.

In the dynamical, time-dependent geometry of General Relativity things will not be that simple, but that’s already an open secret.


Dimitris Papadimitriou,

„Without precise fine tuning of the data on the entire (perhaps spatially infinite) hypersurface, we can have only these correlations that are allowed by the causal structure.”

I object to your use of the „fine tuning” argument. This is not the first time I have asked, but since you never answered, I’ll ask again:

Do you have a theory of the Big Bang itself that tells you how likely or unlikely a certain initial state of the universe is? If you don’t, saying that some initial state is unlikely is meaningless.

We already have a unified theory of GR and electromagnetism, the classical Kaluza-Klein theory. In order for superdeterminism to work I need to assume that the initial state of the universe satisfied the equations of this theory. Then, for any future time, including 2017 when the cosmic Bell test was performed, we know that those equations are satisfied. So, again, do you have any evidence that the initial state did not satisfy those equations?

„You need cooked up initial conditions (with infinite precision) that have to mimic a much simpler theory in all possible situations in vast spatial and temporal distances in a complicated universe.”

Again, I only need the initial state to be a valid physical state for the Kaluza-Klein theory. No other assumptions are necessary. I am not aware of any physicist claiming that the assumption that a past state was a valid state is „a miracle”.

To my knowledge, there is no experiment showing that Maxwell’s equations, or Einstein’s, are not satisfied. So, to the extent that we can test, the „miracle” happens. If you claim that those equations failed in the distant past, the burden is on you to provide the required evidence.

QM might be a „much simpler theory” but, as my argument proves, it’s a non-local theory that conflicts with a huge amount of evidence we have for SR. As explained earlier, the excuse that „we can’t use entanglement to communicate” cannot make the theory compatible with SR.

„Yes, it’s meaningless to say that Alice’s (or Bob’s) measurement “collapsed first the wavefunction”, but when, later, they compare their records they’ll agree about the correlations, this is objective, there’s no ambiguity.”

Right.

„Other distant far away observers don’t know anything definite yet, though; there’s still a superposition according to them.”

Who cares what those observers knew or didn’t? Their knowledge is completely irrelevant to my argument. The only data the argument depends on are the properly recorded experimental results. It does not matter when, how and why Alice or Bob knew this or that. When the experiment is done and the results are printed and placed on your desk, you use my argument to decide what happened. Did the A measurement change the B particle? No? OK, then the B particle was in the same state before the measurement. What is that state? A spin eigenstate (spin-DOWN). So B was always in a defined state of spin (spin-DOWN); the pre-measurement superposition simply reflected our incomplete knowledge of the true state. Again, if the particle was not spin-DOWN before the A measurement, it means that the A measurement perturbed/changed the state to spin-DOWN, meaning that a signal of some sort traveled instantly from A to B, violating relativity. Clear and simple.


Andrei, since I haven’t heard you make this particular claim before, let me stop you here: the original KK unification of GR and E&M is a toy model; it doesn’t work to describe our universe. The pattern of couplings of GR to particles is different from the pattern of couplings of E&M to particles, which you can’t fix without something more complicated like string theory. You also can’t get the different strengths of E&M and GR correct without having extra dimensions large enough that we would have spotted them in experiments by now.

It’s also totally irrelevant to your argument, because you could have said the same thing about pure E&M and GR without unifying them, just by coupling them the usual way. (And you have made that argument in the past, and I’ve responded to it, and I’m not bothering to get into it here because those discussions were pretty unproductive and I’d rather give free rein to Dimitris here.)


OK, true, I didn’t actually need the unified theory. I don’t remember your response to my argument. I’m usually careful not to repeat claims that were refuted. Can you please point me to that discussion? Thanks!


It’s not really one specific discussion. From my point of view, the sticking point here is “does stochastic electrodynamics actually work”, which is something we’ve debated on and off, unproductively. I don’t have the time to go through the claims, try to cobble something consistent together, and see if it works, so I only had general arguments for why the papers looked fishy; and you don’t have the expertise to do that, so you weren’t able to convince me in the other direction.

Dimitris may have a different angle. Dimitris, since it wasn’t clear that you caught on to this: Andrei expects that if you just apply E&M and GR without any exotic physics to a sufficiently realistic model of matter, you’ll get all the observations attributed to QM. He bases this expectation in part on papers various people have written on a (topic/subfield/crackpot idea) called stochastic electrodynamics. I don’t know whether he believes that this happens in general for every valid solution to E&M and GR coupled to (list of particles), or whether he thinks it only applies for a specific class of solutions with very specific boundary conditions. He makes the usual “fine-tuning is fine” arguments that would be made by someone who believes the latter, but he often writes as if he expects the former.


4gravitons,

I think there are two different discussions here.

The first deals with superdeterminism in general. My claim is that classical electromagnetism is a superdeterministic theory, so anyone who claims that superdeterminism involves conspiracies, a denial of the scientific method, fine-tuning and so on needs to explain why Maxwell’s theory was not rejected a long time ago for such reasons. This argument holds regardless of whether classical electromagnetism (in the form of stochastic electrodynamics) actually succeeds in reproducing QM. If it fails, it’s possible that another classical field theory would succeed.

The second discussion involves a concrete example of a superdeterministic theory, stochastic electrodynamics, which, according to the peer-reviewed literature, has been successful in reproducing a lot of so-called quantum results. The theory is quite old, about 80 years, and it is still actively pursued by, I think, 3 groups of physicists (Mexico, US and Netherlands). I know of no published rebuttal of the theory. So, while you can call it crackpot or whatever, your evidence is extremely weak.
