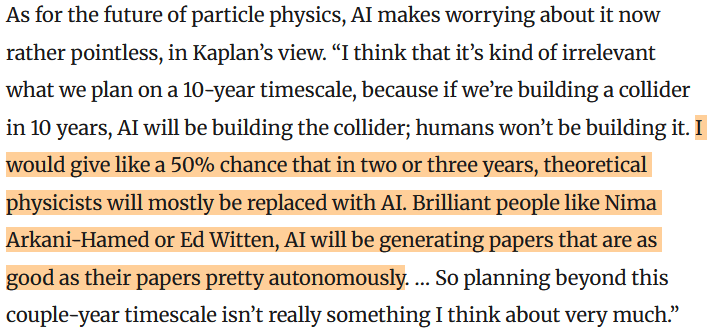

Quanta Magazine recently published a reflection by Natalie Wolchover on the state of fundamental particle physics. The discussion covers a lot of ground, but one particular paragraph has gotten the lion’s share of the attention. Wolchover talked to Jared Kaplan, the ex-theoretical physicist turned co-founder of Anthropic, one of the foremost AI companies today.

Kaplan was one of Nima Arkani-Hamed’s PhD students, which adds an extra little punch.

There’s a lot to contest here. Is AI technology anywhere close to generating papers as good as the top physicists, or is that relegated to the sci-fi future? Does Kaplan really believe this, or is he just hyping up his company?

I don’t have any special insight into those questions, about the technology and Kaplan’s motivations. But I think that, even if we trusted him on the claim that AI could be generating Witten- or Nima-level papers in three years, that doesn’t mean it will replace theoretical physicists. That part of the argument isn’t a claim about the technology, but about society.

So let’s take the technological claims as given, and make them a bit more specific. Since we don’t have any objective way of judging the quality of scientific papers, let’s stick to the subjective. Today, there are a lot of people who get excited when Witten posts a new paper. They enjoy reading his papers, they find the insights inspiring, and they love the clarity of the writing and the papers’ tendency to clear up murky ideas. They also find them reliable: the papers very rarely have mistakes, and don’t leave important questions unanswered.

Let’s use that as our baseline, then. Suppose that Anthropic had an AI workflow that could reliably write papers that were just as appealing to physicists as Witten’s papers are, for the same reasons. What happens to physicists?

Witten himself is retired, which for an academic means you do pretty much the same thing you were doing before, but now paid out of things like retirement savings and pension funds, not an institute budget. Nobody is going to fire Witten: there’s no salary to fire him from. And unless he finds these developments intensely depressing and demoralizing (possible, but it very much depends on how this is presented), he’s not going to stop writing papers. Witten isn’t getting replaced.

More generally, though, I don’t think this directly results in anyone getting fired, or in universities trimming positions. The people making funding decisions aren’t just sitting on a pot of money, trying to maximize research output. They’ve got money to be spent on hires, and different pools of money to be spent on equipment, and the hires get distributed based on what current researchers at the institutes think is promising. Universities want to hire people who can get grants, to help fund the university, and absent rules about AI personhood, the AIs won’t be applying for grants.

Funding cuts might be argued for based on AI, but that will happen long before AI is performing at the Witten level. We already see this happening in other industries or government agencies, where groups that already want to cut funding are getting think tanks and consultants to write estimates that justify cutting positions, without actually caring whether those estimates are performed carefully enough to justify their conclusions. That can happen now, and doesn’t depend on technological progress.

AI could also replace theoretical physicists in another sense: the physicists themselves might use AI to do most of their work. That’s more plausible, but here adoption still heavily depends on social factors. Will people feel like they are being assessed on whether they can produce these Witten-level papers, and that only those who make them get hired, or funded? Maybe. But it will propagate unevenly, from subfield to subfield. Some areas will make their own rules forbidding AI content, and there will be battles and scandals and embarrassments aplenty. It won’t be a single switch, with the technology alone setting the timeline.

Finally, AI could replace theoretical physicists in another way: people outside of academia could fill the field so much that theoretical physicists have nothing more that they want to do. Some non-physicists are very passionate about physics, and some of those people have a lot of money. I’ve done writing work for one such person, whose foundation is now attempting to build an AI Physicist. If these AI Physicists get to Witten-level quality, they might start writing compelling paper after compelling paper. Because of their origins, though, those papers will be specialized. Much as philanthropists mostly fund the subfields they’ve heard of, philanthropist-funded AI will mostly target topics the people running the AI have heard are important. Much like physicists themselves adopting the technology, there will be uneven progress from subfield to subfield, inch by socially-determined inch.

In a hard-to-quantify area like progress in science, that’s all you can hope for. I suspect Kaplan got a bit of a distorted picture of how progress and merit work in theoretical physics. He studied with Nima Arkani-Hamed, who is undeniably exceptionally brilliant but also undeniably exceptionally charismatic. It must feel to a student of Nima’s that academia simply hires the best people, that it does whatever it takes to accomplish the obviously best research. But the best research is not obvious.

I think some of these people imagine a more direct replacement process, not arranged by topic and tastes, but by goals. They picture AI sweeping in and doing what theoretical physics was always “meant to do”: solve quantum gravity, and proceed to shower us with teleporters and antigravity machines. I don’t think there’s any reason to expect that to happen. If you just asked a machine to come up with the most useful model of the universe for a near-term goal, then in all likelihood it wouldn’t consider theoretical high-energy physics at all. If you see your AI as a tool to navigate between utopia and dystopia, theoretical physics might matter at some point: when your AI has devoured the inner solar system, is about to spread beyond the few light-minutes when it can signal itself in real-time, and has to commit to a strategy. But as long as the inner solar system remains un-devoured, I don’t think you’ll see an obviously successful theory of fundamental physics.

“If you see your AI as a tool to navigate between utopia and dystopia, theoretical physics might matter at some point: when your AI has devoured the inner solar system, is about to spread beyond the few light-minutes when it can signal itself in real-time, and has to commit to a strategy. But as long as the inner solar system remains un-devoured, I don’t think you’ll see an obviously successful theory of fundamental physics.”

Could you explain that further? I’m having difficulty understanding it.

Sure. I think I hid a few assumptions there, so it’s worth unpacking.

By “obviously successful” in that last sentence, I mean a theory with technological implications, where you can tell which theory is right because it’s the theory that you have to use to accomplish things every day. Quantum mechanics meets that bar with, for example, transistors; general relativity does with its implications for GPS satellites. Without that, a theory can be well-confirmed, but it won’t be “obvious” in the same way.

For a new theory of fundamental physics to be obviously successful in that way, it would have to have practical implications, and that means we’d have to be doing something for which a theory like that matters. And the problem is that a new theory of fundamental physics, one that goes deeper than the Standard Model, is a theory that matters only at very high energies. It can matter if we’re routinely doing things that involve those energies, which would mean living in a world in which colliders are not exorbitantly expensive, or if we’re making plans for interacting with natural phenomena with those kinds of energies, like black holes or the expansion of the universe.

Thus, these things don’t really matter in that way unless we can harness enormous amounts of energy or we’re planning to travel across enormous distances in space. That could matter for an AI, if you’re really really optimistic about where AI is going and think it will solve a huge number of technological problems and totally reshape the world, ushering in a utopia or dystopia. But even then, it’s only going to matter when the AI itself has access to huge amounts of energy or is planning on traveling huge distances in space, and whether or not the AI has done something to dramatically transform (“devour”) the inner solar system seems like the first relevant bar there. If you’re expanding beyond that point, then you stop being able to easily exchange messages at the speed of light, and you need to have a clearer idea in advance of what you’re doing. If not, you can always wait to figure out fundamental physics until it actually matters.

I note that no one comments on the first part of Jared Kaplan’s statement – that in 10 years, AI will also be building the colliders (presumably via robots).

Kaplan must be speaking from a worldview akin to the old idea of a “technological singularity”, in which superhuman intelligence comes pouring out of Pandora’s box and changes everything with extreme rapidity, to the point that humans are either obsolete or in a symbiosis with AI partners, in every sphere of activity.

This view makes a lot of sense to me, but it’s naturally apocalyptic and science-fictional. Superintelligence emerges in a data center somewhere, and within a week it has conquered the whole cloud, and the skies are darkening with nanobots that will dismantle the planet – that kind of scenario.

In a way, the higher end of AI safety is concerned with aiming for a version of that scenario, in which the same titanic forces have been unleashed, but it still manages to be human-friendly. At the level of propaganda, this means vague pastoral visions of humans living in utopian space habitats where they get to live like Greek gods in a Star Trek universe, and so forth, with a benevolent superintelligence in the background as infrastructure.

I suppose this is materially possible, but to me it seems a bit unlikely, even if we succeed in “aligning” superintelligent AI to “human values”. I suspect that even in that case, existence ends up being transformed, and us along with it, into something that no human ever foresaw, but which in retrospect would be acceptable to an ex-human being.

Sorry if that sounds insane, but it’s apparent that technology unleashed is replete with possibilities of life and mind, far beyond those that pre-technological nature ever managed to devise. At the edge, the AI race now involves just blindly reaching for power, with little idea of the consequences, because if you don’t do it, someone else will.

Now let me retreat to something a little more human. In a comment above, you seem to say that a theory of everything wouldn’t have technological consequences until posthuman civilization is dealing with astronomical energies and scales. From an EFT perspective, this is a logical statement, but I wouldn’t have absolute confidence in it: there are various ways in which a UV theory might have actionable consequences in the infrared. The most basic of these might be an ability to predict the constants of the Standard Model, which would be an intellectual revolution, if not necessarily a technological revolution. Looking beyond that, there are various ways in which e.g. exotic new stable states of matter might show up; if they come from the dark sector, they might already be floating around somewhere nearby.

Someone on (bluesky or X, don’t remember) did question the first part when I posted this. I think you can parse it in the same sense as “physicists will build the collider”, by hiring contractors etc. But of course he could easily mean the robots thing, it wouldn’t be out of character.

Regarding usefulness of ToEs and friends: Intellectual revolutions are of course cool, but not exactly priorities for achieving other goals: there are much cheaper ways to make people feel enlightened. I’m pretty skeptical that there are useful applications of the dark sector available at our technological level, since you’d have to manipulate and interact with dark-sector states gravitationally, so unless they’re a lot denser than normal matter they’d be less useful technologically for that purpose. (Do you have something concrete in mind there?) And yeah, more generally, there’s the eternal “we could be wrong”. There might be some sort of UV-IR mixing nobody anticipated, or Sabine Hossenfelder might figure out how to deterministically predict the outcomes of quantum experiments. But I don’t see any reason to expect anything like that, or even think it’s likely.

The most unexpected technological use for BSM physics that I heard recently came from Michael Peskin during the Professor Dave video in which Peskin and five others were tag-teaming against Sabine. Peskin’s idea was that something akin to the Callan-Rubakov effect might be used to efficiently turn matter into antimatter, thereby providing a cheap source of antimatter fuel. That strikes me as something that could involve dark sector physics, if the dark sector happens to take the right form.

Eh…

I have no idea what he had in mind, haven’t seen the video. But I think that one thing you might be missing here is that the “intensity frontier” is also a frontier. Some things aren’t gated by energy, yes, but gathering and manipulating particles that interact extremely weakly is itself something that costs a lot to do.

In a sense, the beginning of nuclear physics was an intensity frontier thing, and at the time the intensity frontier was within reach on an industrial scale, with centrifuges and the like. Now, dark matter experiments are huge, and elsewhere in the intensity frontier we’ve instrumented kilometers of Antarctic ice. There isn’t really room for something we can gather and use on an industrial scale that hasn’t been discovered yet.

It seems to me that progress in science is primarily dependent on new information and knowledge, not simply recycling and reworking existing knowledge. Would the Theory of Relativity have made sense at the time without the Michelson–Morley experiment?

Of course, AI could tease out patterns in existing data to which we are oblivious, but a lot of new knowledge requires complicated and expensive things, like colliders and space telescopes, and time and effort to build them.

There’s a good chance you’re already in the loop, but would you be interested in writing a post on the recent paper on gluon amplitudes where GPT-5.2 conjectured and then proved the validity of a formula for gluon amplitudes in the half-collinear regime? I would love to get your perspective. https://arxiv.org/abs/2602.12176

Heh, someone else asked me about this on this post, and I said they ought to have asked here instead!

You can check that comment thread for some initial thoughts. I’ll also be saying a bit more in next week’s post. I will probably do more of a deep dive at some point, but I’m still figuring out whether I’m going to get paid to do it elsewhere vs. doing it here for free.
